Inside ext4 Journaling and fsync: Why Databases Stall

Last updated: May 10, 2026

Databases stall on ext4 fsync() because the call can turn into a wait graph: dirty file data, delayed allocation, an ext4/JBD2 journal commit, and a block-device cache flush may all sit between a WAL commit and the client response. The key caveat is safety. Latency stalls are not the same thing as EIO writeback failures. Treat ext4 fsync database stalls as a coupling problem first, then prove which wait edge is hot.

Quick nav: The Stall Is a Wait Graph, Not a Slow System Call · Where ext4 Couples One Commit to Other Dirty Work · Why WAL Commits and Checkpoints Stall Differently · The Three Latency Sources Hidden Behind fsync() · Fast Commits Help Metadata, Not Every Database Stall · The Fsyncgate EIO Trap Is a Different Problem · How to Prove ext4 Is the Bottleneck on a Live Host · What Actually Moves the p99 Without Lying About Durability · References · Related reading

- ext4’s default

data=orderedmode journals metadata, while forcing relevant data blocks out before the metadata commit is durable. - PostgreSQL on Linux normally uses

fdatasyncfor WAL durability, but that still enters the filesystem and block flush path. - Fast commits reduce some ext4 metadata commit cost, but they fall back to full JBD2 commits and do not remove data writeback or hardware flush waits.

- Since Linux 4.13, writeback

EIOreporting is better, but anEIOis a data-safety event, not a normal p99 latency spike.

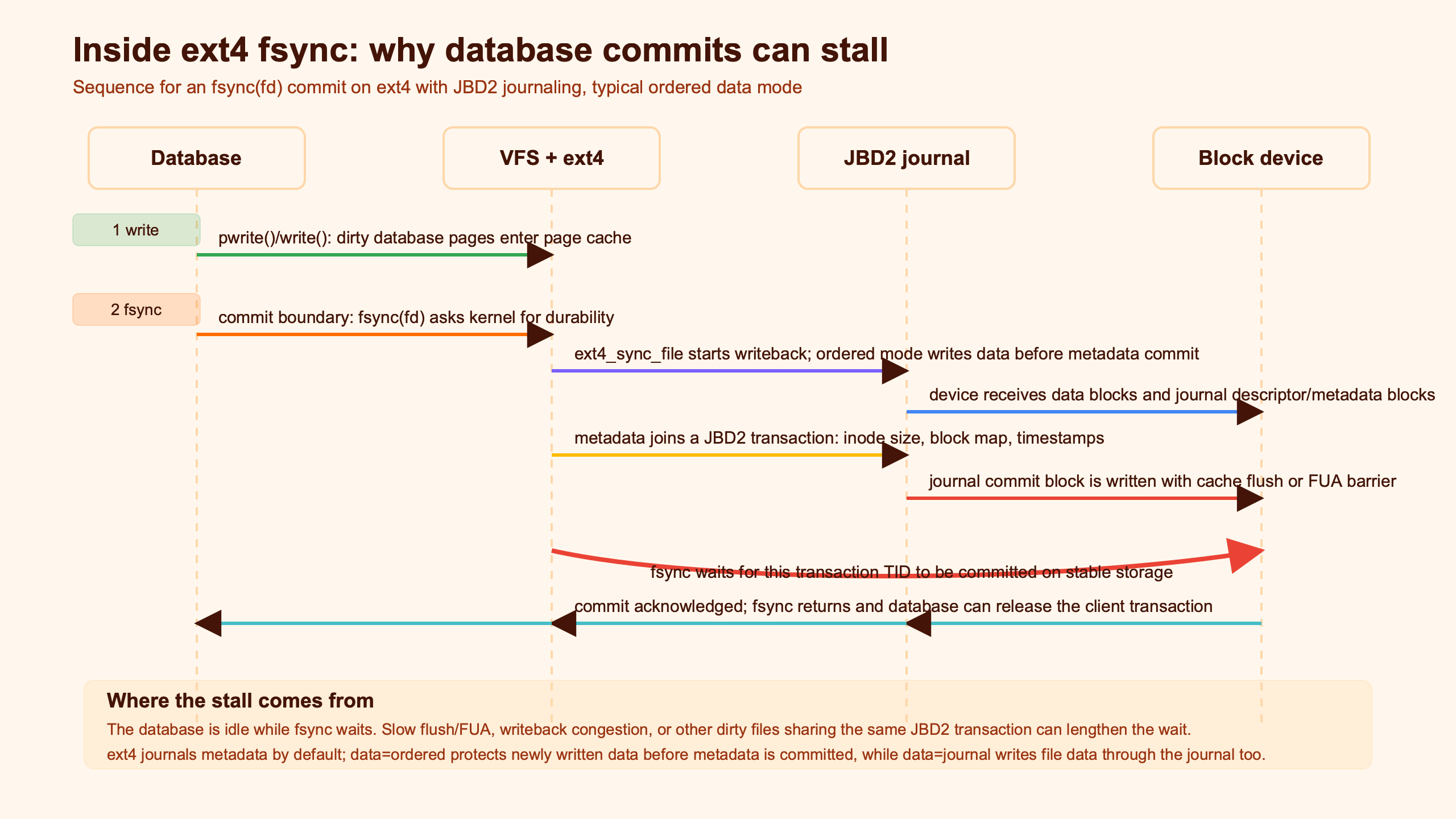

The Stall Is a Wait Graph, Not a Slow System Call

A database commit does not wait on one isolated thing called “fsync.” It waits on a chain. The database asks the kernel for durability, ext4 prepares dirty ranges and metadata, JBD2 commits a transaction, and the block layer may issue a flush or FUA write so volatile device-cache ordering is honored.

The Linux fsync(2) manual page says the call flushes modified file data and associated metadata, including disk cache flushes when present. ext4 then adds its own journaling contract. The Linux kernel JBD2 documentation describes the journal commit record as the point that makes a transaction replayable after a crash.

For a database, that means a tiny logical action can wait behind physical work that is not tiny. A PostgreSQL transaction may append a small WAL record, call fdatasync(), and then wait because the filesystem transaction carrying that metadata has not committed yet. SQLite with PRAGMA synchronous=FULL can hit the same shape through its VFS xSync path, even though the database file format and commit protocol are different.

The diagram’s useful idea is the fan-in: one database durability call points to several queues, not a single disk write. If p99 latency rises, the next question is not “is fsync slow?” It is “which edge of the wait graph is slow: data writeback, JBD2 commit, checkpoint pressure, or the storage flush?”

Where ext4 Couples One Commit to Other Dirty Work

ext4 couples work through delayed allocation, ordered data mode, and shared journal transactions. The surprise is that your database may wait for blocks that are not part of the user transaction the application just committed.

The ext4 admin guide documents the important default: in data=ordered, data blocks are forced to the main filesystem before their metadata is committed to the journal. This is not full data journaling. It is an ordering rule that prevents stale block exposure after a crash, but it also means metadata durability can depend on data writeback finishing first.

Delayed allocation makes the coupling easy to miss. A buffered write can sit in page cache without final block allocation. When writeback finally happens, ext4 chooses blocks, updates extent metadata, and ties that metadata to a JBD2 transaction. If a database WAL file, a log shipper, and a background bulk writer all dirty the same ext4 filesystem during the same commit window, they can meet at the journal even if their application-level data is unrelated.

This is the case many search results skip. A small fsync() on a database log can be delayed by a larger filesystem transaction already in flight. The 2017 USENIX ATC paper iJournaling: Fine-Grained Journaling for Improving the Latency of Fsync System Call names the same core issue: compound journaling can include irrelevant data and metadata in the transaction path for an fsynced file.

# Reproduction scaffold for a Linux host with ext4 mounted at /mnt/ext4.

# Run the database workload and the dirty writer on the same filesystem,

# then compare with WAL moved to a separate filesystem or device.

pgbench -N -c 32 -j 8 -T 300 postgres

fio --name=dirty \

--rw=write \

--bs=1m \

--size=8g \

--directory=/mnt/ext4 \

--iodepth=1 \

--direct=0The important control is not the raw throughput from fio. It is whether the database commit p99 rises only when the dirty writer shares the ext4 journal and block-device flush path. If moving WAL to another device cuts the p99 tail while average CPU use barely changes, the stall was probably filesystem or storage coupling, not query planning.

Why WAL Commits and Checkpoints Stall Differently

WAL commits and checkpoints both call sync primitives, but they stress different parts of ext4. WAL commit latency is usually a small, frequent durability wait. Checkpoint latency is broader: many dirty database pages reach the filesystem, then the database later waits for those pages to become durable.

PostgreSQL’s WAL configuration docs state that fsync and related methods are used so the cluster can recover consistently after an operating-system or hardware crash. The same page lists wal_sync_method choices such as fdatasync, fsync, open_datasync, and open_sync, with fdatasync the default on Linux and FreeBSD.

That distinction matters when reading graphs. A WAL commit stall often appears as client-visible commit latency: transactions pause at commit time, but query execution may look normal. A checkpoint stall often appears as a wider I/O pressure event: background writer activity rises, block-device await rises, and later commit calls may suffer because the filesystem and device are already busy draining older dirty data.

SQLite exposes the tradeoff more directly. The SQLite synchronous pragma docs say FULL uses the VFS sync method to make content reach stable storage before continuing. In WAL mode, NORMAL syncs less often and may lose durability across power loss, even though transactions remain consistent. That is why switching SQLite from FULL to NORMAL can make stalls disappear while changing the crash contract.

The Three Latency Sources Hidden Behind fsync()

Most ext4 fsync database stalls reduce to three wait sources: data writeback, journal commit, and hardware flush. They can stack, so a single p99 event may include all three.

| Wait source | What the database sees | Linux evidence | Durable fix |

|---|---|---|---|

| Data writeback before ordered metadata commit | Small WAL or SQLite commit waits while bulk dirty pages drain | High write await, dirty pages rising, ext4 sync time larger during background writers | Separate WAL or database log I/O, lower dirty limits, reduce checkpoint burst size |

| JBD2 transaction commit | Many tasks briefly wait on jbd2/* or jbd2_log_wait_commit |

jbd2_start_commit to jbd2_end_commit spans match p99 commit spikes |

Batch commits, enable fast commits where suitable, avoid unrelated metadata churn on the same filesystem |

| Block-device flush or FUA completion | Latency follows storage cache flush cost more than write size | Flush requests show long completion time; iostat -x await and %util spike |

Use storage with power-loss protection, reduce sync frequency safely, split write-ahead log from noisy data writes |

| Checkpoint pressure | Commit p99 rises after large dirty-page bursts, not necessarily at steady state | Database checkpoint counters and kernel dirty writeback move together | Smooth checkpoints, size WAL and buffers for steadier writeback, avoid sharing the same journal with bulk jobs |

The hardware flush piece is where unsafe advice often enters. ext4 mount options allow barriers to be disabled, and the ext4 admin guide says barriers enforce proper ordering of journal commits when volatile disk write caches are present. Disabling them can reduce latency on some stacks, but it trades away an ordering guarantee unless the storage stack has real power-loss protection and the full path is known.

Fast Commits Help Metadata, Not Every Database Stall

ext4 fast commits reduce part of the journal path by recording compact metadata deltas for eligible operations. They do not remove the need to write database data, wait for dirty pages, or complete storage flushes.

The kernel’s JBD2 documentation says fast commits in data=ordered store the minimal delta needed to recreate affected metadata in a fast-commit area shared with JBD2. It also states the fallback rule: when the fast-commit area fills, when a fast commit is not possible, or when the regular commit timer fires, ext4 performs a traditional full commit.

That fallback rule is the database caveat. If the stall is mostly metadata commit overhead, fast commits can help. If the p99 is dominated by data writeback from checkpoints or by a slow NVMe flush, the fast-commit path is not the main wait. The database still needs the WAL record durable, and the device still has to report completion.

# Check whether a filesystem was created with fast_commit support.

sudo tune2fs -l /dev/nvme0n1p2 | grep 'Filesystem features'

# On systems exposing ext4 fast-commit stats, inspect hit and fallback counts.

cat /proc/fs/ext4/nvme0n1p2/fc_infoThe practical reading is straightforward: fast commits are worth measuring for fsync-heavy metadata workloads and small-file workloads. For database stalls, pair the fast-commit counters with JBD2 tracepoints and block flush latency. If fallbacks rise during checkpoints, or if block flush completion time is already the p99, fast commits are not the whole answer.

The Fsyncgate EIO Trap Is a Different Problem

An fsync() latency spike means the durability wait took too long. An fsync() returning EIO means the kernel is reporting a synchronization failure. Those belong in different incident runbooks.

The Linux fsync(2) manual says EIO can relate to data written through another file descriptor for the same file, and that since Linux 4.13 writeback errors are reported to file descriptors that might have written the failed data. That improvement does not turn a failed fsync() into a retry loop. A database that has already lost a writeback error may not be able to prove which durable state exists on disk.

This is why “turn off fsync” is not a performance fix for production data. PostgreSQL’s own documentation says disabling fsync can yield a performance benefit, but can also cause unrecoverable corruption after power loss or system crash, and should be limited to cases where the entire database can be recreated from outside data.

Keep the taxonomy clean:

- Normal latency stall:

fsync()succeeds, but took 80 ms, 400 ms, or seconds because it waited behind writeback, JBD2, or flush work. - Checkpoint-induced stall: sync latency rises because the database and kernel are draining a large writeback wave.

- EIO safety failure:

fsync()returns an error; the database must treat that as possible durability loss, not as a slow commit.

How to Prove ext4 Is the Bottleneck on a Live Host

Prove ext4 with time alignment. Capture database commit latency, ext4 sync events, JBD2 commit events, block completion, and device await in the same window. Average query latency is too far away from the filesystem path.

A useful trace does not need a synthetic benchmark table unless the environment is fully reproducible. Record the Linux kernel version, ext4 mount options, filesystem features, storage model or cloud volume type, database version, workload command, and trace window. Without those details, raw p50 and p99 numbers are usually less useful than the timeline that shows what the database was waiting for.

sudo trace-cmd record \

-e ext4:ext4_sync_file_enter \

-e ext4:ext4_sync_file_exit \

-e jbd2:jbd2_start_commit \

-e jbd2:jbd2_end_commit \

-e block:block_rq_issue \

-e block:block_rq_complete \

-- sleep 300

trace-cmd report | lessThe trace-cmd manual describes it as a front end to Linux ftrace. For this investigation, the useful output is the timestamp gap: did the slow database commit overlap a JBD2 transaction, a block flush completion, or a long data writeback phase?

sudo bpftrace -e '

kprobe:ext4_sync_file { @ext4_start[tid] = nsecs; }

kretprobe:ext4_sync_file /@ext4_start[tid]/ {

@ext4_sync_ms = hist((nsecs - @ext4_start[tid]) / 1000000);

delete(@ext4_start[tid]);

}

kprobe:jbd2_log_wait_commit { @jbd2_start[tid] = nsecs; }

kretprobe:jbd2_log_wait_commit /@jbd2_start[tid]/ {

@jbd2_wait_ms = hist((nsecs - @jbd2_start[tid]) / 1000000);

delete(@jbd2_start[tid]);

}'Pair that with device stats and database counters:

iostat -x 1

grep -E 'Dirty|Writeback' /proc/meminfo

ps -eo state,wchan:32,comm | grep -E 'jbd2|fsync|postgres|mysqld|sqlite'For PostgreSQL, compare commit-latency histograms with checkpoint timing and WAL sync timing. For SQLite, compare transaction latency under synchronous=FULL, NORMAL, and WAL checkpoint activity. The goal is not to prove ext4 is “bad.” The goal is to decide whether the p99 lives in the filesystem wait graph or above it.

An ext4/JBD2/block-layer trace visual should show aligned timestamps for the database commit, ext4_sync_file, jbd2_start_commit, jbd2_end_commit, and block request issue/complete events. The strongest evidence is not a standalone chart; it is a matched timeline where a slow commit lines up with a journal wait, a flush wait, or a dirty-writeback wave.

What Actually Moves the p99 Without Lying About Durability

The best fixes reduce coupling or reduce sync frequency under an explicit durability contract. The worst fixes hide the wait by weakening crash guarantees without naming the loss mode.

Start with batching. PostgreSQL already benefits from group commit when concurrent sessions commit near each other. Application-side batching, fewer single-row commits, and controlled asynchronous commit for data that can be replayed from a queue can cut sync frequency. This is for event streams, caches, derived tables, and rebuildable ingest buffers. It is not for financial ledgers or primary state unless the business contract allows loss.

Next, separate WAL from noisy data. A dedicated filesystem or device for WAL reduces the chance that a WAL fdatasync() waits behind bulk dirty writeback from data files, backups, log rotation, or container layers. This helps most when traces show WAL commits overlapping unrelated ext4/JBD2 or block flush work. It helps less when the device itself has poor flush latency.

Then smooth dirty writeback. Huge dirty-page waves create checkpoint cliffs. Tune database checkpoint size and pacing before touching filesystem safety. On Linux, review vm.dirty_background_bytes, vm.dirty_bytes, cgroup writeback behavior, and the database’s own checkpoint controls. The target is boring I/O: steady writeback and shorter queues, not impressive burst throughput.

Storage choice matters because fsync() ultimately trusts the device’s completion report. Enterprise SSDs with power-loss protection usually have much better and more honest flush behavior than consumer drives with volatile caches. RAID controllers, hypervisors, cloud block devices, and network storage can each add their own cache and flush semantics, so measure the actual stack the database uses.

Be explicit about unsafe shortcuts. mount -o barrier=0, data=writeback, PostgreSQL fsync=off, and SQLite synchronous=OFF can all reduce waits in selected tests. They are valid only when the data can be recreated, when the whole storage path has verified power-loss protection, or when the environment is disposable. They are not ext4 performance fixes for durable primary data.

The practical mental model is this: fsync() is a join point. ext4, JBD2, and the block layer decide how much earlier work must join before the database can continue. Fix the p99 by removing unrelated work from that join point, pacing the work that remains, and using storage that completes flushes predictably.

References

- Linux kernel ext4 JBD2 journal documentation

- Linux kernel ext4 admin guide for data modes, commit interval, and barriers

- Linux man-pages fsync(2) reference

- PostgreSQL write-ahead log and fsync configuration documentation

- SQLite PRAGMA synchronous documentation

- trace-cmd manual for Linux ftrace capture