The State of the Shell: Advanced Techniques and Modern Trends in Linux Scripting

In the ever-evolving world of Linux, from new kernel releases to developments in desktop environments like GNOME and KDE Plasma, the humble shell script remains a cornerstone of administration, automation, and development. Far from being a relic of the past, shell scripting is more relevant than ever, acting as the essential glue in modern DevOps, cloud infrastructure, and containerized workflows. While basic commands are a starting point, mastering intermediate and advanced techniques is what separates a novice from a seasoned Linux professional.

This article dives into the current landscape of Linux shell scripting, exploring the modern tools, advanced practices, and security considerations that define effective scripting today. We’ll move beyond simple `echo` statements and `for` loops to the techniques used to build robust, efficient, and secure automation pipelines. Whether you’re managing a fleet of Red Hat servers or customizing a personal Arch Linux desktop, these insights will help you leverage the full power of the command line.

The Evolving Shell Landscape: Beyond Traditional Bash

For decades, Bash (Bourne-Again Shell) has been the de facto standard shell on most Linux distributions. However, the open-source community is always innovating, and recent trends show a significant shift towards more user-friendly and powerful interactive shells, while also enhancing Bash itself with modern tooling.

The Rise of Zsh and Fish

macOS switched its default shell to Zsh (Z Shell) with Catalina, and Zsh has become a popular choice for interactive use on Linux as well. Zsh supports most of the syntax Bash users already know, although it is not a strict superset of Bash; its real power lies in its advanced tab-completion, improved globbing, and a massive plugin ecosystem, famously managed by frameworks like “Oh My Zsh”. These features provide a much richer interactive experience, making daily command-line work faster and less error-prone.

Similarly, the fish shell focuses on user-friendliness “out of the box.” Fish features intuitive syntax, autosuggestions based on history, and readable syntax highlighting without requiring complex configuration. Its syntax is deliberately not POSIX-compatible, so Bash scripts generally cannot run unchanged under it. While Bash remains the king for portable scripting (due to its ubiquity on servers), many developers and administrators now use Zsh or Fish for their daily interactive terminal sessions, improving productivity on everything from Fedora workstations to custom Gentoo builds.

```bash
# Traditional Bash loop to rename .txt to .md
for f in *.txt; do
    mv -- "$f" "${f%.txt}.md"
done
```

```fish
# Fish version of the same task; 'string replace -r' rewrites only the
# trailing .txt, so names containing other dots are handled correctly
for f in *.txt
    mv -- $f (string replace -r '\.txt$' '.md' -- $f)
end
```

Modernizing Bash with Starship and Advanced Tools

Even if you stick with Bash, you don’t have to be left in the past. Plenty of tools can supercharge any shell. A prime example is Starship, a minimal, fast, and highly customizable cross-shell prompt. It can display context-aware information, such as the current Git branch, Kubernetes context, or Python virtual environment, providing critical information at a glance. This brings a modern, “smart” feel to even the most traditional Bash setup, reflecting a broader trend in enhancing developer experience across all platforms.
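As a minimal sketch of how this is usually wired up, the fragment below could be added to `~/.bashrc`. It assumes Starship is already installed and on your `PATH`; the guard around it is our own addition so the same file keeps working on machines where it is missing.

```bash
# ~/.bashrc (fragment)
# Enable the Starship prompt only when the binary is available,
# so this file still loads cleanly on hosts without it.
if command -v starship > /dev/null; then
    eval "$(starship init bash)"
fi
```

Open a new terminal (or run `source ~/.bashrc`) for the prompt to take effect.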

Advanced Scripting Techniques for Robust Automation

Writing a script that works is one thing; writing a script that is robust, maintainable, and fails gracefully is another. Modern automation demands a higher standard of quality, incorporating principles from software development into shell scripting.

Mastering Functions and Sourcing Libraries

For any script longer than a few dozen lines, modularity is key. Breaking down logic into functions makes your code cleaner, easier to debug, and reusable. A common practice in Linux administration is to create “library” files containing shared functions (e.g., `logging.sh`, `utils.sh`). These libraries can then be loaded into your main scripts with `source`, preventing code duplication and promoting a consistent structure across your automation projects.
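The library pattern can be sketched as follows. To keep the example self-contained, it writes a tiny `logging.sh` into a temporary directory and then sources it; in a real project the file would simply live in your repository. The function names `log_info` and `log_error` are illustrative, not a standard.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical library location; generated here only so the sketch runs
# on its own. Normally this would be a file such as lib/logging.sh.
lib_dir=$(mktemp -d)
trap 'rm -rf "$lib_dir"' EXIT

cat > "$lib_dir/logging.sh" <<'EOF'
# logging.sh: shared logging helpers (illustrative names)
log_info()  { printf '[INFO] %s\n' "$*"; }
log_error() { printf '[ERROR] %s\n' "$*" >&2; }
EOF

# 'source' runs the file in the current shell, so the functions it
# defines become available to the rest of this script.
source "$lib_dir/logging.sh"

log_info "library loaded"
log_error "this message goes to stderr"
```

When the library ships next to the script, a common way to locate it regardless of the caller’s working directory is `source "$(dirname "${BASH_SOURCE[0]}")/logging.sh"`.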

Advanced Error Handling with `set` and `trap`

By default, a Bash script keeps running after a command fails, which can lead to disastrous consequences, like deleting the wrong files or pushing a broken container image. To prevent this, modern scripts should begin with a “strict mode” declaration.

`set -euo pipefail` is a powerful combination:

- `-e`: Exit immediately if a command exits with a non-zero status. Commands tested in an `if`, `while`, `&&`, or `||` context are exempt.
- `-u`: Treat unset variables as an error and exit immediately.
- `-o pipefail`: Make a pipeline return the exit status of the last (rightmost) command that failed, rather than always returning the status of the final command.
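To see what each option changes, the following sketch runs small one-liners in child `bash -c` processes and records their exit statuses. The variable names are our own; the child shells keep the options from affecting the demonstration script itself.

```bash
#!/usr/bin/env bash

# 'false | true' is a pipeline whose first command fails.
# Without pipefail, the pipeline reports the status of 'true'.
bash -c 'false | true'
status_default=$?

# With pipefail, the failure of 'false' is reported instead.
bash -c 'set -o pipefail; false | true'
status_pipefail=$?

# With -e, the shell stops at the failing command, so the echo never runs.
output_errexit=$(bash -c 'set -e; false; echo "not reached"')
status_errexit=$?

# With -u, expanding an unset variable aborts the shell with an error.
bash -c 'set -u; echo "$this_variable_is_unset"' 2> /dev/null
status_nounset=$?

echo "default pipeline status:  $status_default"
echo "pipefail pipeline status: $status_pipefail"
echo "errexit status: $status_errexit, output: '$output_errexit'"
echo "nounset status: $status_nounset"
```

The first status is zero and the other three are non-zero, which is exactly the difference between a script that silently carries on and one that stops at the first problem.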

Furthermore, the trap command is an essential tool for ensuring cleanup actions occur, no matter how the script exits. You can use it to remove temporary files, close database connections, or log a final status message.

#!/bin/bash

set -euo pipefail

# Create a temporary directory for our work

# The -d flag ensures it's a directory. mktemp is crucial for security.

TEMP_DIR=$(mktemp -d)

# Define a cleanup function

cleanup() {

echo "Performing cleanup, removing ${TEMP_DIR}..."

rm -rf "${TEMP_DIR}"

}

# Set the trap: call the cleanup function on EXIT, ERR, or INT signals

trap cleanup EXIT ERR INT

echo "Created temporary directory: ${TEMP_DIR}"

cd "${TEMP_DIR}"

echo "Doing some work here..."

touch file1.txt

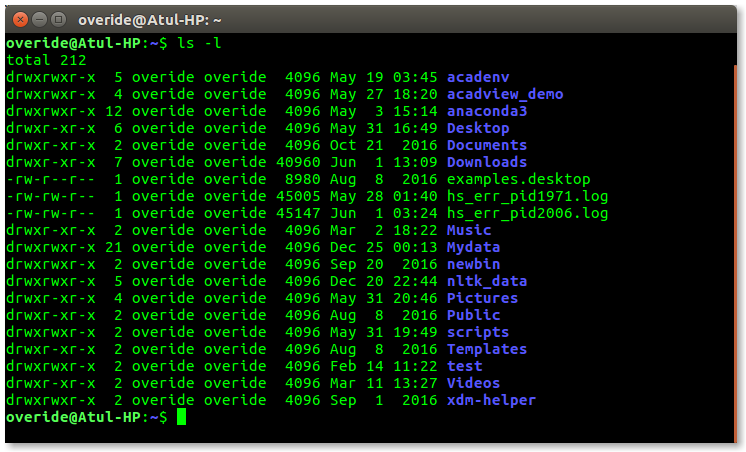

ls -l

# This command will fail, triggering the 'ERR' trap and then 'EXIT'

echo "Now, let's run a command that will fail."

ls /nonexistent-directory

# This line will never be reached because of 'set -e'

echo "Script finished successfully."Shell Scripting in the Modern DevOps and Cloud Ecosystem

The rise of containers and cloud-native technologies has created new opportunities and requirements for shell scripting. Scripts are no longer just for managing local files; they are now orchestrating complex deployments across distributed systems.

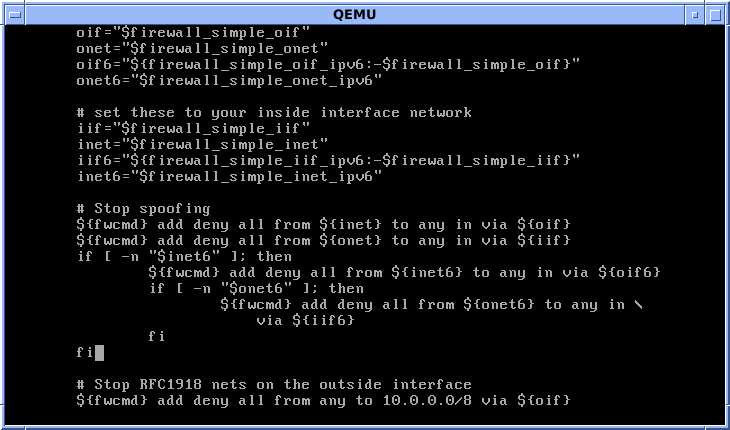

Scripting for Containers: Docker, Podman, and Kubernetes

Whether you use Docker or Podman, shell scripts are indispensable for automating container lifecycles. They are used to build images, tag them, push them to registries, and run them with the correct parameters. In the Kubernetes world, shell scripts are often used as wrappers around `kubectl` or `helm` to automate deployments, run database migrations as Jobs, or perform health checks.

This is a core part of modern CI/CD, where tools like GitLab CI and GitHub Actions rely heavily on shell commands executed by their runners to build, test, and deploy applications. A simple script can define an entire deployment pipeline, making automation accessible and transparent.
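A build-and-push wrapper of this kind might look like the sketch below. Everything configurable here is an assumption made for illustration: the image name `registry.example.com/myapp`, the `dev` tag, and the `DRY_RUN` and `ENGINE` variables are hypothetical. By default the script only prints the commands it would run, so it is safe to try without a container engine installed.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical defaults; override them from the environment or CI settings.
IMAGE="${IMAGE:-registry.example.com/myapp}"
TAG="${TAG:-dev}"
DRY_RUN="${DRY_RUN:-1}"      # 1 = only print commands, 0 = execute them
ENGINE="${ENGINE:-docker}"   # set ENGINE=podman to use Podman instead

# Print the command, and execute it only when DRY_RUN is 0.
run() {
    printf '+ %s\n' "$*"
    if [[ "$DRY_RUN" == "0" ]]; then
        "$@"
    fi
}

# Build the image from the current directory, then push it to the registry.
build_and_push() {
    local ref="${IMAGE}:${TAG}"
    run "$ENGINE" build -t "$ref" .
    run "$ENGINE" push "$ref"
}

build_and_push
```

Routing every command through a small `run` helper keeps the pipeline transparent: the log shows exactly what was executed, and the same script can be rehearsed locally before a CI job runs it with `DRY_RUN=0`.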

Processing Structured Data with `jq` and `yq`

A significant trend in DevOps is the move away from parsing structured output with `grep`, `sed`, and `awk`. Modern APIs and configuration tools predominantly use structured data formats like JSON and YAML. To handle this, a modern scripter’s toolkit should include `jq` (for JSON) and `yq` (for YAML).

These command-line utilities are purpose-built to parse, filter, and transform structured data. Instead of relying on brittle regular expressions, you can use them to reliably extract a value from a complex API response or update a field in a YAML configuration file. Their importance cannot be overstated in a world driven by APIs and infrastructure-as-code.
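As a small illustration that needs no live API, the sketch below filters an invented JSON document. The helper name `names_in_phase` and the sample data are hypothetical. It passes the shell value to `jq` with `--arg` instead of splicing it into the filter text, which avoids quoting bugs when the value comes from user input.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Print the names of items whose status.phase equals the given phase.
# Reads JSON on stdin. --arg hands the shell value to jq as the string
# variable $phase, so it is never interpolated into the filter itself.
names_in_phase() {
    local phase="$1"
    jq -r --arg phase "$phase" \
        '.items[] | select(.status.phase == $phase) | .metadata.name'
}

# Invented sample data shaped like a small API response.
sample_json='{"items":[
  {"metadata":{"name":"web-1"},"status":{"phase":"Running"}},
  {"metadata":{"name":"job-7"},"status":{"phase":"Succeeded"}},
  {"metadata":{"name":"web-2"},"status":{"phase":"Running"}}
]}'

if command -v jq > /dev/null; then
    names_in_phase "Running" <<< "$sample_json"
else
    echo "jq is not installed; skipping the demonstration." >&2
fi
```

With `jq` installed, this prints `web-1` and `web-2`, one per line.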

```bash
#!/bin/bash
set -euo pipefail

# Example: Fetch Kubernetes pod information and extract names of running pods in the 'default' namespace

# Ensure kubectl and jq are installed
if ! command -v kubectl > /dev/null || ! command -v jq > /dev/null; then
    echo "Error: This script requires 'kubectl' and 'jq' to be installed." >&2
    exit 1
fi

echo "Fetching running pods in the 'default' namespace..."

# Use kubectl to get pods as JSON, then pipe to jq to process it.
# 1. '.items[]': Iterate over the items array in the JSON output.
# 2. 'select(.status.phase == "Running")': Filter for items where the status phase is "Running".
# 3. '.metadata.name': For each of those items, extract the pod's name.
# 4. The -r flag to jq outputs raw strings without quotes.
RUNNING_PODS=$(kubectl get pods -n default -o json | jq -r '.items[] | select(.status.phase == "Running") | .metadata.name')

if [[ -z "$RUNNING_PODS" ]]; then
    echo "No running pods found in the 'default' namespace."
else
    echo "Found running pods:"
    echo "$RUNNING_PODS"
fi
```

Best Practices for Secure and Performant Scripts

As scripts become more powerful and handle more critical tasks, adhering to best practices for security and performance is non-negotiable. A poorly written script can be a significant security vulnerability or a performance bottleneck.

Writing Secure Scripts

Improper shell scripting is a recurring source of security vulnerabilities. Key security practices include:

- Always Quote Variables: Unquoted variables (`$VAR`) are subject to word splitting and glob expansion, a common source of bugs and vulnerabilities. Always use double quotes (`"$VAR"`) unless you specifically need word splitting.

- Use `mktemp` for Temporary Files: Never create predictable temporary file names in `/tmp`. Use the `mktemp` utility to securely create temporary files and directories.

- Use Static Analysis with ShellCheck: ShellCheck is an indispensable linter for shell scripts. It can detect a huge range of common bugs, style issues, and security pitfalls before you even run your code. Integrating it into your editor or CI/CD pipeline is a modern best practice.
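The first two practices can be sketched together. The helper `count_args` and the sample file name are made up for the demonstration, which shows how many arguments a command receives when a variable is expanded with and without quotes. The expansion happens inside an empty directory created by `mktemp -d`, so the unquoted glob has nothing to match.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Helper that reports how many arguments it received.
count_args() { echo "$#"; }

# A file name containing spaces and a glob character (hypothetical).
filename="my report *.txt"

# mktemp -d creates a uniquely named directory instead of a guessable path.
workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT

# Unquoted: the value is split on whitespace and '*.txt' is glob-expanded.
unquoted=$(cd "$workdir" && count_args $filename)

# Quoted: the value reaches the command as a single argument.
quoted=$(cd "$workdir" && count_args "$filename")

echo "unquoted: $unquoted argument(s), quoted: $quoted argument(s)"
```

With Bash’s default globbing options this reports three arguments for the unquoted expansion and one for the quoted expansion. In a directory that did contain `.txt` files, the unquoted form would expand to even more arguments. ShellCheck flags the unquoted expansion as SC2086.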

Performance Considerations

While shell scripts are incredibly versatile, they are not always the most performant solution. A common performance anti-pattern is calling external commands inside a loop. For text processing, a single `awk` or `sed` command is typically far faster than a `while read` loop that calls other utilities on each line, because the loop pays the cost of starting a new process for every line.

```bash
# --- BAD: Slow performance by calling external commands in a loop ---
# This starts a new 'cut' process once for every line in the file.
while IFS= read -r line; do
    col2=$(cut -d',' -f2 <<< "$line")
    echo "The second column is: $col2"
done < data.csv

# --- GOOD: Fast and efficient using a single awk process ---
# awk processes the entire file in one go, which is much faster.
awk -F',' '{print "The second column is: " $2}' data.csv
```

Knowing when to switch from a shell script to a more powerful language like Python or Go is also important. For complex data structures, heavy computation, or long-running applications, a dedicated programming language is often the better choice. However, for orchestrating commands and automating system tasks, the shell remains the undisputed champion. The two approaches complement each other: Python and Go are often used to build CLIs that shell scripts then orchestrate.

Conclusion: The Enduring Power of the Shell

The landscape of Linux is in constant flux, with new developments in everything from filesystems such as Btrfs and ZFS to the Wayland display stack. Yet, amidst all this change, shell scripting has not only endured but has adapted and thrived. Its role has evolved from simple system administration to being a critical component of the modern, automated, cloud-native world.

The key takeaway is that modern scripting is about more than just commands; it’s about embracing robust practices. By leveraging modern shells like Zsh, implementing strict error handling, using tools like `jq` and ShellCheck, and understanding the script’s role in a larger containerized ecosystem, you can write automation that is powerful, reliable, and secure. The next time you open a terminal, remember that you are wielding one of the most powerful and enduring tools in the Linux universe. Continue to explore, learn, and build with it.