The Silent Saboteur: A Deep Dive into Debugging Elusive Linux NFS Kernel Bugs

Introduction: The Hidden Complexity of Networked Filesystems

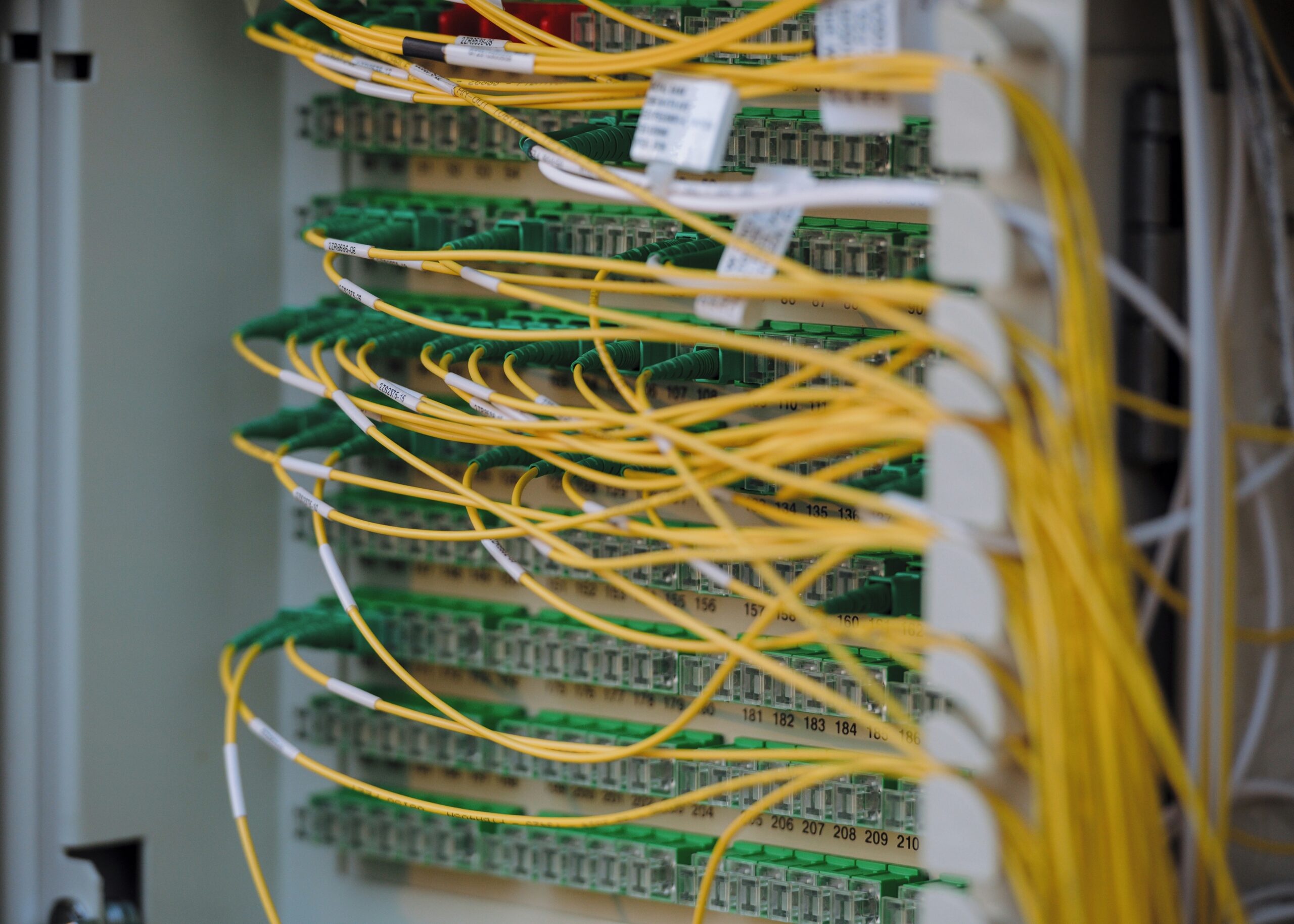

The Network File System (NFS) is a cornerstone of modern IT infrastructure, a distributed filesystem protocol that has served as the unsung hero in countless Linux environments for decades. From simple home labs running on a Raspberry Pi to massive enterprise clusters powering cloud services, NFS provides a robust and transparent way to share storage across a network. Its ubiquity across distributions like Ubuntu, Debian, Fedora, and enterprise giants like Red Hat Enterprise Linux (and its derivatives Rocky Linux and AlmaLinux) speaks to its stability and performance. However, beneath this veneer of simplicity lies a complex interplay of client-side caching, network RPC calls, and kernel-level logic. When things go wrong, they can go spectacularly wrong, leading to silent data corruption, inexplicable application hangs, and “stale file handle” errors that can bring production systems to a grinding halt. These elusive bugs, often lurking deep within the Linux kernel’s NFS implementation, are notoriously difficult to diagnose and can evade even the most seasoned system administrators. This article dives deep into the world of advanced NFS troubleshooting, providing a practical guide for hunting down these ghosts in the machine.

Understanding the Battlefield: Core NFS Concepts and Common Failure Points

Before we can debug a complex system, we must first understand its moving parts. The NFS protocol, particularly in its modern versions (NFSv4 and later), is a sophisticated stateful protocol that relies on several key mechanisms. Understanding them is critical to forming a hypothesis when troubleshooting, and essential for anyone managing critical infrastructure.

Key NFS Mechanisms

- File Handles: Instead of file paths, NFS identifies files and directories by file handles, opaque identifiers issued by the server. A “stale file handle” (ESTALE) error occurs when a client presents a handle that no longer refers to a valid object on the server. Typically the file was deleted (often by another client) or the exported filesystem changed while the client still held the handle in its cache.

- Attribute Caching: To reduce network traffic, NFS clients cache file attributes (such as permissions, size, and modification times) for a period controlled by the acregmin, acregmax, acdirmin, and acdirmax mount options (or actimeo, which sets all four). This is great for performance, but clients can act on outdated information if the cache isn’t invalidated when another client changes a file. Cache invalidation is a frequent source of reported NFS client bugs.

- Locking: In NFSv3 and earlier, file locking is handled by the separate Network Lock Manager (NLM) protocol, while NFSv4 builds locking into the protocol itself. Locks prevent data corruption when multiple clients access the same file, but race conditions and bugs in the locking code can lead to deadlocks or applications freezing on I/O operations.

- RPC (Remote Procedure Call): NFS is built on top of the RPC mechanism. Every file operation (read, write, lookup) is an RPC call. Network latency, dropped packets, or server overload can cause these calls to time out, leading to either a “soft” error or an indefinite “hard” hang, depending on your mount options.
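In some cases, applications can soften the impact of stale file handles by treating ESTALE as a signal to look the path up again rather than as a fatal error. The sketch below makes two assumptions. First, the wrapped operation is safe to repeat. Second, it resolves its path on every call, so a retry can obtain a fresh handle. `retry_on_estale` is a hypothetical helper, not part of any library.

```python
import errno
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_on_estale(op: Callable[[], T], attempts: int = 3, delay: float = 0.1) -> T:
    """Call op(), retrying if it fails with ESTALE ("Stale file handle").

    Hypothetical helper: op must look its file up by path on every call
    (e.g. open() or os.stat() on a path string) so that a retry can obtain
    a fresh file handle from the server.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return op()
        except OSError as exc:
            # Propagate other errors immediately, and ESTALE after the last attempt.
            if exc.errno != errno.ESTALE or attempt == attempts:
                raise
            time.sleep(delay * attempt)  # back off a little more on each retry
    raise AssertionError("unreachable")
```

For example, `retry_on_estale(lambda: os.stat(path).st_size)` re-stats the path on each attempt. Wrapping an operation on an already-open file descriptor would not help, because the descriptor keeps pointing at the stale handle.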

Provoking the Bug: Simulating High I/O Load

Many subtle NFS bugs only manifest under specific high-load conditions, such as rapid file creation, deletion, and attribute changes. A simple script that simulates this kind of workload can help you reproduce an issue in a controlled environment. This technique is invaluable for developers and SREs chasing intermittent failures.

```python
import os
import random
import string
import time
from multiprocessing import Pool

# Define the target NFS mount point
NFS_MOUNT_POINT = "/mnt/nfs_share/test_dir"


def generate_random_filename(length=12):
    """Generates a random string for filenames."""
    letters = string.ascii_lowercase
    return "".join(random.choice(letters) for _ in range(length))


def stress_worker(worker_id):
    """A single worker process to perform rapid file operations."""
    print(f"Starting worker {worker_id}")
    # exist_ok avoids a race when several workers create the directory at once
    os.makedirs(NFS_MOUNT_POINT, exist_ok=True)

    for i in range(500):  # Number of operations per worker
        filename = generate_random_filename()
        filepath = os.path.join(NFS_MOUNT_POINT, filename)
        try:
            # 1. Create and write to the file
            with open(filepath, "w") as f:
                f.write(f"stress test data from worker {worker_id}, iteration {i}")
            # 2. Read the file attributes
            os.stat(filepath)
            # 3. Rename the file
            new_filepath = f"{filepath}_renamed"
            os.rename(filepath, new_filepath)
            # 4. Delete the file
            os.remove(new_filepath)
        except Exception as e:
            # In a real scenario, log this error extensively (errno, path, timestamp)
            print(f"Worker {worker_id} encountered an error: {e}")
        # Small random delay to avoid perfect synchronization
        time.sleep(random.uniform(0.01, 0.05))

    print(f"Worker {worker_id} finished.")


if __name__ == "__main__":
    num_processes = 16  # Adjust based on your client's core count
    print(f"Starting NFS stress test with {num_processes} processes...")
    with Pool(processes=num_processes) as pool:
        pool.map(stress_worker, range(num_processes))
    print("NFS stress test complete.")
```

Running this script with multiple processes creates the kind of chaotic, high-concurrency environment where race conditions in the NFS client or server code are more likely to surface.
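The stress script only reports exceptions. It will not notice a read that silently returns the wrong bytes. The variant below writes a unique payload, reads it back, and compares checksums, which can surface silent corruption or stale cached data. This is a sketch: `verify_roundtrip` is a hypothetical name, and a read-back on the same client is often served from the local page cache. Comparing from a second client is therefore a stronger test.

```python
import hashlib
import os
import uuid


def verify_roundtrip(directory: str, iterations: int = 100) -> list[str]:
    """Write unique payloads, read each back, and report any content mismatch.

    Hypothetical helper. Matching files are deleted; mismatching files are
    kept so they can be inspected (e.g. from another client or on the server).
    """
    os.makedirs(directory, exist_ok=True)
    mismatches = []
    for i in range(iterations):
        # A UUID per iteration makes every payload unique, so stale data is detectable.
        payload = f"{uuid.uuid4()} iteration {i}\n".encode() * 64
        expected = hashlib.sha256(payload).hexdigest()
        path = os.path.join(directory, f"verify_{os.getpid()}_{i}")
        with open(path, "wb") as f:
            f.write(payload)
            f.flush()
            os.fsync(f.fileno())  # ask the client to push the data to the server now
        with open(path, "rb") as f:
            actual = hashlib.sha256(f.read()).hexdigest()
        if actual == expected:
            os.remove(path)
        else:
            mismatches.append(f"{path}: expected sha256 {expected}, got {actual}")
    return mismatches
```

For example, `print(verify_roundtrip("/mnt/nfs_share/test_dir"))` should print an empty list when every read-back matched. Keeping mismatched files on disk is deliberate, so that you can compare their contents from the server side.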

The Investigator’s Toolkit: Diagnosing NFS Problems in the Wild

When an NFS issue strikes, you need a systematic approach to diagnosis that collects data from the client, the server, and the network itself. This is a core skill in DevOps and site reliability engineering.

Client-Side Diagnostics

Your first stop should always be the client machine experiencing the issue. The nfs-utils package (packaged as nfs-common on Debian and Ubuntu), available in all major distributions from Arch Linux to SUSE Linux, provides the essential tools.

- dmesg and journalctl: Check the kernel ring buffer and systemd journal for NFS-related errors. Look for keywords like “NFS,” “stale,” “timeout,” or “RPC.”

```bash
# Check for recent NFS messages in the kernel log via the systemd journal.
# Messages such as "server ... not responding" are logged below err priority,
# so filter by keyword rather than with -p err.
journalctl -k | grep -iE "nfs|rpc"

# Follow the kernel log in real time (-w) with human-readable timestamps (-H)
dmesg -wH
```

- nfsstat and mountstats: These commands provide a wealth of information about NFS client activity.

`nfsstat -c` shows client-side RPC call statistics, while `mountstats` gives per-mount-point details, including the average RTT (round-trip time) of each RPC operation, which is invaluable for spotting network latency. A high retransmission (retrans) count in `nfsstat -c` output is a strong sign of network problems or an overloaded server.

Network-Level Analysis

Often, the problem lies not with the client or server software but with the network in between. Firewalls, switches, or even faulty cables can drop packets and cause NFS timeouts. Tools like tcpdump and Wireshark are indispensable for capturing and analyzing network traffic.

```bash
# Capture NFS traffic between a client (192.168.1.100) and server (192.168.1.50)
# -i eth0: Specify the network interface
# -s 0: Capture full packets
# -w nfs_capture.pcap: Write the capture to a file for analysis in Wireshark
# port 2049: The standard NFS port
sudo tcpdump -i eth0 -s 0 -w nfs_capture.pcap host 192.168.1.100 and host 192.168.1.50 and port 2049
```

In the packet capture, look for long delays between an RPC request from the client and the corresponding reply from the server. You can also see TCP retransmissions and other network-level errors that wouldn’t be visible from client or server logs alone. NFSv3 locking (NLM) and mount traffic use other ports, so widen the filter if you are chasing a locking problem on an NFSv3 mount. This level of detail is crucial for distinguishing a software bug from an infrastructure problem.
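Scrolling through thousands of packets is slow. A faster approach is to export the RPC fields and pair each call with its reply by transaction ID (XID). The sketch below assumes tab-separated input from a command such as `tshark -r nfs_capture.pcap -Y rpc -T fields -e frame.time_epoch -e rpc.xid -e rpc.msgtyp`, where a message type of 0 is a call and 1 is a reply. `rpc_latencies` is a hypothetical helper. It treats a repeated call with the same XID as a retransmission and assumes a single-client capture, because XIDs are only unique per client.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class RpcStats:
    latencies: dict[str, float]  # XID -> seconds from first call to first reply
    retransmitted: set[str]      # XIDs whose call was seen more than once
    unanswered: set[str]         # XIDs with a call but no reply in the capture


def rpc_latencies(lines: Iterable[str]) -> RpcStats:
    """Pair RPC calls and replies from 'time<TAB>xid<TAB>msgtyp' lines."""
    first_call: dict[str, float] = {}
    latencies: dict[str, float] = {}
    retransmitted: set[str] = set()
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) != 3 or not fields[0]:
            continue  # skip malformed or empty lines
        ts = float(fields[0])
        # One frame can carry several RPC messages; tshark joins them with commas.
        for xid, msgtyp in zip(fields[1].split(","), fields[2].split(",")):
            if msgtyp == "0":
                if xid in first_call:
                    retransmitted.add(xid)
                else:
                    first_call[xid] = ts
            elif msgtyp == "1" and xid in first_call and xid not in latencies:
                latencies[xid] = ts - first_call[xid]
    unanswered = set(first_call) - set(latencies)
    return RpcStats(latencies, retransmitted, unanswered)
```

Measuring from the first call means a retransmitted request shows its full user-visible delay. Sorting `latencies` by value then surfaces the worst stalls, which you can look up by XID in Wireshark.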

Advanced Triage: Peering into the Kernel

When system logs and network captures don’t reveal the root cause, the problem may lie deep within the Linux kernel itself. At that point you need advanced tools that trace kernel function calls and expose the NFS client’s internal state. This is the domain of kernel developers and performance engineers.

Using ftrace for Live Kernel Tracing

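ftrace is a powerful tracer built into the kernel. It can monitor what’s happening inside the kernel without recompiling it or loading external modules, and most distribution kernels enable it. The trace-cmd utility provides a user-friendly interface to it. For example, if you suspect an issue with directory entry caching (a common source of stale file handles), you can trace the NFS client’s dentry revalidation function, which checks whether a cached directory entry is still valid. On current kernels it is named nfs4_lookup_revalidate for NFSv4 mounts and nfs_lookup_revalidate for NFSv2/v3 mounts. Internal function names change between kernel versions, so confirm that they appear in available_filter_functions before tracing.

```bash
# Install trace-cmd (e.g., sudo apt install trace-cmd on Debian/Ubuntu)

# Confirm the functions exist on this kernel
# (older systems expose tracefs under /sys/kernel/debug/tracing instead)
sudo grep -wE 'nfs4?_lookup(_revalidate)?' /sys/kernel/tracing/available_filter_functions

# Record a function_graph trace rooted at the revalidation and lookup paths.
# trace-cmd record runs the trailing command and stops when it exits,
# saving the data to trace.dat (use nfs_lookup_revalidate on NFSv2/v3 mounts).
sudo trace-cmd record -p function_graph -g nfs4_lookup_revalidate -g nfs_lookup \
    ls -l /mnt/nfs_share/problematic_dir

# Alternatively, omit the command, trigger the problematic operation from
# another terminal, and press Ctrl-C to stop recording.

# View the report
trace-cmd report
```

The report shows a call graph of the traced functions, including their execution times. This can help you spot functions that take an unusually long time to complete or are called in an unexpected sequence, providing vital clues to the bug’s location.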
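On a busy system the report can run to many thousands of lines. A small filter that keeps only calls slower than a threshold makes outliers easier to spot. This sketch assumes that the function_graph duration column looks like `12.345 us |`, possibly preceded by a marker such as `+` or `!`. Check that assumption against your own trace-cmd output before relying on the filter. `slow_calls` is a hypothetical helper.

```python
import re
from typing import Iterable, Iterator

# Matches the duration column of function_graph output, e.g. "! 4098.731 us |".
DURATION = re.compile(r"(\d+(?:\.\d+)?)\s+us\s+\|")


def slow_calls(lines: Iterable[str], threshold_us: float = 1000.0) -> Iterator[tuple[float, str]]:
    """Yield (duration_us, line) for report lines whose duration exceeds threshold_us.

    Leaf calls carry their duration on the entry line; calls with children
    carry it on the closing-brace line, so both kinds are checked.
    """
    for line in lines:
        m = DURATION.search(line)
        if m and float(m.group(1)) > threshold_us:
            yield float(m.group(1)), line.rstrip()
```

For example, save the output with `trace-cmd report > report.txt`. Then `sorted(slow_calls(open("report.txt")), reverse=True)[:20]` lists the twenty slowest calls. The task name, CPU, and timestamp on each line tell you where to look in the full report.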

Kernel Version Bisection

If you suspect a regression (a bug introduced in a newer kernel version), the most definitive way to find it is to run `git bisect` on the Linux kernel source tree. This involves building and testing the kernel at a series of intermediate commits to narrow down the exact commit that introduced the problem. While time-consuming, this process is the gold standard for bug reports. A report to the Linux kernel mailing list (LKML) or the NFS-specific linux-nfs@vger.kernel.org list that names the offending commit is far easier for maintainers to act on.
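Bisection is practical, despite the cost of building and booting each kernel, because it is a binary search. Each good/bad verdict halves the remaining range, so isolating one commit among N candidates takes at most about log2(N) test boots. The sketch below simulates that search. `is_bad` is a hypothetical stand-in predicate: in a real bisection, each call means building, booting, and running your reproducer, such as the stress script earlier in this article.

```python
from typing import Callable, Sequence


def first_bad(commits: Sequence[str], is_bad: Callable[[str], bool]) -> tuple[str, int]:
    """Return the first bad commit and the number of tests performed.

    Assumes commits are the candidates after the last known-good kernel,
    ordered oldest to newest, that the last one is known bad, and that every
    commit after the first bad one is also bad (the same assumptions
    `git bisect` relies on).
    """
    if not commits:
        raise ValueError("no commits to bisect")
    lo, hi = 0, len(commits) - 1  # commits[hi] is known bad
    tests = 0
    while lo < hi:
        mid = (lo + hi) // 2
        tests += 1
        if is_bad(commits[mid]):
            hi = mid      # the first bad commit is at mid or earlier
        else:
            lo = mid + 1  # the first bad commit is after mid
    return commits[lo], tests
```

When the test can be scripted, for kernels usually inside a VM, `git bisect run` automates the same loop. It treats script exit code 0 as good, 125 as "skip this commit", and other codes from 1 to 127 as bad.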

Fortifying Your Defenses: Best Practices and Mitigation

While you can’t prevent every kernel bug, you can configure your systems to be more resilient and easier to debug. Adhering to best practices is key to maintaining a stable environment, whether you’re using Pop!_OS on the desktop or AlmaLinux on a server.

Tuning Mount Options

The options you use in /etc/fstab have a significant impact on NFS performance and reliability.

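- hard vs. soft: Almost always use `hard`. A `soft` mount gives up once its retries are exhausted and returns an error to the application, which can lead to silent data corruption if the application isn’t prepared to handle it. A `hard` mount retries indefinitely, preserving data integrity at the cost of potentially hanging the application until the server becomes available again.

- sync vs. async: On the client, the default is `async`, which lets the kernel cache application writes and send them to the server later, improving performance. With `sync`, every write is flushed to the server before the system call returns, so use it only if you have extreme data integrity requirements and can tolerate the performance hit. Don’t confuse this with the server-side export option of the same name in /etc/exports: an `async` export lets the server acknowledge writes before they are committed to stable storage, and the export default is `sync`.

- rsize and wsize: These control the maximum size of each read and write request. If you don’t set them, the client and server negotiate the largest size they both support. Modern networks can typically handle large values (e.g., 1048576, the Linux client’s maximum), which can improve throughput. However, some older network gear or buggy drivers might perform better with smaller values, so tuning these can be part of performance optimization.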
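The kernel fills in defaults, so the options actually in effect can differ from what /etc/fstab says. You can check them in /proc/self/mounts. The sketch below flags `soft` and `sync` mounts and small rsize/wsize values among NFS mounts. The 65536-byte threshold is an arbitrary example, not a recommendation from the NFS documentation, and `audit_nfs_mounts` is a hypothetical helper.

```python
def audit_nfs_mounts(mounts_text: str, min_io_size: int = 65536) -> list[str]:
    """Return warnings for NFS entries in text formatted like /proc/self/mounts."""
    warnings = []
    for line in mounts_text.splitlines():
        fields = line.split()
        # Fields: device, mount point, fs type, options, dump, pass
        if len(fields) < 4 or fields[2] not in ("nfs", "nfs4"):
            continue
        mount_point = fields[1]
        # Turn "key=value" options into dict entries; bare flags map to None.
        opts = dict(o.split("=", 1) if "=" in o else (o, None) for o in fields[3].split(","))
        if "soft" in opts:
            warnings.append(f"{mount_point}: soft mount, timeouts surface as I/O errors")
        if "sync" in opts:
            warnings.append(f"{mount_point}: sync mount, every write waits for the server")
        for key in ("rsize", "wsize"):
            if key in opts and int(opts[key]) < min_io_size:
                warnings.append(f"{mount_point}: {key}={opts[key]} is below {min_io_size}")
    return warnings
```

For example, `audit_nfs_mounts(open("/proc/self/mounts").read())` returns an empty list when every NFS mount is hard, async, and uses large transfer sizes.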

Proactive Monitoring

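Don’t wait for users to report a problem. Use a monitoring stack such as Prometheus and Grafana to track key NFS metrics. The Prometheus Node Exporter exposes client-side NFS statistics. Its nfs collector reports RPC and retransmission counts. Its mountstats collector (disabled by default; enable it with --collector.mountstats) reports per-mount, per-operation counters from /proc/self/mountstats. These are cumulative counters rather than histograms, so they yield averages rather than percentiles.

```promql
# Average NFS RPC round-trip time per operation over the last 5 minutes, in seconds.
# Assumes the Node Exporter mountstats collector is enabled.
# A sustained rise can reveal network degradation or server overload before it becomes critical.
sum by (instance, export, operation) (rate(node_mountstats_nfs_operations_response_time_seconds_total[5m]))
/
sum by (instance, export, operation) (rate(node_mountstats_nfs_operations_requests_total[5m]))
```

By alerting on abnormal increases in latency, RPC retransmissions, or server-side errors, you can investigate issues before they impact your applications. This proactive approach is a cornerstone of effective SRE and DevOps practice.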
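NFS client counters, such as the per-operation totals in /proc/self/mountstats, are cumulative whichever tool collects them. Alerting logic therefore compares two samples. The average RTT over an interval is the change in total RTT divided by the change in operation count. The retransmission ratio is the number of extra transmissions per operation. The sketch below shows that arithmetic with hypothetical names. The 50 ms and 1% thresholds are arbitrary placeholders; replace them with values from your own baseline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    ops: int            # cumulative operations completed
    transmissions: int  # cumulative RPC transmissions (ops + retransmissions)
    rtt_ms: int         # cumulative round-trip time in milliseconds


def interval_health(prev: Sample, curr: Sample) -> tuple[float, float]:
    """Return (average RTT in ms, retransmission ratio) between two samples."""
    d_ops = curr.ops - prev.ops
    if d_ops <= 0:
        return 0.0, 0.0  # no traffic, or counters reset (e.g. after a remount)
    avg_rtt = (curr.rtt_ms - prev.rtt_ms) / d_ops
    retrans_ratio = (curr.transmissions - prev.transmissions - d_ops) / d_ops
    return avg_rtt, retrans_ratio


def should_alert(prev: Sample, curr: Sample,
                 max_rtt_ms: float = 50.0, max_retrans: float = 0.01) -> bool:
    """Hypothetical alert rule: fire on high average RTT or a high retransmission ratio."""
    avg_rtt, retrans = interval_health(prev, curr)
    return avg_rtt > max_rtt_ms or retrans > max_retrans
```

Working on deltas rather than lifetime averages matters here. A mount that has been healthy for weeks can hide a fresh latency spike inside its cumulative average.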

Conclusion: A Continuous Journey

The world of Linux NFS is a testament to the power and complexity of distributed systems. While incredibly stable for the vast majority of use cases, its position deep within the kernel means that when bugs do appear, they are often subtle, difficult to reproduce, and can have a massive blast radius. Hunting these bugs requires a multi-layered approach, combining application-level stress testing, client- and server-side log analysis, deep network inspection, and even direct kernel tracing.

The key takeaways for any administrator or engineer are clear: understand the fundamentals of the protocol, master your diagnostic toolkit, implement proactive monitoring, and never underestimate the importance of meticulous configuration. By embracing these principles, you can transform a potential crisis into a manageable investigation, ensuring your infrastructure remains robust, reliable, and performant. As the kernel’s NFS code continues to evolve, new challenges will arise, but so will new tools and techniques to conquer them.