Beyond Automation: Why the Lost Art of Linux Administration is More Critical Than Ever

In the modern IT landscape, a seismic shift has occurred. The conversation, once dominated by meticulous server builds and manual configuration, now centers on Infrastructure as Code (IaC), container orchestration, and serverless paradigms. Tools like Kubernetes, Ansible, and Terraform, combined with cloud platforms like AWS, Azure, and Google Cloud, have created powerful layers of abstraction. This has led many to believe that the traditional craft of Linux server administration, the art of understanding a system from the kernel up, is becoming obsolete. Current DevOps coverage seems to confirm this, focusing on high-level orchestration rather than low-level tuning.

However, this perspective is a dangerous oversimplification. Abstractions are powerful, but they are also leaky. When a Kubernetes pod is stuck in a `CrashLoopBackOff`, when an Ansible playbook fails with a cryptic error, or when network performance mysteriously degrades, the solution is rarely found in the abstraction layer itself. The answer lies beneath, in the fundamental workings of the Linux operating system. Reclaiming this “lost art” is not an act of nostalgia; it is a critical necessity for building robust, secure, and truly resilient systems. True mastery in the age of automation is not about replacing foundational knowledge but augmenting it.

The Unshakeable Foundation: Mastering the Command Line

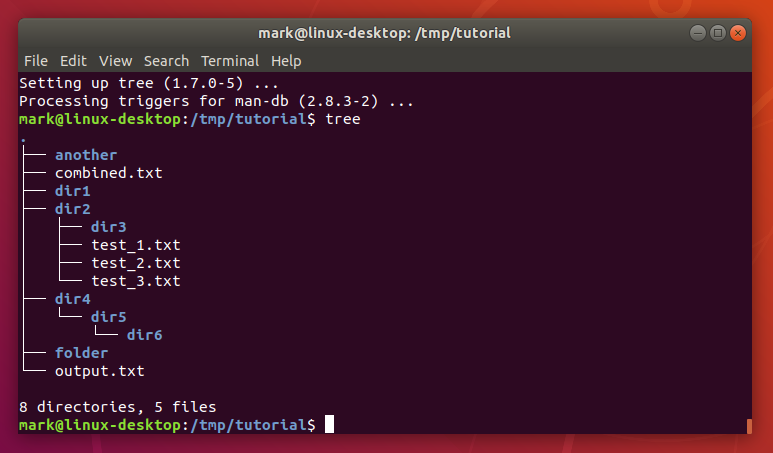

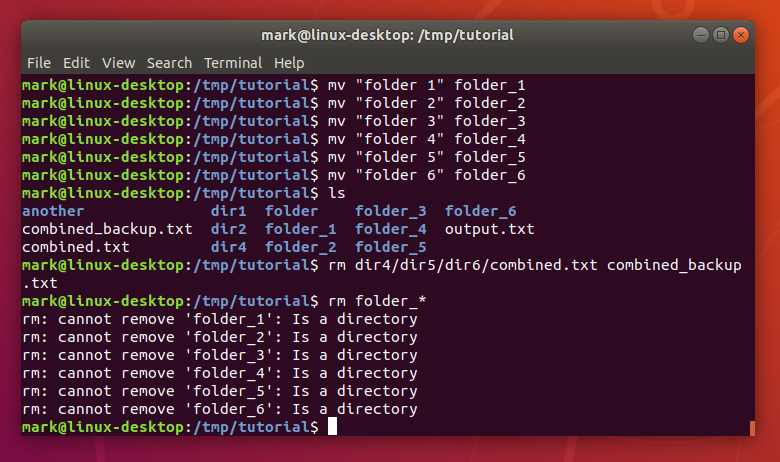

At the heart of all Linux administration lies the command-line interface (CLI). While modern graphical tools and web UIs offer convenience, they can never replace the power, precision, and composability of the shell. Whether you’re using bash, zsh, or fish, the terminal is the ultimate ground truth for your system. The latest Linux terminal news often highlights new tools and shell enhancements, but the core philosophy remains unchanged: small, single-purpose utilities that can be chained together to perform incredibly complex tasks.

This is the “Unix philosophy” in action. Mastering tools like grep, sed, awk, find, and xargs is not about memorizing arcane flags. It’s about learning to think in pipelines—to see a problem as a flow of data that can be filtered, transformed, and acted upon step-by-step. This skill is language-agnostic and timeless. It’s the difference between aimlessly clicking through log files in a GUI and constructing a one-liner that pinpoints the exact source of an error in seconds.
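To make the pipeline idea concrete, here is a minimal sketch that chains find, xargs, and grep to list the log files containing an error marker. The function name `logs_with_errors`, the `*.log` naming, and the `ERROR` marker are assumptions chosen for illustration, not conventions of any particular system.

```bash
#!/bin/bash
# Hypothetical helper: list every *.log file under a directory that
# contains the given marker (default: ERROR), one path per line, sorted.
logs_with_errors() {
  local dir="$1"
  local marker="${2:-ERROR}"
  # find emits NUL-terminated paths so names with spaces survive;
  # xargs -0 hands them to grep in batches, and -r (GNU) skips running
  # grep when nothing was found; grep -l prints only matching file names.
  find "$dir" -type f -name '*.log' -print0 \
    | xargs -0 -r grep -l -- "$marker" \
    | sort
}
```

Each stage does one job: find selects, grep filters, sort orders. Swapping a stage, for example replacing `grep -l` with `grep -c`, changes the question being asked without touching the rest.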

Practical Example: Real-Time Log Analysis

Imagine you need to monitor your SSH logs for failed root login attempts and identify the offending IP addresses in real-time. A skilled administrator can craft a command pipeline to do just that, providing immediate insight into a potential brute-force attack. This is a common task discussed in Linux security news and is a fundamental skill for any sysadmin.

```bash
#!/bin/bash
# Monitor failed SSH password attempts for 'root' in real time.
# journalctl works on systemd-based systems such as recent Ubuntu, Debian, and Fedora.
#   -f       follow the journal as new entries arrive
#   -n 10    start with the last 10 entries
#   -u sshd  select the SSH service unit (named 'ssh' on Debian and Ubuntu)
# sort and uniq only produce output at end of input, so they never print on a
# followed stream; awk keeps a running count per IP instead.
journalctl -f -n 10 -u sshd \
  | grep --line-buffered 'Failed password for root' \
  | awk '{ ip = $(NF-3); count[ip]++; print count[ip], ip; fflush() }'
```

This pipeline follows the system journal for the SSH daemon, filters for the specific error message, uses awk to extract the IP address (the fourth field from the end), and prints a running count of attempts per IP as they arrive. For a one-off summary of the most frequent offenders, drop `-f` and pipe the extracted addresses through `sort | uniq -c | sort -nr` instead. This is the essence of the craft: turning a complex requirement into a simple, elegant solution.
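For a one-off report rather than a live view, the same extraction can run over a finished stream, where sorting and counting behave as expected. The sketch below assumes log lines in the usual OpenSSH shape, `Failed password for root from <ip> port <port> ssh2`; the function name `count_failed_root_ips` is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: read sshd log lines on stdin and print
# "<count> <ip>" for failed root logins, most frequent first.
count_failed_root_ips() {
  grep 'Failed password for root' \
    | awk '{ print $(NF-3) }' \
    | sort \
    | uniq -c \
    | sort -k1,1nr -k2,2 \
    | awk '{ print $1, $2 }'   # normalize the padding that uniq -c adds
}

# Usage against the journal (the unit is 'ssh' on Debian and Ubuntu):
#   journalctl -u sshd -b --no-pager | count_failed_root_ips
```

The first `sort` matters: `uniq -c` only merges adjacent duplicates, so unsorted input would report the same address several times.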

Peering Under the Hood: System Internals and Troubleshooting

A server is not a black box. To effectively manage it, you must understand its core components and processes. This begins with the Linux boot process, from the moment GRUB or systemd-boot loads the kernel to the point where systemd takes over and starts user-space services. Understanding this sequence is crucial for diagnosing boot-time failures, a topic that comes up often on distributions like Arch Linux and Gentoo, where users have more control over the boot and init setup.

In the modern era, systemd has become the de facto init system and service manager. While controversial, its toolset is undeniably powerful, and new releases keep adding features that streamline service management. Writing your own service units, setting up systemd timers as a modern replacement for cron, and, most importantly, navigating the system’s logs with journalctl are non-negotiable skills. When a service fails to start, `journalctl -u <service-name> -b` is your first and best tool for discovery.
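As a rough illustration of a timer standing in for a cron entry, the sketch below writes a oneshot service and a matching timer unit. The unit names `backup.service` and `backup.timer`, the script path `/usr/local/bin/backup.sh`, and the helper `write_backup_timer` are all hypothetical; the directives themselves are standard systemd ones.

```bash
#!/bin/bash
# Hypothetical helper: write a oneshot service and a matching timer
# into the given directory (for real use: /etc/systemd/system).
write_backup_timer() {
  local dir="$1"
  mkdir -p "$dir"

  cat > "$dir/backup.service" <<'EOF'
[Unit]
Description=Nightly backup job (example)

[Service]
Type=oneshot
ExecStart=/usr/local/bin/backup.sh
EOF

  cat > "$dir/backup.timer" <<'EOF'
[Unit]
Description=Run backup.service daily (example)

[Timer]
OnCalendar=daily
Persistent=true

[Install]
WantedBy=timers.target
EOF
}

# After installing the files for real:
#   systemctl daemon-reload
#   systemctl enable --now backup.timer
#   systemctl list-timers backup.timer
```

`Persistent=true` asks systemd to run a missed job after the machine comes back up, something a plain cron entry does not do. Output from the job also lands in the journal, so `journalctl -u backup.service` shows every run.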

Beyond services, a deep understanding of Linux performance monitoring is key. Tools like top and htop provide a snapshot, but true analysis requires knowing where to look next. Is the CPU stalled on I/O wait? Use iostat. Is the system swapping heavily? Use vmstat and free to investigate memory pressure. Is a specific process running out of file descriptors? Compare its limit in /proc/<pid>/limits with the open descriptors listed in /proc/<pid>/fd. This level of forensic troubleshooting is impossible without a solid mental model of how the Linux kernel and its subsystems operate.
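The file descriptor check can be sketched in a few lines of shell. This assumes a Linux system with /proc mounted; the function name `fd_usage` is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: print "<open_fds> <soft_limit>" for a PID.
# Inspecting another user's process usually requires root.
fd_usage() {
  local pid="$1" open limit
  # Each entry in /proc/<pid>/fd is one open file descriptor.
  open=$(ls "/proc/$pid/fd" | wc -l)
  # The "Max open files" row holds the soft limit in its fourth column.
  limit=$(awk '/^Max open files/ { print $4 }' "/proc/$pid/limits")
  echo "$open $limit"
}

fd_usage "$$"   # inspect the current shell
```

When the first number approaches the second, the process is close to failing with "Too many open files", and the next step is to see what those descriptors point at with `ls -l /proc/<pid>/fd`.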

Practical Example: Diagnosing a Failing Service with journalctl

Let’s say your Nginx web server is failing to start after a configuration change. Instead of guessing, you can use journalctl to get a precise error message. This is a daily reality for anyone managing web servers.

```bash
# First, check the status to see the high-level error.
systemctl status nginx.service

# If the error is generic, dive into the logs for the specific service unit.
# The '-e' flag jumps to the end of the log.
# The '-b' flag shows logs only from the current boot.
journalctl -u nginx.service -b -e

# Example output might show:
# nginx[1234]: nginx: [emerg] "server_name" directive is not allowed here in /etc/nginx/sites-enabled/default:25
# This tells you the exact file and line number of the configuration error.
```

The Modern Alchemist: Blending Traditional Skills with New Tools

The true power of a modern administrator lies not in choosing between old and new but in blending them. The rise of Linux containers, driven by tools like Docker and Podman, is a perfect example. A container is not magic; it is a combination of Linux kernel features: namespaces (for isolation) and cgroups (for resource limiting). An admin who understands these concepts can debug container networking issues far more effectively because they see the underlying veth pairs and network bridges, not just an abstract Docker network. They understand how filesystem layers work, making them better at optimizing image sizes.
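The kernel exposes these building blocks directly under /proc, so they can be inspected without any container tooling. A minimal sketch, assuming a Linux system with /proc mounted; the function name `show_namespaces` is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: list the namespaces a process belongs to as
# "<type> <identifier>" lines, where the identifier looks like net:[<inode>].
show_namespaces() {
  local pid="${1:-self}" link
  for link in /proc/"$pid"/ns/*; do
    echo "$(basename "$link") $(readlink "$link")"
  done
}

show_namespaces self

# cgroup membership, the resource-limiting half, is listed in
# /proc/<pid>/cgroup for the same process.
```

Two processes share a namespace when their identifiers match. Comparing the `net` line for a containerized process against the one for PID 1 on the host is a quick way to confirm whether the container has its own network stack.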

Similarly, configuration management tools like Ansible, Puppet, and SaltStack are immensely powerful, but they are only as good as the instructions they are given. An admin with deep knowledge of Linux security best practices can write an Ansible playbook that doesn’t just install a package but also configures SELinux or AppArmor policies, sets up a secure firewall with nftables, and hardens the SSH daemon configuration according to industry standards. The tool automates the “how,” but the administrator’s knowledge defines the “what” and “why.” This synergy is central to modern DevOps practice.

Practical Example: Hardening SSH with Ansible

Here is a simple Ansible playbook that applies several SSH security best practices. An administrator knows *why* each of these settings is important, from disabling root login to turning off password authentication in favor of keys. This knowledge transforms a simple automation script into a robust security control.

```yaml
---
- name: Harden SSH Server Configuration
  hosts: all
  become: yes
  tasks:
    - name: Update sshd_config with secure settings
      lineinfile:
        path: /etc/ssh/sshd_config
        regexp: '^{{ item.key }}'
        line: '{{ item.key }} {{ item.value }}'
        state: present
        # Refuse to install a file that sshd cannot parse.
        validate: '/usr/sbin/sshd -t -f %s'
      loop:
        - { key: 'PermitRootLogin', value: 'no' }
        - { key: 'PasswordAuthentication', value: 'no' }
        - { key: 'PubkeyAuthentication', value: 'yes' }
        - { key: 'ChallengeResponseAuthentication', value: 'no' }
        - { key: 'UsePAM', value: 'yes' }
        - { key: 'X11Forwarding', value: 'no' }
        - { key: 'PrintMotd', value: 'no' }
        - { key: 'AcceptEnv', value: 'LANG LC_*' }
        # Debian/Ubuntu path; RHEL and Fedora use /usr/libexec/openssh/sftp-server.
        - { key: 'Subsystem', value: 'sftp /usr/lib/openssh/sftp-server' }
        # Only meaningful on old OpenSSH releases that still supported protocol 1.
        - { key: 'Protocol', value: '2' }
      notify: Restart SSH
  handlers:
    - name: Restart SSH
      service:
        # The unit is named 'ssh' on Debian and Ubuntu.
        name: sshd
        state: restarted
```

Cultivating the Craft: Security, Resilience, and Continuous Learning

The art of administration is a continuous practice. It involves building systems that are not just functional but also secure and resilient. This means staying current with security advisories and understanding how to implement robust defenses. This goes beyond a simple firewall; it involves configuring Mandatory Access Control (MAC) systems like SELinux (prevalent in the Red Hat and Fedora ecosystems) or AppArmor (common on Ubuntu and Debian). These systems provide a deeper layer of defense by confining processes to only the resources they absolutely need.
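A quick way to see which MAC framework a host is actually running is to ask the kernel directly. The sketch below reads the standard SELinux and AppArmor status files; the function name `mac_status` and its output strings are hypothetical, and it reports only the first framework it finds.

```bash
#!/bin/bash
# Hypothetical helper: report which MAC framework the running kernel exposes.
mac_status() {
  if [ -r /sys/fs/selinux/enforce ]; then
    # 1 means enforcing, 0 means permissive.
    if [ "$(cat /sys/fs/selinux/enforce)" = "1" ]; then
      echo "selinux enforcing"
    else
      echo "selinux permissive"
    fi
  elif [ "$(cat /sys/module/apparmor/parameters/enabled 2>/dev/null)" = "Y" ]; then
    echo "apparmor enabled"
  else
    echo "none detected"
  fi
}

mac_status
```

Where the userland tools are installed, `sestatus` or `aa-status` give far more detail, including which profiles or policies are loaded. A permissive SELinux host logs denials without blocking them, which is easy to mistake for protection.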

Resilience is built from the filesystem up. A seasoned admin understands the trade-offs between ext4, Btrfs, and ZFS. They know how to leverage LVM (Logical Volume Management) to create flexible storage that can be resized on the fly and how to implement robust backup strategies using tools like rsync, BorgBackup, or Restic. They understand how to set up secure networking with modern VPNs like WireGuard.
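As one possible shape for an rsync-based strategy, the sketch below takes timestamped snapshots and hard-links unchanged files against the previous one, so each snapshot looks complete but only changed files consume new space. It assumes rsync is installed and that source and destination are local directories; the function name `snapshot_backup` and the `latest` symlink convention are hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: snapshot <src> into <root>/<timestamp> and print
# the snapshot path. Unchanged files are hard-linked to the previous run.
snapshot_backup() {
  local src="$1" root="$2" stamp latest
  stamp=$(date +%Y-%m-%dT%H%M%S)
  latest="$root/latest"
  mkdir -p "$root"
  if [ -d "$latest" ]; then
    # --link-dest needs an absolute path to the previous snapshot.
    rsync -a --link-dest="$(readlink -f "$latest")" "$src/" "$root/$stamp/"
  else
    rsync -a "$src/" "$root/$stamp/"
  fi
  # Repoint 'latest' at the snapshot just taken.
  ln -sfn "$stamp" "$latest"
  printf '%s\n' "$root/$stamp"
}

# Usage (paths are examples only):
#   snapshot_backup /etc /srv/backups/etc
```

This is a local sketch rather than a full strategy: a real setup also needs an off-host copy, a retention policy, and regular restore tests, which is where tools like BorgBackup and Restic add deduplication and encryption.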

Practical Example: Basic System Security Audit Script

An administrator should be able to quickly audit a system for common security misconfigurations. This simple script checks for a few key indicators, such as world-writable files and listening network ports, which could represent potential vulnerabilities.

```bash
#!/bin/bash
# A simple script to perform a basic security audit. Run as root for full coverage.

echo "### Checking for world-writable files in /etc and /var ###"
find /etc /var -type f -perm -0002 -ls 2>/dev/null
echo ""

echo "### Checking for files without a valid owner or group ###"
# The parentheses make -print apply to both tests; without them it binds only to -nogroup.
find / \( -nouser -o -nogroup \) -print 2>/dev/null
echo ""

echo "### Listing all listening TCP/UDP ports ###"
# Use 'ss' as it has replaced the older 'netstat' tool.
ss -tuln
echo ""

echo "### Checking for SUID/SGID files (potential privilege escalation) ###"
find / -type f \( -perm -4000 -o -perm -2000 \) -exec ls -ldb {} + 2>/dev/null
echo ""

echo "### Audit Complete ###"
```

Conclusion: The Foundation for the Future

The landscape of Linux administration is undeniably evolving. The rise of automation, containers, and cloud computing is not a threat to the skilled administrator but an opportunity. These new technologies have not rendered fundamental knowledge obsolete; they have made it more valuable than ever. The “lost art” of Linux administration is the bedrock upon which all modern infrastructure stands.

When the abstractions fail, the person who solves the problem is the administrator who understands the Linux kernel, can read dmesg output, can dissect a network packet, and can write a complex shell pipeline. The future of IT does not belong to those who can only operate the high-level tools, but to those who also understand the deep, intricate machinery beneath. The call to action is clear: embrace the new tools, but never stop honing the timeless craft of understanding the system from the ground up. That is the true path to becoming an indispensable engineer in any technology stack.