The Future of Linux Kernel Development: A Deep Dive into Rust-Based Kernel Modules

The Dawn of a Safer Era for the Linux Kernel

For decades, the C programming language has been the undisputed king of systems programming and the bedrock of the Linux kernel. Its low-level control and performance are legendary, but so are its pitfalls, particularly concerning memory safety. A single null pointer dereference or buffer overflow in kernel space can crash the entire system or, worse, open a critical security vulnerability. Rust is now being brought in to meet this long-standing challenge. Initial Rust support was merged in Linux 6.1, making Rust the second officially supported language for kernel development after C and marking one of the most significant shifts in the history of the project. This is not a niche experiment; it is a long-term effort to build a more secure and robust kernel.

This change will eventually reach every major distribution, from enterprise offerings like Red Hat Enterprise Linux and SUSE Linux Enterprise to community favorites like Debian, Ubuntu, and Fedora. The promise of Rust is that safe code is free of entire classes of memory-related bugs, enforced largely at compile time by its strict ownership and borrowing rules. In this article, we take a technical dive into Rust-based Linux kernel modules. We’ll explore the core concepts, walk through practical examples of building modules, discuss interoperability with existing C code, and outline the best practices that are emerging in this new area of kernel development.

Core Concepts: Why Rust and How to Get Started

The decision to introduce a new language into the Linux kernel was not taken lightly. It was driven by the tangible benefits Rust offers in a domain where stability and security are paramount. Understanding these foundational concepts is key to appreciating why this change is so significant.

The Rust Advantage: Compile-Time Safety

The primary motivation for using Rust in the kernel is memory safety without sacrificing performance. In C, developers are manually responsible for memory management, a complex and error-prone task. Common bugs include:

- Dangling Pointers: Accessing memory that has already been freed.

- Buffer Overflows: Writing past the allocated boundary of a buffer, potentially overwriting adjacent memory.

- Null Pointer Dereferencing: Attempting to access memory via a null pointer, typically triggering a kernel oops or panic.

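Safe Rust rules these bugs out by construction. The compiler’s “borrow checker” enforces three sets of rules:

- Ownership: each value has a single owner, which frees it.
- Borrowing: references can temporarily access a value but can never outlive it.
- Lifetimes: the scopes in which references are valid.

Together these eliminate dangling pointers and data races. References are never null. A value that may be absent is written as an Option, and it must be checked before use. Buffer overflows are stopped by bounds checks on slice and array indexing, which halt execution instead of silently touching adjacent memory. Because most of this analysis happens at compile time, a whole category of critical vulnerabilities can be prevented before the code ever runs, a massive leap forward for kernel security. These guarantees apply to safe Rust; code inside unsafe blocks, covered later, must uphold them by hand.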
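The user-space sketch below shows how safe Rust handles each of the three bug classes listed above. It is ordinary Rust rather than kernel code, and the function names are hypothetical.

```rust
// Null pointers: a possibly-absent value is an `Option`, and the compiler
// will not let the caller use it without handling the `None` case.
fn device_name(name: Option<&str>) -> &str {
    match name {
        Some(n) => n,
        None => "unknown",
    }
}

// Buffer overflows: `get` returns `None` for an out-of-range index instead
// of reading adjacent memory (indexing with `buf[i]` would panic instead).
fn byte_at(buf: &[u8], index: usize) -> Option<u8> {
    buf.get(index).copied()
}

// Dangling pointers: `consume` takes ownership of the buffer and frees it
// when it returns. Using the original binding afterwards does not compile:
//
//     let v = vec![1u8, 2, 3];
//     consume(v);
//     v.len(); // error[E0382]: borrow of moved value: `v`
fn consume(buf: Vec<u8>) -> usize {
    buf.len()
}
```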

Setting Up Your Development Environment

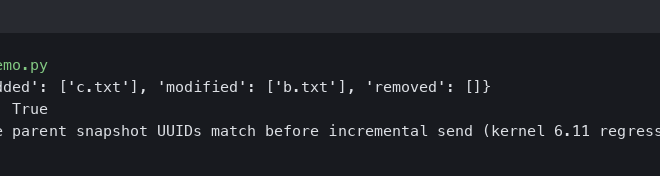

To begin writing Rust kernel modules, your system needs to be properly configured. This is still an evolving area, so use a recent kernel; initial Rust support landed in Linux 6.1. First, ensure your kernel is built with Rust support enabled. You can check this in your kernel’s .config file:

```shell
# In your kernel source directory
grep '^CONFIG_RUST=' .config
```

You should see CONFIG_RUST=y. If not, enable it via make menuconfig (under “General setup” -> “Rust support”) and rebuild your kernel.

The option is only offered when a suitable toolchain is detected. You’ll need the Rust toolchain (including the standard library sources), clang/libclang, and bindgen. Running make LLVM=1 rustavailable in the kernel tree reports whether your toolchain is usable.

With the environment ready, let’s create a minimal “Hello, World!” kernel module. This simple example demonstrates the basic structure and necessary components.

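```rust
use kernel::prelude::*;

module! {
    type: HelloWorld,
    name: "hello_rust",
    author: "Your Name",
    description: "A simple Rust kernel module",
    license: "GPL",
}

struct HelloWorld;

impl kernel::Module for HelloWorld {
    // Entry point: runs once when the module is loaded (insmod/modprobe).
    fn init(_module: &'static ThisModule) -> Result<Self> {
        pr_info!("Hello, world! Rust is in the kernel!\n");
        Ok(HelloWorld)
    }
}

impl Drop for HelloWorld {
    // Runs when the module is unloaded (rmmod), like a C module's exit function.
    fn drop(&mut self) {
        pr_info!("Goodbye, world! The Rust module is being unloaded.\n");
    }
}
```

This code uses the kernel crate, the Rust abstraction layer that ships with the kernel source. The module! macro handles the registration boilerplate and the metadata (name, author, description, license) that modinfo reports. The kernel::Module trait requires an init function, which is our module’s entry point. The Drop implementation provides a safe, idiomatic way to handle cleanup, equivalent to a C module’s exit function. No #![no_std] attribute is needed, because Kbuild applies it when compiling kernel crates.

The macro’s fields and the trait’s signature have changed between kernel releases. Linux 6.1, for example, still expected byte strings such as b"hello_rust", and older out-of-tree versions named the trait KernelModule. Compare against samples/rust/rust_minimal.rs in your own tree.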
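The init/Drop pairing is ordinary Rust ownership at work: the kernel keeps the value returned by init alive while the module is loaded and drops it on unload. If init returns an error, no value exists, so no cleanup runs. This mirrors a C module, whose exit function is not called after a failed init. The user-space analogue below shows both paths. Its names are hypothetical, and a vector stands in for the kernel log.

```rust
use std::cell::RefCell;
use std::rc::Rc;

// Stands in for the kernel log in this user-space analogue.
type Log = Rc<RefCell<Vec<&'static str>>>;

struct HelloWorld {
    log: Log,
}

impl HelloWorld {
    // Plays the role of `init`. On error no `HelloWorld` is created,
    // so there is nothing to drop and the "exit" step never runs.
    fn init(log: &Log, fail: bool) -> Result<Self, &'static str> {
        if fail {
            log.borrow_mut().push("init failed");
            return Err("ENOMEM");
        }
        log.borrow_mut().push("Hello, world!");
        Ok(HelloWorld { log: Rc::clone(log) })
    }
}

impl Drop for HelloWorld {
    // Plays the role of the C exit function: runs once, on unload.
    fn drop(&mut self) {
        self.log.borrow_mut().push("Goodbye, world!");
    }
}

// Simulates loading the module, then unloading it if loading succeeded.
fn load_then_unload(fail: bool) -> Vec<&'static str> {
    let log: Log = Rc::default();
    if let Ok(module) = HelloWorld::init(&log, fail) {
        drop(module); // "rmmod"
    }
    let entries = log.borrow().clone();
    entries
}
```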

Building a Practical Module: A Character Device in Rust

While “Hello, World!” is a great start, a more practical example helps illustrate how Rust interacts with core kernel subsystems. Let’s build a simple character device. A character device is a common type of driver that presents a file-like interface in /dev, allowing user-space programs to interact with it. It is one of the most fundamental kinds of Linux device driver.

The Module Structure and Makefile

To build our module, we need a Makefile that integrates with the kernel’s build system (Kbuild). This file tells Kbuild how to compile our Rust source code and link it into a kernel module file (.ko).

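```make
# Kbuild builds rust_chardev.o from rust_chardev.rs in this directory.
obj-m := rust_chardev.o

# Point to the build tree of a kernel configured with CONFIG_RUST=y
KDIR ?= /lib/modules/$(shell uname -r)/build

all:
	$(MAKE) -C $(KDIR) M=$(PWD) modules

clean:
	$(MAKE) -C $(KDIR) M=$(PWD) clean
```

This Makefile defines our module object and points to the kernel build directory; note that recipe lines must start with a tab. Running make invokes Kbuild, which compiles rust_chardev.rs (the source file named after the object) into rust_chardev.ko. The kernel in KDIR must itself have been built with Rust support and the same Rust toolchain.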

Implementing the Character Device

Our character device will be very simple: when a user reads from it, it will return a fixed string. This requires us to define its file operations (open, read, write, and so on) and register the device with the kernel, so that the Virtual File System (VFS) can route calls on the device file to our code.
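A user-space model can make the shape of this interface concrete. The sketch below is plain Rust; FileOps, FixedMessage, and cat are hypothetical names, not kernel APIs. Each operation has a default that fails with an EINVAL-style error, and the device overrides only read. The reader loops the way cat does: the file position advances by the number of bytes returned, and reading stops when read returns 0. A read handler that ignores the offset would therefore never signal end of file.

```rust
// Hypothetical errno-style error for this model.
#[derive(Debug, PartialEq)]
enum Errno {
    Inval, // stands in for EINVAL
}

// A user-space model of an operations table: each method has a default,
// so a device overrides only the operations it supports.
trait FileOps {
    fn open(&mut self) -> Result<(), Errno> {
        Ok(())
    }
    fn read(&mut self, _buf: &mut [u8], _offset: u64) -> Result<usize, Errno> {
        Err(Errno::Inval)
    }
    fn write(&mut self, _data: &[u8], _offset: u64) -> Result<usize, Errno> {
        Err(Errno::Inval)
    }
}

// A read-only device that returns a fixed message.
struct FixedMessage(&'static [u8]);

impl FileOps for FixedMessage {
    // Copies the part of the message starting at `offset`; 0 means end of file.
    fn read(&mut self, buf: &mut [u8], offset: u64) -> Result<usize, Errno> {
        let msg = self.0;
        let start = usize::try_from(offset).unwrap_or(usize::MAX).min(msg.len());
        let len = (msg.len() - start).min(buf.len());
        buf[..len].copy_from_slice(&msg[start..start + len]);
        Ok(len)
    }
}

// Mimics `cat`: read in chunks, advancing the position by the bytes
// returned, until `read` reports end of file.
fn cat(dev: &mut impl FileOps, chunk: usize) -> Result<Vec<u8>, Errno> {
    dev.open()?;
    let mut out = Vec::new();
    let mut buf = vec![0u8; chunk];
    let mut pos: u64 = 0;
    loop {
        let n = dev.read(&mut buf, pos)?;
        if n == 0 {
            return Ok(out);
        }
        out.extend_from_slice(&buf[..n]);
        pos += n as u64;
    }
}
```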

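The kernel crate provides safe abstractions for these operations. We’ll define a type and implement the file::Operations trait for it; the trait’s methods correspond to entries in C’s struct file_operations.

The chrdev and file::Operations abstractions used here come from the Rust-for-Linux project’s out-of-tree development branch. They were not merged into mainline in this form, and mainline has since gained a separate miscdevice abstraction. Treat the example as illustrative and check the APIs available in the tree you build against.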

```rust
use kernel::prelude::*;
use kernel::{chrdev, file, io_buffer::IoBufferWriter};

module! {
    type: RustCharDev,
    name: "rust_chardev",
    author: "Your Name",
    description: "A simple Rust character device",
    license: "GPL",
}

// The file operations for our character device. It keeps no per-open
// state, so the trait's associated data types keep their `()` defaults.
struct CharDevOps;

#[vtable]
impl file::Operations for CharDevOps {
    // Called when the device file is opened.
    fn open(_shared: &(), _file: &file::File) -> Result {
        pr_info!("rust_chardev: device opened\n");
        Ok(())
    }

    // Called when a process reads from the device file.
    fn read(
        _data: (),
        _file: &file::File,
        buf: &mut impl IoBufferWriter,
        offset: u64,
    ) -> Result<usize> {
        let message = b"Hello from a Rust character device!\n";

        // Return 0 (end of file) once the whole message has been read;
        // otherwise `cat` would print it forever.
        if offset >= message.len() as u64 {
            return Ok(0);
        }
        let start = offset as usize;

        // Never write past the end of the user's buffer.
        let len = core::cmp::min(message.len() - start, buf.len());
        buf.write_slice(&message[start..start + len])?;
        pr_info!("rust_chardev: read {} bytes\n", len);
        Ok(len)
    }
}

struct RustCharDev {
    // Dropping the registration unregisters the device (RAII).
    _dev: Pin<Box<chrdev::Registration<1>>>,
}

impl kernel::Module for RustCharDev {
    // In the out-of-tree branch this example targets, `init` also
    // receives the module name.
    fn init(name: &'static CStr, module: &'static ThisModule) -> Result<Self> {
        pr_info!("Initializing Rust character device\n");

        // Reserve one minor number (starting at 0) under the module's name,
        // then attach our file operations to it.
        let mut dev = chrdev::Registration::new_pinned(name, 0, module)?;
        dev.as_mut().register::<CharDevOps>()?;

        pr_info!("Find the major number in /proc/devices, then run:\n");
        pr_info!("  sudo mknod /dev/rust_chardev c <major> 0\n");
        pr_info!("  cat /dev/rust_chardev\n");

        Ok(RustCharDev { _dev: dev })
    }
}

impl Drop for RustCharDev {
    fn drop(&mut self) {
        pr_info!("Cleaning up Rust character device\n");
    }
}
```

After building the module and loading it with insmod rust_chardev.ko, look up its major number in /proc/devices, create the device node with mknod, and read from it with cat. The chrdev::Registration type is a good example of Rust’s safety principles in action. It uses the RAII (Resource Acquisition Is Initialization) pattern: the device is automatically unregistered when the RustCharDev value is dropped during module unload, so the registration can neither leak nor be released twice.
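The same RAII idea can be modeled in user space. The sketch below is plain Rust; Registry, Registration, and register are hypothetical names, not the kernel crate’s API. A shared vector stands in for the kernel’s table of character devices, and the Registration guard removes its entry in Drop.

```rust
use std::cell::RefCell;
use std::rc::Rc;

// Hypothetical stand-in for the kernel's table of registered devices.
type Registry = Rc<RefCell<Vec<String>>>;

// Owning a `Registration` means the device is registered.
struct Registration {
    registry: Registry,
    name: String,
}

impl Registration {
    fn register(registry: &Registry, name: &str) -> Registration {
        registry.borrow_mut().push(name.to_string());
        Registration {
            registry: Rc::clone(registry),
            name: name.to_string(),
        }
    }
}

impl Drop for Registration {
    // The unregister step: runs exactly once, when the owner is dropped.
    fn drop(&mut self) {
        self.registry.borrow_mut().retain(|n| n != &self.name);
    }
}

// Mirrors the module struct: dropping it drops the registration it owns.
struct RustCharDev {
    _dev: Registration,
}
```

Because RustCharDev owns the Registration, no explicit cleanup call is needed. Dropping the module value is enough, and the compiler guarantees it happens exactly once.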

Advanced Techniques: Interoperability and Safe Abstractions

While new drivers can be written largely in Rust, they still need to interact with the kernel’s vast existing C codebase. That boundary, the Foreign Function Interface (FFI), is where Rust’s unsafe keyword comes into play, and it must be handled with extreme care. The Rust declarations for the kernel’s C APIs are generated with bindgen during the build.

Working with Unsafe Code and C APIs

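An unsafe block tells the compiler, in effect, “I know what I’m doing, and I guarantee this code is safe.” Inside it, the programmer rather than the compiler is responsible for upholding Rust’s safety rules. The borrow checker and type checks still apply; unsafe only unlocks a few extra operations. In kernel development, the two that matter most are dereferencing raw pointers and calling C functions. The core philosophy of the Rust-for-Linux project is not to eliminate unsafe entirely but to encapsulate it within higher-level, safe abstractions.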

For example, a kernel subsystem might expose a C function that takes a raw pointer. The Rust code would call this function inside an unsafe block but wrap it in a safe Rust function that validates inputs and handles return values gracefully, exposing a safe API to the rest of the Rust module.
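Here is a minimal sketch of the pattern, written as self-contained user-space Rust. raw_checksum is a hypothetical stand-in for a C function that takes a raw pointer and a length; in the kernel, such a declaration would come from the bindgen-generated bindings. checksum is the safe wrapper that the rest of a module would call.

```rust
// Stand-in for a C function such as `u32 raw_checksum(const u8 *buf, size_t len)`.
// Like its C counterpart, it trusts the caller completely.
//
// # Safety
// `buf` must be non-null and valid for reads of `len` bytes.
unsafe fn raw_checksum(buf: *const u8, len: usize) -> u32 {
    let mut sum: u32 = 0;
    for i in 0..len {
        // SAFETY: the caller guarantees `buf..buf + len` is readable.
        sum = sum.wrapping_add(unsafe { *buf.add(i) } as u32);
    }
    sum
}

// Safe wrapper: a slice always carries a valid pointer and an accurate
// length, so the C-style precondition holds by construction.
fn checksum(data: &[u8]) -> u32 {
    // SAFETY: `data.as_ptr()` is valid for reads of `data.len()` bytes
    // for as long as the borrow `data` lives.
    unsafe { raw_checksum(data.as_ptr(), data.len()) }
}
```

Callers of checksum never write unsafe themselves. The single unsafe call is justified once, next to the code that makes it correct.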

Building Safe Wrappers: The Spinlock Example

A classic example of a necessary but dangerous kernel primitive is the spinlock. Misusing a spinlock in C can easily lead to deadlocks or race conditions. In Rust, we can create a safe wrapper that uses RAII to ensure the lock is always released.

The kernel crate already provides safe wrappers such as SpinLock, so a driver does not need to build its own. The principle is still worth understanding: acquiring the lock returns a guard object that holds the lock and automatically releases it when the guard goes out of scope. Here is the kernel crate’s SpinLock in use:

```rust
// Written against the pin-init API of recent mainline kernels;
// exact names have moved between releases.
use kernel::prelude::*;
use kernel::sync::SpinLock;
use kernel::{new_spinlock, stack_pin_init};

// A struct that protects a counter with a spinlock. Kernel locks must not
// move after initialization, so the field is structurally pinned.
#[pin_data]
struct SharedData {
    #[pin]
    lock: SpinLock<u32>,
}

fn demonstrate_locking() -> Result<()> {
    // Initialize the struct in place on the stack. `new_spinlock!` also
    // sets up the lock class that lockdep needs.
    stack_pin_init!(let shared_data = pin_init!(SharedData {
        lock <- new_spinlock!(0),
    }));

    // The `lock()` method acquires the spinlock and returns a guard.
    let mut data_guard = shared_data.lock.lock();

    // We can now safely access and modify the data through the guard.
    *data_guard += 1;
    pr_info!("Data is now: {}\n", *data_guard);

    // When `data_guard` goes out of scope at the end of this function, its
    // `Drop` implementation releases the spinlock automatically. This
    // prevents accidental deadlocks from forgetting to unlock.
    Ok(())
}
```

This pattern is central to writing safe and idiomatic Rust in the kernel. Because the counter lives inside the lock, it can only be reached through the guard, so it cannot be touched without holding the lock. By building these small, verifiable, safe abstractions around unsafe C APIs, developers can construct complex drivers and subsystems with a much higher degree of confidence. That promises more stable and secure drivers for everyone who runs and administers Linux systems.
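To see how such a guard can be built, here is a user-space sketch of a spin lock that uses only Rust’s standard library. It illustrates the principle rather than the kernel’s implementation, which wraps the C spinlock_t and integrates with lockdep. SpinLock and SpinLockGuard here are names chosen for illustration.

```rust
use std::cell::UnsafeCell;
use std::hint;
use std::ops::{Deref, DerefMut};
use std::sync::atomic::{AtomicBool, Ordering};

// The lock owns the data it protects; the data is reachable only through
// a guard, and a guard exists only while the lock is held.
pub struct SpinLock<T> {
    locked: AtomicBool,
    data: UnsafeCell<T>,
}

// SAFETY: access to `data` is serialized by `locked`, so sharing the lock
// between threads is sound whenever `T` can be sent between threads.
unsafe impl<T: Send> Sync for SpinLock<T> {}

pub struct SpinLockGuard<'a, T> {
    lock: &'a SpinLock<T>,
}

impl<T> SpinLock<T> {
    pub const fn new(data: T) -> Self {
        SpinLock { locked: AtomicBool::new(false), data: UnsafeCell::new(data) }
    }

    pub fn lock(&self) -> SpinLockGuard<'_, T> {
        // Spin until we flip `locked` from false to true.
        while self
            .locked
            .compare_exchange_weak(false, true, Ordering::Acquire, Ordering::Relaxed)
            .is_err()
        {
            hint::spin_loop();
        }
        SpinLockGuard { lock: self }
    }
}

impl<T> Deref for SpinLockGuard<'_, T> {
    type Target = T;
    fn deref(&self) -> &T {
        // SAFETY: the guard exists only while the lock is held.
        unsafe { &*self.lock.data.get() }
    }
}

impl<T> DerefMut for SpinLockGuard<'_, T> {
    fn deref_mut(&mut self) -> &mut T {
        // SAFETY: the lock is held, and `&mut self` makes this the only access.
        unsafe { &mut *self.lock.data.get() }
    }
}

impl<T> Drop for SpinLockGuard<'_, T> {
    // Releasing in `Drop` is what makes a forgotten unlock impossible.
    fn drop(&mut self) {
        self.lock.locked.store(false, Ordering::Release);
    }
}
```

Acquire ordering on lock and Release ordering on unlock are the minimum needed for writes made in one critical section to be visible to the next holder. compare_exchange_weak may fail spuriously, which is harmless inside a retry loop.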

Best Practices and the Road Ahead

As Rust’s role in the kernel evolves, a set of best practices is emerging. Following these guidelines is crucial for writing clean, safe, and maintainable kernel code.

Guidelines for Rust Kernel Development

- Minimize unsafe: Keep unsafe blocks as small and localized as possible. Always precede each one with a // SAFETY: comment explaining why the block is necessary and how its safety is guaranteed.
- Leverage Abstractions: Prefer using the safe abstractions provided by the kernel crate over calling C functions directly.
- Embrace the Type System: Use Rust’s powerful type system to represent state. For example, use Option<T> for values that may be absent, such as pointers that can be null, and Result<T, E> for fallible operations.
- Use Clippy: The Rust linter, Clippy, is an invaluable tool for catching common mistakes and improving code quality; the kernel build runs it when invoked with make CLIPPY=1.
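The first and third guidelines often meet at the boundary with C, where a pointer may legitimately be null. The user-space sketch below does three things: it converts the raw pointer into a NonNull once, keeps the dereference in a small unsafe block justified by a SAFETY comment, and reports failure through Result. read_status and StatusError are hypothetical names.

```rust
use std::ptr::NonNull;

// Hypothetical error type; kernel code would return the `kernel` crate's
// `Error` (for example `ENODEV`) instead.
#[derive(Debug, PartialEq)]
enum StatusError {
    NoDevice,
}

/// Reads a status word through a pointer received from "C".
///
/// # Safety
///
/// If `ptr` is non-null, it must point to a valid, aligned `u32` that is not
/// being written concurrently.
unsafe fn read_status(ptr: *mut u32) -> Result<u32, StatusError> {
    // Check for null once, at the boundary: from here on, the `NonNull`
    // type records that the pointer is not null.
    let ptr = NonNull::new(ptr).ok_or(StatusError::NoDevice)?;
    // SAFETY: `ptr` is non-null, and the caller guarantees it is valid
    // and aligned, as documented above.
    Ok(unsafe { *ptr.as_ptr() })
}
```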

The Future Outlook

The integration of Rust is a long-term project. While you won’t see the entire kernel rewritten overnight, new drivers and subsystems are already being developed in Rust. The Asahi Linux project, for example, is using Rust to write a GPU driver for Apple Silicon, a monumental task that showcases the language’s capabilities. Work like this could accelerate Linux support for new and complex hardware.

As this effort matures, users of all major distributions, including Arch Linux, Manjaro, Pop!_OS, and even specialized ones like Kali Linux, will benefit from the increased security and stability. The foundational work being done today is paving the way for a more resilient Linux ecosystem for years to come.

Conclusion: A New Chapter for Linux

The introduction of Rust into the Linux kernel is more than just a technical curiosity; it represents a fundamental investment in the long-term health and security of the entire open-source ecosystem. By providing a safer alternative to C for new kernel code, the project is proactively addressing the root cause of many bugs and vulnerabilities. We’ve seen how Rust’s core principles of ownership and borrowing, combined with a philosophy of building safe abstractions, can be applied to write robust kernel modules.

While the journey is still in its early stages, the momentum is undeniable. For developers, system administrators, and Linux enthusiasts, this is a space to watch closely. The skills and patterns emerging from the Rust-for-Linux project are shaping the future of systems programming. As more drivers, filesystems, and core kernel components are built on this safer foundation, Linux is well placed to remain at the forefront of innovation, performance, and security.