Ext4 Supercharges Performance: A Deep Dive into Parallel Direct I/O Enhancements in Linux

The Linux kernel is a marvel of continuous evolution, with each release bringing a host of improvements, security fixes, and performance enhancements. While some changes grab headlines, others are subtle yet profound, targeting specific bottlenecks that unlock massive potential for demanding workloads. One such groundbreaking development has recently landed in the ext4 filesystem, the default for a vast number of Linux distributions. A significant optimization now dramatically accelerates parallel direct I/O (DIO) overwrite operations, transforming performance in critical applications like databases, virtualization, and high-performance computing. This article delves into the technical details of this enhancement, exploring the problem it solves, how it works, and what it means for the broader Linux ecosystem.

Understanding the I/O Landscape: Buffered vs. Direct I/O

To appreciate the magnitude of this ext4 improvement, it’s essential to first understand the two primary modes of file I/O in Linux: buffered I/O and direct I/O. This distinction is a cornerstone of Linux performance tuning.

Buffered I/O: The Default Path

By default, file reads and writes in Linux go through the page cache, the kernel’s in-memory cache of file data. When an application writes data, the kernel copies it into the page cache and reports the write as complete as soon as the copy is done. The actual write to the physical storage device happens later, when the kernel’s writeback mechanism flushes the dirty pages. This approach offers several advantages:

- Speed: Writing to RAM is orders of magnitude faster than writing to disk, making applications feel highly responsive.

- Efficiency: The kernel can coalesce multiple small writes into larger, more efficient ones, reducing disk fragmentation and overhead.

- Read Caching: Data read from disk is also kept in the page cache, so subsequent reads of the same data are served from memory.

However, for applications that manage their own caching, like many database systems (PostgreSQL, MariaDB), the kernel’s page cache can be redundant, leading to “double caching” and consuming valuable memory.
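The gap between a write being acknowledged and the data reaching the device can be observed from the shell. The sketch below is illustrative only: it assumes GNU dd, date, and stat, uses a throwaway temporary file, and the timings it prints depend entirely on the machine. The first dd returns once the data is in the page cache; the second adds conv=fsync, which makes dd flush the file to storage before it exits.

```bash
# Hypothetical scratch file; mktemp picks the name.
f=$(mktemp)

# Helper (not part of any tool): current time in milliseconds.
now_ms() { echo $(( $(date +%s%N) / 1000000 )); }

t0=$(now_ms)
# Buffered write: dd returns once the data sits in the page cache.
dd if=/dev/zero of="$f" bs=1M count=16 2>/dev/null
t1=$(now_ms)
# Same write, but conv=fsync makes dd call fsync() on the output before exiting.
dd if=/dev/zero of="$f" bs=1M count=16 conv=fsync 2>/dev/null
t2=$(now_ms)

size=$(stat -c %s "$f")
echo "buffered: $((t1 - t0)) ms, with fsync: $((t2 - t1)) ms, size: $size bytes"
rm -f "$f"
```

If the temporary directory lives on tmpfs, there is no backing device to flush to, so the two timings will look alike; pointing TMPDIR at a disk-backed directory gives a more telling comparison.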

Direct I/O: Bypassing the Cache for Performance

Direct I/O, enabled by the O_DIRECT flag when opening a file, provides a path for data to be transferred directly between the application’s buffer and the storage device, completely bypassing the kernel’s page cache. This is crucial for applications that:

- Implement their own intelligent caching mechanisms.

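- Want tight control over when data reaches the device. Note that O_DIRECT by itself does not guarantee durability; applications that need it still call fsync() or open the file with O_DSYNC or O_SYNC.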

- Handle very large data streams where caching offers little benefit.

This is common for databases and virtualization, where QEMU/KVM can be configured to use direct I/O for virtual disk images. Here is a simple C code example demonstrating how to open a file for direct I/O and write one aligned block.

    #define _GNU_SOURCE   /* exposes O_DIRECT in <fcntl.h> */
    #include <stdio.h>
    #include <stdlib.h>
    #include <unistd.h>
    #include <fcntl.h>
    #include <string.h>

    #define BLOCK_SIZE 4096

    int main(void) {
        const char *filename = "direct_io_test.dat";
        int fd;
        int err;
        int status = 0;
        char *buffer;
        ssize_t bytes_written;

        // Open the file with the O_DIRECT flag
        // O_WRONLY: Write-only
        // O_CREAT: Create the file if it doesn't exist
        // O_DIRECT: Bypass the page cache
        fd = open(filename, O_WRONLY | O_CREAT | O_DIRECT, 0644);
        if (fd == -1) {
            perror("open");
            return 1;
        }

        // Direct I/O requires an aligned buffer, file offset, and transfer size.
        // posix_memalign() returns the error code instead of setting errno.
        err = posix_memalign((void **)&buffer, BLOCK_SIZE, BLOCK_SIZE);
        if (err != 0) {
            fprintf(stderr, "posix_memalign: %s\n", strerror(err));
            close(fd);
            return 1;
        }

        // Prepare some data
        memset(buffer, 'A', BLOCK_SIZE);

        // Write the data directly to disk
        bytes_written = write(fd, buffer, BLOCK_SIZE);
        if (bytes_written == -1) {
            perror("write");
            status = 1;
        } else {
            printf("Successfully wrote %zd bytes using Direct I/O.\n", bytes_written);
        }

        // Clean up
        free(buffer);
        close(fd);
        unlink(filename); // Delete the test file
        return status;
    }

The Bottleneck: Serialization in Parallel Overwrites

Modern servers are equipped with multi-core CPUs and fast NVMe storage, making parallel processing essential for high throughput. A common performance strategy is to have multiple threads write to different parts of the same large file simultaneously. While this works well in many scenarios, a specific and painful bottleneck existed within ext4 when performing parallel direct I/O overwrites on pre-allocated files.

Uninitialized Extents: A Double-Edged Sword

Ext4 tracks file data with extents, each describing a contiguous range of blocks. When an application pre-allocates space for a large file, for example with fallocate, ext4 reserves the blocks on disk without writing zeros to them, which is much faster than filling the file. The reserved ranges are recorded in the filesystem metadata as “uninitialized” (also called “unwritten”) extents, and reads from them return zeros. When data is later written to these blocks for the first time, the affected range is converted to an “initialized” extent.
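Pre-allocation is easy to observe from the shell. The following is a minimal sketch, assuming the util-linux fallocate command and GNU stat, tr, and wc; the temporary file is hypothetical and the filesystem must support fallocate. It reserves 8 MiB, then compares the apparent size, the space actually allocated, and the number of non-zero bytes read back.

```bash
# Hypothetical scratch file; mktemp picks the name.
f=$(mktemp)

# Reserve 8 MiB without writing any data.
fallocate -l 8M "$f"

# Apparent file size in bytes.
size=$(stat -c %s "$f")
# Space actually allocated: block count times the size of each counted block.
allocated=$(( $(stat -c %b "$f") * $(stat -c %B "$f") ))
# Strip NUL bytes and count what is left: pre-allocated ranges read as zeros.
nonzero=$(tr -d '\0' < "$f" | wc -c)

echo "apparent size:            $size bytes"
echo "allocated on disk:        $allocated bytes"
echo "non-zero bytes read back: $nonzero"
rm -f "$f"
```

The space is fully allocated even though nothing has been written, which is the state the first direct I/O write into the region then has to convert. On ext4, running filefrag -v (from e2fsprogs) on such a file should list the extents with an unwritten flag.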

The Locking Contention Problem

The problem arose from how ext4 handled this conversion process. To maintain data consistency, ext4 took the per-inode read/write semaphore (i_rwsem) in exclusive mode when a write had to convert an uninitialized extent to an initialized one. When one thread started a direct I/O write to a pre-allocated region, it would acquire this exclusive lock on the file’s inode. If other threads tried to write to *any other part* of the same file simultaneously, they would be blocked until the first thread released the lock.

This effectively serialized all parallel writes to the file, negating much of the benefit of multi-core processors and fast storage. A 16-core server issuing 16 parallel writes to one file would make progress on only one of them at a time. This performance cliff was a major concern for database and virtualization workloads on enterprise distributions.

You could benchmark and expose this bottleneck using a tool like fio (Flexible I/O Tester), a staple in Linux performance analysis.

    # First, create a large pre-allocated file
    fallocate -l 10G testfile.img

    # Now, run a parallel direct I/O overwrite test with fio
    # This command simulates 16 parallel jobs writing 4k blocks
    fio --name=parallel-dio-overwrite \
        --filename=testfile.img \
        --direct=1 \
        --rw=randwrite \
        --bs=4k \
        --ioengine=libaio \
        --iodepth=32 \
        --numjobs=16 \
        --group_reporting \
        --size=10G

On an older kernel, running this test would yield surprisingly low throughput, as the 16 jobs would spend most of their time waiting for the inode lock rather than writing data.

The Breakthrough: Unleashing True Parallelism in the Linux Kernel

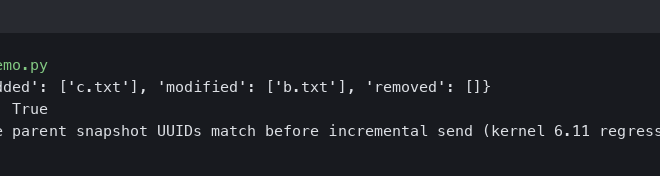

The solution, introduced in the Linux 6.5 kernel, is an elegant and impactful change to the ext4 filesystem driver. It is set to be adopted by all major distributions, from rolling releases like Arch Linux to stable enterprise platforms from Red Hat.

The core of the patch refactors the I/O submission path. Instead of holding the exclusive inode lock for the entire duration of the data copy and extent conversion, the new logic performs these operations with more granular locking. The process now looks something like this:

- A thread initiates a direct I/O write to an uninitialized region.

- A brief lock is taken to handle the metadata work of converting the uninitialized extent to an initialized one.

- Crucially, the lock is released before the expensive, time-consuming data transfer from the user’s buffer to the disk begins.

- This allows other threads to acquire the lock, update metadata for their respective regions, and initiate their own data transfers in parallel.

By decoupling the short metadata update from the long data copy, the serialization point is effectively eliminated. The result is a near-linear scaling of performance with the number of CPU cores. In some test cases, performance has been shown to increase by over 100x, going from single-digit MB/s to several GB/s on modern hardware. This is not just an incremental improvement; it’s a transformative one.
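The effect of narrowing a lock’s scope can be reproduced in user space. The sketch below is only an analogy, not the kernel’s code: it assumes the util-linux flock command and GNU date, the sleep durations are arbitrary stand-ins for a short metadata update and a long data transfer, and the helper names (worker, run) are made up for the illustration. In “coarse” mode each worker holds the lock across both steps; in “fine” mode the lock covers only the short step.

```bash
# Hypothetical lock file standing in for the per-inode lock.
lock=$(mktemp)

# worker MODE: simulate one writer.
worker() {
    if [ "$1" = coarse ]; then
        # Lock held across metadata update (0.02 s) AND data transfer (0.2 s).
        flock "$lock" sh -c 'sleep 0.02; sleep 0.2'
    else
        # Lock held only for the metadata update...
        flock "$lock" sleep 0.02
        # ...and already released while the data transfer runs.
        sleep 0.2
    fi
}

# run MODE: start four workers in parallel, print elapsed milliseconds.
run() {
    start=$(date +%s%N)
    for i in 1 2 3 4; do
        worker "$1" &
    done
    wait
    end=$(date +%s%N)
    echo $(( (end - start) / 1000000 ))
}

coarse_ms=$(run coarse)
fine_ms=$(run fine)
echo "coarse lock: ${coarse_ms} ms, fine-grained lock: ${fine_ms} ms"
rm -f "$lock"
```

With the coarse lock the four simulated transfers run one after another, so the elapsed time grows with the number of workers. With the fine-grained lock only the short steps queue up and the long ones overlap.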

To witness this improvement, you would run the exact same fio command as before, but on a system running Linux kernel 6.5 or newer. The reported bandwidth would be dramatically higher, reflecting the true parallel capability of the underlying hardware.
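Before comparing results, it helps to confirm which side of the 6.5 boundary a machine is on. The helper below is a small sketch with a hypothetical name, kernel_at_least; it parses the major and minor numbers out of a release string such as the one printed by uname -r and compares them numerically, so that 6.10 is correctly treated as newer than 6.5.

```bash
# kernel_at_least RELEASE MAJOR MINOR
# Succeeds if RELEASE (e.g. "6.5.0-14-generic") is at least MAJOR.MINOR.
kernel_at_least() {
    release=$1
    want_major=$2
    want_minor=$3
    major=${release%%.*}        # text before the first dot
    rest=${release#*.}          # text after the first dot
    minor=${rest%%[!0-9]*}      # leading digits of the remainder
    [ "$major" -gt "$want_major" ] ||
        { [ "$major" -eq "$want_major" ] && [ "$minor" -ge "$want_minor" ]; }
}

if kernel_at_least "$(uname -r)" 6 5; then
    echo "Kernel $(uname -r) is 6.5 or newer."
else
    echo "Kernel $(uname -r) is older than 6.5."
fi
```

Treat the result as a hint rather than proof: distribution kernels sometimes backport filesystem changes to older version numbers, so a benchmark is the more reliable check.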

Real-World Impact and Best Practices

This kernel enhancement has far-reaching implications. The best part is that it’s a transparent improvement—no application changes or system reconfigurations are needed. Simply updating the kernel on your favorite distribution, whether it’s Ubuntu, Fedora, openSUSE, or others, will enable this new capability.

Who Benefits Most?

- Database Administrators: Databases that pre-allocate large data files and are configured for direct I/O, such as MySQL or MariaDB with InnoDB’s innodb_flush_method=O_DIRECT, should see significant performance gains during heavy write loads and data imports. PostgreSQL uses buffered I/O by default, so it benefits only where direct I/O is explicitly in use.

- Virtualization and Cloud Platforms: Hypervisors like KVM, and containerized workloads under Docker or Podman that write to large, pre-allocated disk images or volumes with direct I/O, can see lower I/O latency and higher throughput.

- HPC and Scientific Computing: Applications that process massive datasets stored in single, large files will be able to leverage parallel I/O more effectively.

Verification and Simple Testing

First, verify your kernel version. A simple command will tell you what you’re running:

    uname -r

Look for a version number of 6.5 or higher. For a quick, albeit less scientific, test than fio, you can use the dd command. The following script runs several dd instances in the background to simulate parallel writes.

    #!/bin/bash
    # A simple script to demonstrate parallel direct I/O writes with dd

    FILENAME="dd_parallel_test.img"
    FILESIZE_GB=4
    BLOCK_SIZE="1M"
    COUNT=256 # Total MB per process: 1M * 256 = 256MB
    NUM_JOBS=4

    # Pre-allocate the file
    echo "Pre-allocating a ${FILESIZE_GB}GB file..."
    fallocate -l "${FILESIZE_GB}G" "$FILENAME"

    echo "Starting ${NUM_JOBS} parallel dd jobs..."
    START_TIME=$SECONDS

    for i in $(seq 1 "$NUM_JOBS")
    do
        # Each job writes to a different offset in the file
        OFFSET=$(( (i-1) * COUNT ))

        # Run dd in the background
        # oflag=direct enables Direct I/O
        # seek=N skips N blocks from the beginning of the output file
        dd if=/dev/zero of="$FILENAME" bs="$BLOCK_SIZE" count="$COUNT" seek="$OFFSET" oflag=direct conv=notrunc &
    done

    # Wait for all background jobs to finish
    wait

    ELAPSED_TIME=$((SECONDS - START_TIME))
    echo "All jobs completed in ${ELAPSED_TIME} seconds."

    # Clean up
    rm "$FILENAME"

Running this script on a kernel before the patch and then again on a kernel with the patch will show a dramatic reduction in the total time taken to complete the writes.

Conclusion: A Testament to Open Source Innovation

The massive performance boost for parallel direct I/O overwrites in ext4 is a perfect example of the power of the open-source development model. A persistent, highly technical bottleneck that impacted performance-critical applications was identified, analyzed, and solved by the community. This change reinforces ext4’s position as a reliable, high-performance, and continually improving filesystem for the vast majority of Linux users.

As this update rolls out across the ecosystem—from server distributions like AlmaLinux and CentOS Stream to desktop favorites like Linux Mint and Pop!_OS—users with I/O-intensive workloads stand to gain a significant, “free” performance upgrade. It’s a powerful reminder that even in mature technologies like ext4, there is always room for innovation that pushes the boundaries of what’s possible with Linux.