A Deep Dive into Linux Clustering: A Practical Guide to Pacemaker and Corosync for High-Availability Web Servers

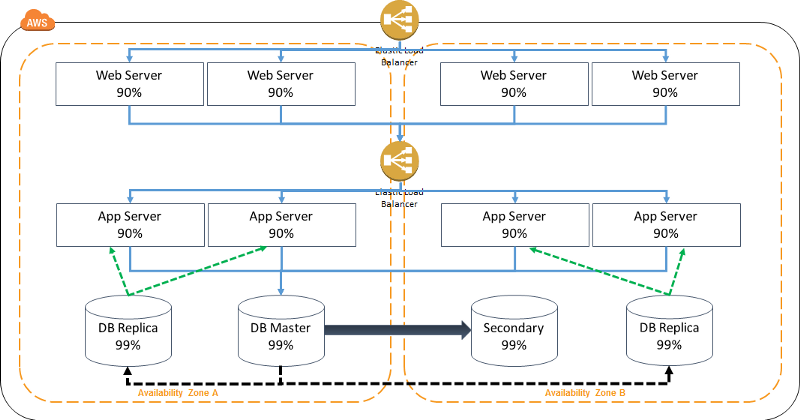

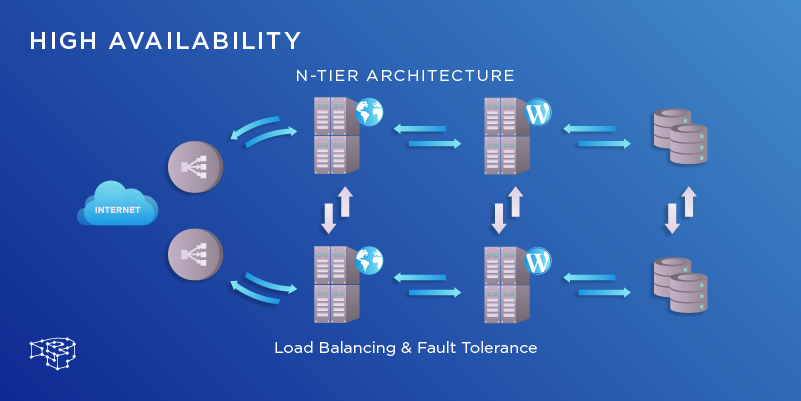

In today’s digital landscape, service uptime is not just a goal; it’s a fundamental requirement. For businesses running critical web applications, even a few minutes of downtime can translate to significant revenue loss and damage to their reputation. This is where the power of Linux clustering comes into play. High-Availability (HA) clusters are the backbone of resilient infrastructure, ensuring that services remain accessible even in the event of a hardware or software failure. While the topic can seem daunting, the modern Linux ecosystem, with its powerful open-source tools, makes building a robust HA cluster more accessible than ever.

This article provides a comprehensive technical guide to building a two-node, high-availability Apache web server cluster. We will explore the core components of the Linux HA stack, focusing on Pacemaker, the cluster resource manager, and Corosync, the underlying messaging layer. Whether you are a system administrator managing enterprise infrastructure on Red Hat or a DevOps engineer deploying on a cloud instance running Ubuntu, the principles and practices discussed here apply broadly. It is written for anyone looking to implement fault-tolerant systems using battle-tested, open-source technology.

The Anatomy of a High-Availability Linux Cluster

Before diving into commands and configuration files, it’s crucial to understand the foundational components that make a Linux HA cluster function. The modern Linux HA stack is primarily composed of two key, cooperative services: Corosync and Pacemaker. They each have a distinct but vital role in maintaining service continuity.

The Messaging Layer: Corosync

At the lowest level of the stack is Corosync. Its primary responsibility is to manage cluster membership and provide a reliable messaging bus between all nodes in the cluster. Think of it as the cluster’s nervous system. Corosync handles:

- Membership: It determines which nodes are currently online and part of the cluster.

- Messaging: It provides an ordered and reliable way for nodes to communicate state changes, heartbeats, and other critical information.

- Quorum: This is arguably its most critical function. Quorum is the minimum number of votes (by default, one per node) that must be present for the cluster to be considered operational; normally this is a strict majority. This mechanism is the primary defense against a “split-brain” scenario, in which a network partition causes two groups of nodes to each believe they are the active cluster, leading to data corruption. A two-node cluster cannot keep a majority after losing one node, so it needs special handling, such as Corosync’s `two_node` option or an external “quorum device”.

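Recent Corosync releases have focused on improving performance and scalability. Corosync 3, for example, made the Kronosnet (`knet`) transport the default; it supports multiple redundant links and built-in encryption, making Corosync a solid foundation for clusters of all sizes.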
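To make the majority rule concrete, the following sketch computes how many votes `corosync_votequorum` needs for quorum, assuming the default of one vote per node. The `quorum_votes` function is a hypothetical helper for illustration, not part of Corosync.

```shell
#!/bin/sh
# Sketch: votes corosync_votequorum needs for quorum, assuming one vote per
# node (the default). quorum_votes is a hypothetical helper, not a Corosync tool.
quorum_votes() {
    nodes=$1
    two_node=${2:-0}
    if [ "$nodes" -eq 2 ] && [ "$two_node" -eq 1 ]; then
        # two_node: 1 lets a single surviving node stay quorate. votequorum
        # also turns on wait_for_all, so after a cold start both nodes must
        # have been seen together before the cluster first becomes quorate.
        echo 1
    else
        # Strict majority: more than half of the expected votes.
        echo $(( nodes / 2 + 1 ))
    fi
}

for n in 2 3 4 5; do
    echo "$n nodes: quorum needs $(quorum_votes "$n") votes"
done
echo "2 nodes with two_node: 1 -> quorum needs $(quorum_votes 2 1) vote"
```

The plain two-node case shows why such a cluster loses quorum as soon as either node fails, and why the `two_node` setting exists. On a running cluster, `corosync-quorumtool -s` reports the actual expected votes and quorum threshold.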

The Cluster Resource Manager (CRM): Pacemaker

Sitting on top of Corosync is Pacemaker, the brains of the operation. While Corosync knows which nodes are online, Pacemaker decides what to do with that information. As the CRM, Pacemaker is responsible for starting, stopping, and monitoring all the services (or “resources”) that the cluster manages. Key concepts in Pacemaker’s architecture include:

- Resources: Anything the cluster manages, such as a virtual IP address, an Apache service, a filesystem mount, or a database instance.

- Resource Agents (RAs): These provide the standardized interface Pacemaker uses to manage a resource (e.g., start, stop, monitor). Pacemaker supports several agent classes, including OCF scripts (the most capable), LSB init scripts, and systemd units.

- Constraints: These are the rules that govern resource behavior. You can define location constraints (where a resource can run), colocation constraints (which resources must run together), and order constraints (the sequence in which resources must start).

- Fencing/STONITH: This stands for “Shoot The Other Node In The Head” and is a non-negotiable component of a production cluster. If a node becomes unresponsive, Pacemaker must have a way to definitively power it off so that it cannot keep accessing shared resources, thus avoiding data corruption. This is a cornerstone of Linux clustering best practices.
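The resource-agent contract is easiest to see in code. Below is a minimal sketch of an OCF-style agent, loosely modeled on the idea behind Pacemaker’s `ocf:pacemaker:Dummy` agent: it treats a state file as the “service”. The state-file location and function names are assumptions for illustration; a real agent must also implement the `meta-data` action (and usually `validate-all`) and would be installed under the OCF agent directory (typically `/usr/lib/ocf/resource.d/<provider>/`).

```shell
#!/bin/sh
# Minimal OCF-style resource agent sketch. The "resource" is a state file.
# Assumption: STATE_FILE and the function names are hypothetical; a real
# agent reads parameters from OCF_RESKEY_* variables and implements meta-data.

# Standard OCF exit codes used by Pacemaker
OCF_SUCCESS=0
OCF_ERR_GENERIC=1
OCF_ERR_UNIMPLEMENTED=3
OCF_NOT_RUNNING=7

STATE_FILE=${OCF_RESKEY_state:-${TMPDIR:-/tmp}/dummy-agent.state}

agent_monitor() {
    # 0 = running, 7 = cleanly stopped (not the same as failed).
    if [ -f "$STATE_FILE" ]; then
        return $OCF_SUCCESS
    fi
    return $OCF_NOT_RUNNING
}

agent_start() {
    # start must be idempotent: starting a running resource succeeds.
    agent_monitor && return $OCF_SUCCESS
    touch "$STATE_FILE" || return $OCF_ERR_GENERIC
    return $OCF_SUCCESS
}

agent_stop() {
    # stop must be idempotent too: stopping a stopped resource succeeds.
    rm -f "$STATE_FILE" || return $OCF_ERR_GENERIC
    return $OCF_SUCCESS
}

ocf_agent() {
    case "$1" in
        start)   agent_start ;;
        stop)    agent_stop ;;
        monitor) agent_monitor ;;
        *)       return $OCF_ERR_UNIMPLEMENTED ;;
    esac
}

# Pacemaker invokes the agent with the action name as the first argument.
if [ $# -gt 0 ]; then
    ocf_agent "$1"
    exit $?
fi
```

The key detail is the distinction between exit code 0 (running) and 7 (cleanly stopped) in `monitor`: Pacemaker relies on it to tell a stopped resource from a failed one.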

```
# Example snippet from a /etc/corosync/corosync.conf file.
# pcs cluster setup generates this file; it is shown here for reference.
# This defines the totem protocol, node list, quorum, and logging.
totem {
    version: 2
    cluster_name: webcluster
    transport: knet
    crypto_cipher: aes256
    crypto_hash: sha256
}

nodelist {
    node {
        ring0_addr: node1.example.com
        name: node1
        nodeid: 1
    }
    node {
        ring0_addr: node2.example.com
        name: node2
        nodeid: 2
    }
}

quorum {
    provider: corosync_votequorum
    # two_node lets one surviving node keep quorum; it implies wait_for_all.
    two_node: 1
    wait_for_all: 1
}

logging {
    to_syslog: yes
}
```

Practical Implementation: A Two-Node Apache HA Cluster

Now let’s move from theory to practice. This section walks through the essential steps to install and configure a basic two-node cluster on a modern RHEL-compatible distribution such as Rocky Linux, AlmaLinux, or CentOS. The commands use the `dnf` package manager, but equivalents exist for other systems (e.g., `apt` for Debian/Ubuntu, `zypper` for openSUSE).

Prerequisites and Initial Setup

Before installing any software, ensure your nodes are prepared:

- Hostname Resolution: Each node must be able to resolve the other’s hostname. The simplest way is by adding entries to `/etc/hosts` on both nodes.

- Time Synchronization: All cluster nodes must have their time synchronized. Use NTP or Chrony for this.

- Firewall Configuration: The firewall must allow cluster communication. You’ll need to open Corosync’s UDP ports (typically 5404-5405) and TCP port 2224 for `pcsd`; on firewalld systems, the predefined `high-availability` service covers these. Alternatively, for a lab setup, you can temporarily disable the firewall.

- Root/Sudo Access: All commands require administrative privileges.
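The preparation steps can be scripted. The sketch below assumes hypothetical node addresses (192.168.1.11 and 192.168.1.12), chrony for time synchronization, and firewalld; by default it runs in a dry-run mode that only prints what it would change. `add_host_entry` skips names that are already listed, so the script can be re-run safely.

```shell
#!/bin/sh
# Node preparation sketch; run as root on both nodes.
# Assumptions: hypothetical addresses 192.168.1.11/.12, chrony and firewalld
# installed. DRY_RUN=1 (the default) only prints what would change.
set -eu
DRY_RUN=${DRY_RUN:-1}
HOSTS_FILE=${HOSTS_FILE:-/etc/hosts}

run() {
    # Print the command in dry-run mode; execute it otherwise.
    if [ "$DRY_RUN" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

has_host_entry() {
    # Succeed if hostname $2 appears on a non-comment line of hosts file $1.
    awk -v n="$2" '/^[ \t]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$1" 2>/dev/null
}

add_host_entry() {
    # Append "IP NAME" unless NAME is already listed, so re-runs are harmless.
    if has_host_entry "$HOSTS_FILE" "$2"; then
        return 0
    fi
    if [ "$DRY_RUN" = 1 ]; then
        printf 'would add to %s: %s %s\n' "$HOSTS_FILE" "$1" "$2"
    else
        printf '%s %s\n' "$1" "$2" >> "$HOSTS_FILE"
    fi
}

prepare_node() {
    add_host_entry 192.168.1.11 node1
    add_host_entry 192.168.1.12 node2
    # Time synchronization (the chrony service is chronyd on RHEL-family systems).
    run systemctl enable --now chronyd
    # firewalld's predefined high-availability service opens the cluster ports.
    run firewall-cmd --permanent --add-service=high-availability
    run firewall-cmd --reload
}

prepare_node
```

Run it as root on each node with `DRY_RUN=0` once the printed plan looks right.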

Installing the Clustering Software

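The first step is to install the core packages. On RHEL-family distributions these packages come from the High Availability repository, which may need to be enabled first. The `pcs` package provides a unified command-line tool to manage both Pacemaker and Corosync, simplifying administration significantly.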

```shell
# On both nodes, install the necessary packages.
# This command is for RHEL-based systems like CentOS, Rocky Linux, or AlmaLinux.
sudo dnf install -y pacemaker pcs corosync fence-agents-all httpd

# Enable and start the pcsd service, which pcs uses to sync configurations
sudo systemctl enable --now pcsd

# Set a password for the 'hacluster' user created by the installation.
# Use the same password on both nodes (passwd --stdin is specific to RHEL-family systems).
echo "YourSecurePassword" | sudo passwd --stdin hacluster
```

Configuring the Cluster

With the software installed, you can now use `pcs` to build the cluster. This process involves authenticating the nodes to each other and then defining the cluster itself.

```shell
# Run these commands from only ONE of the nodes (e.g., node1)

# Authenticate the nodes to each other using the 'hacluster' user.
# Replace node1 and node2 with your actual hostnames.
sudo pcs host auth node1 node2 -u hacluster -p YourSecurePassword

# Create the cluster. The name 'webcluster' is arbitrary.
sudo pcs cluster setup webcluster node1 node2

# Start the cluster services on both nodes
sudo pcs cluster start --all

# Enable the cluster services to start on boot
sudo pcs cluster enable --all

# Verify the status
sudo pcs status
```

After running these commands, you should see both nodes listed as “Online” in the `pcs status` output. You now have a functioning, albeit empty, cluster.
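Beyond `pcs status`, Corosync’s own tools show whether the messaging layer and quorum look the way the configuration intends. This sketch only bundles those commands; the dry-run wrapper is an assumption for illustration, so set `DRY_RUN=0` to execute them as root on a cluster node.

```shell
#!/bin/sh
# Cluster health-check sketch; run as root on either node.
# DRY_RUN=1 (the default) only prints the commands. No "set -e", so every
# check still runs if an earlier one reports a problem.
set -u
DRY_RUN=${DRY_RUN:-1}

run() {
    # Print the command in dry-run mode; execute it otherwise.
    if [ "$DRY_RUN" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

check_cluster() {
    # Status of this node's Corosync links.
    run corosync-cfgtool -s
    # Expected votes, quorum threshold, and flags such as 2Node and WaitForAll.
    run corosync-quorumtool -s
    # Corosync membership as seen by pcs, then the full cluster view.
    run pcs status corosync
    run pcs status
}

check_cluster
```

For this two-node setup, the quorum output should typically show two total votes and the `2Node` and `WaitForAll` flags, reflecting the `two_node` setting.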

Defining and Managing Cluster Resources

A cluster without resources is just a collection of communicating servers. The next step is to tell Pacemaker what services it needs to manage. For our web server cluster, we need two primary resources: a floating virtual IP (VIP) that clients will connect to, and the Apache service itself.

Creating the Virtual IP and Apache Resources

We will use `pcs` to create these resources. The `IPaddr2` resource agent manages the VIP, and the `apache` agent manages the `httpd` service; both are OCF agents from the `heartbeat` provider, hence the `ocf:heartbeat:` prefix.
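Because the `apache` agent’s monitor operation requests `statusurl`, Apache must serve `/server-status` on each node before the resource is created, and httpd should not also be started by systemd at boot. The sketch below, to be run on both nodes, assumes RHEL-family paths, that `mod_status` is loaded, and a hypothetical drop-in file name `status.conf`; by default it only prints its actions.

```shell
#!/bin/sh
# Apache preparation sketch for the ocf:heartbeat:apache agent; run as root
# on both nodes. Assumptions: RHEL-family paths, mod_status loaded, and a
# hypothetical drop-in file name. DRY_RUN=1 (the default) only prints actions.
set -eu
DRY_RUN=${DRY_RUN:-1}
STATUS_CONF=${STATUS_CONF:-/etc/httpd/conf.d/status.conf}

run() {
    # Print the command in dry-run mode; execute it otherwise.
    if [ "$DRY_RUN" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

write_status_conf() {
    # Serve /server-status to local requests only; the agent's statusurl
    # (http://localhost/server-status) is requested from the node itself.
    cat > "$1" <<'EOF'
<Location /server-status>
    SetHandler server-status
    Require local
</Location>
EOF
}

if [ "$DRY_RUN" = 1 ]; then
    printf 'would write %s\n' "$STATUS_CONF"
else
    write_status_conf "$STATUS_CONF"
fi
# Pacemaker, not systemd, must start httpd, so keep the unit disabled.
run systemctl disable --now httpd
```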

```shell
# Run these commands from only ONE node

# Create a resource for the floating virtual IP address.
# Clients will connect to 192.168.1.100.
sudo pcs resource create ClusterVIP ocf:heartbeat:IPaddr2 ip=192.168.1.100 cidr_netmask=24 op monitor interval=30s

# Create a resource for the Apache web server.
# This uses the ocf:heartbeat:apache agent, which starts httpd itself and
# monitors it by fetching statusurl (mod_status must answer at that URL).
sudo pcs resource create WebServer ocf:heartbeat:apache configfile=/etc/httpd/conf/httpd.conf statusurl="http://localhost/server-status" op monitor interval=30s

# Check 'pcs status' now. While fencing is enabled but no fencing device is
# configured, Pacemaker will not start the resources (see the fencing section).
# Once they do start, they may land on different nodes, which is not what we want.
```

Applying Constraints for Predictable Behavior

To ensure our web service functions correctly, we must enforce two rules: the VIP and the Apache service must always run on the same node, and the VIP must be active *before* Apache tries to bind to it. This is where Pacemaker’s constraints come in.

- Colocation Constraint: This forces `WebServer` and `ClusterVIP` to run on the same physical node.

- Order Constraint: This ensures `ClusterVIP` starts before `WebServer`.

We can group the resources to make applying constraints easier and more logical.

```shell
# Run these commands from only ONE node

# Group the two resources together. This implicitly creates colocation and order constraints:
# the VIP will be started first, followed by the WebServer.
sudo pcs resource group add WebServerGroup ClusterVIP WebServer

# Verify the final cluster status
sudo pcs status
```
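A group is shorthand for colocation plus ordering. If you prefer to keep the resources separate, the same rules can be written as explicit constraints, as in this sketch (use it instead of the group, not in addition to it). The location preference for node1 and its score of 50 are illustrative assumptions, and the dry-run wrapper prints the `pcs` commands unless `DRY_RUN=0`.

```shell
#!/bin/sh
# Explicit-constraint sketch; run as root on one node, INSTEAD of the group.
# DRY_RUN=1 (the default) only prints the pcs commands.
set -eu
DRY_RUN=${DRY_RUN:-1}

run() {
    # Print the command in dry-run mode; execute it otherwise.
    if [ "$DRY_RUN" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

add_web_constraints() {
    # Colocation: place WebServer wherever ClusterVIP runs (INFINITY = mandatory).
    run pcs constraint colocation add WebServer with ClusterVIP INFINITY
    # Order: start ClusterVIP before WebServer (stops run in reverse by default).
    run pcs constraint order ClusterVIP then WebServer
    # Optional, hypothetical preference: favor node1 when both nodes are healthy.
    run pcs constraint location ClusterVIP prefers node1=50
    # Show the resulting constraints.
    run pcs constraint
}

add_web_constraints
```

Explicit constraints are more verbose, but they leave room for finer-grained rules later, such as tying a third resource to only one of the two.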

After adding the group, `pcs status` will show the `WebServerGroup` running on one of the nodes. You can now test failover by running `sudo pcs node standby node1` (assuming the resources are on node1). Pacemaker will gracefully stop the resources on node1 and start them on node2, and after a brief interruption the service is reachable again through the same floating IP address. Run `sudo pcs node unstandby node1` afterwards so node1 can host resources again.
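A repeatable failover drill is worth scripting. This sketch assumes the group currently runs on the node being drained and reuses the VIP 192.168.1.100 from above; `crm_resource --wait` blocks until the cluster settles. Any HTTP status code printed by `curl` (even a 403 from a default welcome page) shows that Apache is answering on the floating IP. Commands are only printed unless `DRY_RUN=0`.

```shell
#!/bin/sh
# Failover drill sketch; run as root on one node. Assumptions: the group is
# currently on the node being drained and the VIP is 192.168.1.100.
# DRY_RUN=1 (the default) only prints the commands. No "set -e", so the node
# is still taken out of standby if the HTTP check fails.
set -u
DRY_RUN=${DRY_RUN:-1}
VIP=${VIP:-192.168.1.100}

run() {
    # Print the command in dry-run mode; execute it otherwise.
    if [ "$DRY_RUN" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

failover_drill() {
    node=$1
    # Drain the node, then wait for Pacemaker to finish moving resources.
    run pcs node standby "$node"
    run crm_resource --wait
    run pcs status resources
    # Print only the HTTP status code returned via the floating IP.
    run curl -sS -o /dev/null -w '%{http_code}\n' "http://$VIP/"
    # Let the node host resources again.
    run pcs node unstandby "$node"
}

failover_drill node1
```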

Fencing, Maintenance, and Best Practices

A cluster without fencing is a risk. As discussed, fencing is the mechanism that isolates a faulty node to prevent data corruption, which makes it central to any high-availability architecture.

The Critical Role of Fencing (STONITH)

In a production environment, you MUST configure a fencing device. This could be an IPMI interface on a physical server, an API call to a hypervisor (for example, on KVM or Proxmox hosts), or a watchdog device. For a lab or testing environment where no fencing device is available, you can disable fencing, with the strong caveat that this is unsafe for production.
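For servers with a BMC, IPMI fencing is a common choice. The sketch below creates one `fence_ipmilan` device per node; the BMC addresses, user name, and password are placeholders, and parameter names can differ between fence-agents versions, so check them with `pcs stonith describe fence_ipmilan` first. Commands are printed unless `DRY_RUN=0`.

```shell
#!/bin/sh
# IPMI fencing sketch; run as root on one node. Assumptions: each node has a
# BMC at the placeholder addresses below, and admin/changeme are placeholder
# credentials. DRY_RUN=1 (the default) only prints the commands.
set -eu
DRY_RUN=${DRY_RUN:-1}

run() {
    # Print the command in dry-run mode; execute it otherwise.
    if [ "$DRY_RUN" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

add_ipmi_fence() {
    node=$1
    bmc=$2
    # One device per node; pcmk_host_list names the node this device can fence.
    run pcs stonith create "fence_$node" fence_ipmilan \
        ip="$bmc" username=admin password=changeme lanplus=1 \
        pcmk_host_list="$node" op monitor interval=60s
    # Common practice: keep each device's monitor off the node it targets.
    run pcs constraint location "fence_$node" avoids "$node"
}

add_ipmi_fence node1 192.168.1.201
add_ipmi_fence node2 192.168.1.202
# Fencing is enabled by default; this re-enables it if it was turned off for testing.
run pcs property set stonith-enabled=true
```

Once the devices exist, `pcs stonith fence node2` performs a real fencing test, so expect node2 to be power-cycled.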

To disable STONITH for testing purposes only (while it is enabled with no fencing device defined, Pacemaker refuses to start any resources):

```shell
sudo pcs property set stonith-enabled=false
```

Disabling this removes the cluster’s primary protection against split-brain scenarios. The cluster will still perform failovers for clean shutdowns or resource failures, but it cannot safely handle a node that becomes unresponsive without actually being offline.

Best Practices for Production Clusters

- Use a Dedicated Network: Configure Corosync to use a dedicated, redundant network link (a “ring” in older Corosync versions, a “link” with knet) for cluster communication to avoid interference from application traffic.

- Implement Robust Fencing: This cannot be overstated. Choose a reliable fencing method appropriate for your environment.

- Test Failover Regularly: Don’t wait for a real failure to discover a configuration issue. Regularly and safely test failover scenarios.

- Monitor and Alert: Integrate your cluster into a monitoring system such as Prometheus and Grafana. Track resource status, quorum changes, and node health.

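- Use Standby or Maintenance Mode: Before system updates or other maintenance, either put the node into standby (`pcs node standby <nodename>`), which moves its resources to the other node in a controlled way, or enable maintenance mode (`pcs node maintenance <nodename>`, or cluster-wide `pcs property set maintenance-mode=true`), which leaves resources running but stops Pacemaker from managing them. Either way, the maintenance work does not trigger unplanned failovers.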
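Both maintenance styles reduce to a few commands. In this sketch, `patch_node` is meant to run on the node being patched and assumes a `dnf`-based system, while `cluster_maintenance` toggles the cluster-wide property; both helper names are hypothetical, and commands are only printed unless `DRY_RUN=0`.

```shell
#!/bin/sh
# Maintenance sketch; run as root. Assumptions: dnf-based nodes and the
# hypothetical helper names below. DRY_RUN=1 (the default) only prints commands.
set -eu
DRY_RUN=${DRY_RUN:-1}

run() {
    # Print the command in dry-run mode; execute it otherwise.
    if [ "$DRY_RUN" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

patch_node() {
    # Run on the node being patched; $1 is its cluster node name.
    run pcs node standby "$1"
    run crm_resource --wait
    run dnf -y upgrade
    run pcs node unstandby "$1"
}

cluster_maintenance() {
    # true: Pacemaker stops starting, stopping and monitoring resources but
    # leaves them running; false: normal management resumes.
    case "$1" in
        true|false) run pcs property set maintenance-mode="$1" ;;
        *) echo "usage: cluster_maintenance true|false" >&2; return 2 ;;
    esac
}

patch_node node1
```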

Conclusion

Building a high-availability Linux cluster with Pacemaker and Corosync provides a powerful, flexible, and cost-effective solution for ensuring the continuous operation of critical services. We’ve journeyed from the foundational concepts of the messaging layer and resource management to a practical, step-by-step implementation of a two-node Apache web server cluster. By understanding resources, constraints, and the absolute necessity of fencing, you can build resilient infrastructure that stands up to failures.

The journey doesn’t end here. The next steps could involve exploring more complex setups, such as integrating shared storage with DRBD, clustering databases like PostgreSQL or MariaDB, or deploying multi-node clusters. The skills you’ve learned form the bedrock of modern Linux administration and DevOps practice. As open-source HA technology continues to evolve, mastering these tools will remain a valuable asset for any systems professional dedicated to reliability and uptime.