Elevating MySQL Availability: The Era of Live Patching and Zero-Downtime Maintenance on Linux

Introduction: The Imperative of Continuous Uptime

In the rapidly evolving landscape of **Linux server news**, one requirement remains constant for database administrators (DBAs) and DevOps engineers: uptime. As organizations increasingly rely on data-driven decision-making, the tolerance for maintenance windows is shrinking to near zero. Traditionally, applying critical security updates to the Linux kernel or the database engine itself required a scheduled restart, disrupting services and potentially causing revenue loss. However, recent advancements in **Linux database news** highlight a paradigm shift toward live patching and zero-downtime maintenance strategies for MySQL architectures.

Whether you run **Ubuntu** LTS releases, enterprise-grade **Red Hat** ecosystems, or community-driven **Rocky Linux** and **AlmaLinux** setups, the goal is the same: maintain data integrity and availability while mitigating security risks. This article delves into the technicalities of modern MySQL administration on Linux, exploring how live patching concepts, robust schema design, and failover orchestration contribute to a resilient infrastructure. We will explore how these methodologies intersect with **Linux security news**, so that vulnerabilities can be patched without the dreaded `systemctl restart mysql`.

From **Debian news** regarding stability to **Arch Linux news** about bleeding-edge kernels, the Linux ecosystem provides the foundation. We will look at how to leverage this foundation using practical SQL examples, covering schema optimization, transaction safety, and indexing strategies that complement a high-availability (HA) Linux environment.

Section 1: Architecting MySQL for Resilience on Linux

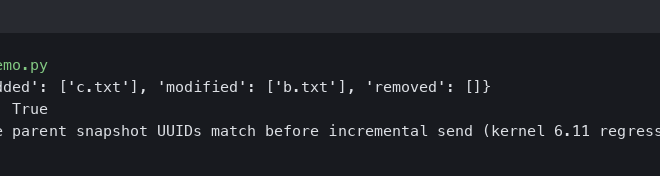

To achieve a state where maintenance does not equal downtime, the underlying architecture must be robust. This begins with the file system and storage layer. In recent **Linux filesystems news**, the debate between **ext4**, **XFS**, and **ZFS** continues. For MySQL workloads, XFS is often preferred on **CentOS** and other RHEL derivatives due to its handling of large files and parallel I/O. **Btrfs** snapshot support is also maturing, which can aid near-instant backups.

When configuring MySQL on Linux, understanding the interaction between the database and the OS kernel is vital. **Linux kernel news** frequently discusses scheduler improvements and memory management updates that directly impact database performance. A resilient architecture also involves partitioning data so that maintenance operations, such as dropping old data, become fast metadata operations rather than long-running, lock-heavy deletes.

Below is a practical example of designing a schema with partitioning. This approach allows DBAs to manage large datasets efficiently, a crucial aspect of maintaining performance without downtime.

    -- Range partitioning by year: old data can be rotated out with
    -- ALTER TABLE ... DROP PARTITION instead of a long-running DELETE.
    CREATE TABLE server_logs (
        log_id      BIGINT UNSIGNED NOT NULL AUTO_INCREMENT,
        server_name VARCHAR(64) NOT NULL,
        log_level   ENUM('INFO', 'WARN', 'ERROR', 'CRITICAL') NOT NULL,
        message     TEXT,
        created_at  DATETIME NOT NULL,
        -- The partitioning column must be part of every unique key,
        -- which is why created_at is included in the primary key.
        PRIMARY KEY (log_id, created_at),
        INDEX idx_server_time (server_name, created_at)
    ) ENGINE=InnoDB
    PARTITION BY RANGE (YEAR(created_at)) (
        PARTITION p2023 VALUES LESS THAN (2024),
        PARTITION p2024 VALUES LESS THAN (2025),
        PARTITION p2025 VALUES LESS THAN (2026),
        PARTITION p_future VALUES LESS THAN MAXVALUE
    );

    -- Verify partition pruning: the "partitions" column should list only p2024.
    -- A half-open range is used because BETWEEN ... AND '2024-01-31' would stop
    -- at midnight and miss the rest of January 31 on a DATETIME column.
    EXPLAIN SELECT * FROM server_logs
    WHERE created_at >= '2024-01-01' AND created_at < '2024-02-01';

By using partitioning, you reduce I/O overhead and keep the system responsive, in line with Linux administration best practices. This is particularly relevant on cloud infrastructure such as **AWS** and **Google Cloud**, where IOPS are a billed resource.
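The yearly rotation implied by this schema can be scripted. The following is a minimal sketch, assuming the partition naming used above (`pYYYY` plus a catch-all `p_future`); the helper name `rotation_statements` is hypothetical, and the function only builds the DDL strings so they can be reviewed before being run against a server.

```python
import re
from typing import List, Optional

_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def rotation_statements(table: str, new_year: int,
                        drop_year: Optional[int] = None) -> List[str]:
    """Build MySQL DDL that adds a yearly partition and optionally drops an old one.

    Assumes the table is RANGE-partitioned on YEAR(...) with partitions
    named pYYYY and a final catch-all partition called p_future.
    """
    # Identifiers cannot be bound as query parameters, so validate the name.
    if not _IDENTIFIER.fullmatch(table):
        raise ValueError(f"unsafe table name: {table!r}")

    # Split the catch-all partition so that new_year gets its own partition.
    add = (
        f"ALTER TABLE {table} REORGANIZE PARTITION p_future INTO ("
        f"PARTITION p{new_year} VALUES LESS THAN ({new_year + 1}), "
        f"PARTITION p_future VALUES LESS THAN MAXVALUE)"
    )
    statements = [add]

    # Dropping a partition discards its rows without a row-by-row DELETE.
    if drop_year is not None:
        statements.append(f"ALTER TABLE {table} DROP PARTITION p{drop_year}")
    return statements
```

Calling `rotation_statements("server_logs", 2026, drop_year=2023)` returns two statements: one that carves `p2026` out of `p_future`, and one that drops `p2023`. Keeping `p_future` in place means rows with unexpected dates still have somewhere to land.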

Furthermore, the choice of Linux distribution impacts your maintenance strategy. **Fedora** often previews features that eventually land in Enterprise Linux. If you are using **Oracle Linux**, you might be running the Unbreakable Enterprise Kernel (UEK), which Oracle tunes for database workloads. Regardless of the distro, be it **Linux Mint** for local dev or **SUSE Linux** for enterprise SAP workloads, the principle of decoupling data management from OS maintenance is key.

Section 2: Security, Live Patching, and Access Control

The driving force behind the push for live patching is security. **Linux security news** is filled with reports of vulnerabilities (CVEs) in the kernel itself and in userspace libraries such as glibc and OpenSSL. In a traditional setup, a kernel fix requires a reboot, and a library fix requires restarting every process that has the old library loaded. Kernel live patching technologies such as kpatch (Red Hat), kGraft (SUSE), the upstream kernel livepatch framework, and third-party services allow kernel code to be swapped in memory without halting processes. Kernel live patching covers the kernel only: a patched glibc or OpenSSL takes effect in `mysqld` after the process is restarted, unless a userspace live patching service is in use.

For MySQL, this means the database process (`mysqld`) continues serving queries while the kernel is patched underneath it. This is a game-changer for **DevOps** and **SRE** teams. However, live patching the OS is only half the battle. You must also secure the database layer itself.
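Because a running process keeps using the library files it loaded at startup, it helps to know when `mysqld` is still mapped to a library that a package update has since replaced. On Linux, `/proc/<pid>/maps` marks such mappings with a trailing `(deleted)`. The sketch below is illustrative only: the function names are hypothetical, and reading another process's maps file usually requires root or the same user.

```python
from pathlib import Path
from typing import List

_DELETED = " (deleted)"


def stale_libraries(maps_text: str) -> List[str]:
    """Return shared libraries that are mapped but were replaced on disk.

    maps_text is the content of /proc/<pid>/maps. Each line has the fields
    address, perms, offset, dev, inode and an optional pathname.
    """
    stale = set()
    for line in maps_text.splitlines():
        fields = line.split(None, 5)
        if len(fields) < 6:
            continue  # anonymous mapping, no pathname
        path = fields[5].rstrip()
        if path.endswith(_DELETED):
            path = path[: -len(_DELETED)]
            if ".so" in path:  # only report shared objects
                stale.add(path)
    return sorted(stale)


def stale_libraries_for_pid(pid: int) -> List[str]:
    """Convenience wrapper that reads the maps file of a live process."""
    return stale_libraries(Path(f"/proc/{pid}/maps").read_text())
```

A non-empty result for the `mysqld` PID suggests that a library update is installed but not yet in effect for the database, which is the case that still calls for a planned restart or failover.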

Implementing “Least Privilege” is a standard covered by Linux certifications such as **RHCSA** and **CompTIA Linux+**. You should never run your application as root, and your database users should have granular permissions. This minimizes the blast radius if a vulnerability is exploited before a live patch is applied.

Here is an example of setting up a secure, limited-access user for a specific microservice, a common pattern in **Docker** and **Kubernetes** environments:

    -- Best practice: create a dedicated user with limited scope.
    -- Avoid using 'root' for application connections.
    -- The password below is a placeholder; generate a unique secret instead.
    CREATE USER 'app_service_user'@'10.0.0.%' IDENTIFIED BY 'Str0ng_P@ssw0rd!';

    -- Grant only the necessary privileges on the specific database
    GRANT SELECT, INSERT, UPDATE, DELETE ON app_production_db.* TO 'app_service_user'@'10.0.0.%';

    -- A new account holds nothing beyond what was granted above, so no REVOKE
    -- is needed. Confirm that ALTER, DROP and GRANT OPTION are absent:
    SHOW GRANTS FOR 'app_service_user'@'10.0.0.%';

    -- Security audit: list accounts without a password.
    -- The plugin column is included because socket-authenticated accounts
    -- legitimately have an empty authentication_string.
    SELECT user, host, plugin
    FROM mysql.user
    WHERE authentication_string = '' OR authentication_string IS NULL;

    -- Enforce a password expiration policy
    ALTER USER 'app_service_user'@'10.0.0.%' PASSWORD EXPIRE INTERVAL 90 DAY;

Combining these SQL practices with Linux firewall tools like **iptables** or **nftables** creates a defense-in-depth strategy. Even if the host is live-patched, network segmentation, for example through a **WireGuard** or **OpenVPN** tunnel, helps ensure that only authorized traffic reaches the database port.
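The audit for excessive privileges can be sketched on the application side by inspecting the text that `SHOW GRANTS` returns. This is a rough illustration, not a complete parser: the allow-list and the function name `excessive_privileges` are assumptions, role grants are skipped, and the grant lines are passed in as plain strings so that no particular MySQL driver is implied.

```python
import re
from typing import Iterable, List, Tuple

# Privileges the application account is expected to hold (assumed policy).
ALLOWED = frozenset({"USAGE", "SELECT", "INSERT", "UPDATE", "DELETE"})

_GRANT_RE = re.compile(r"^GRANT (?P<privs>.+?) ON (?P<scope>.+?) TO ", re.IGNORECASE)


def excessive_privileges(grant_lines: Iterable[str],
                         allowed: Iterable[str] = ALLOWED) -> List[Tuple[str, str]]:
    """Return (scope, privilege) pairs that fall outside the allow-list."""
    allowed_set = {p.upper() for p in allowed}
    findings = []
    for raw in grant_lines:
        line = raw.strip()
        match = _GRANT_RE.match(line)
        if not match:
            continue  # e.g. a role grant, which has no ON clause
        # Drop column lists such as SELECT (`col_a`, `col_b`) before splitting.
        privs_text = re.sub(r"\([^)]*\)", "", match.group("privs"))
        privs = {p.strip().upper() for p in privs_text.split(",") if p.strip()}
        extra = privs - allowed_set
        if line.upper().endswith("WITH GRANT OPTION"):
            extra.add("GRANT OPTION")
        findings.extend((match.group("scope"), p) for p in sorted(extra))
    return findings
```

Feeding it the rows returned by `SHOW GRANTS FOR 'app_service_user'@'10.0.0.%'` should yield an empty list for the account created above; any entry in the result is a privilege worth reviewing.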

Section 3: Advanced Transactions and High Availability

![Xfce desktop screenshot - xfce:4.12:getting-started [Xfce Docs]](https://linux-digest.com/wp-content/uploads/2025/12/inline_84dfb139.jpg)

Kernel live patching covers the OS layer, but what about logical data updates? In Linux development, CI/CD (Continuous Integration/Continuous Deployment) often involves database migrations, and pipelines built on **Jenkins** or **GitLab CI** automate these schema changes.

To ensure zero downtime during these logical updates, DBAs utilize online DDL (Data Definition Language) features in MySQL and wrap data modifications in robust transactions. This is essential in **Linux banking** or **Linux ecommerce** sectors where data consistency is paramount.

Furthermore, high availability often relies on clustering. **Linux clustering news** frequently mentions **Pacemaker** and **Corosync** for managing resources. In a modern MySQL context, this role is often filled by **Orchestrator** or **Galera Cluster**. When a node *does* need to go down (perhaps for a major version upgrade that live patching can’t handle), the system must fail over seamlessly.

The following code demonstrates a transactional approach to data manipulation that ensures integrity, which is critical when nodes might be switching roles in a virtualized environment such as **KVM** or **Proxmox**.
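From the application's point of view, a failover usually appears as a dropped connection followed by a short period in which reconnects fail. One common way to absorb this is to retry the whole unit of work with exponential backoff. The sketch below is a generic illustration: `TransientDBError` is a hypothetical stand-in for whatever connection-lost exception your driver raises, and `operation` is assumed to open its own connection and run a complete transaction.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientDBError(Exception):
    """Hypothetical stand-in for a driver's 'connection lost' error."""


def run_with_retry(operation: Callable[[], T], attempts: int = 5,
                   base_delay: float = 0.2,
                   sleep: Callable[[float], None] = time.sleep) -> T:
    """Run operation, retrying on transient errors with exponential backoff."""
    for attempt in range(attempts):
        try:
            return operation()
        except TransientDBError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # Wait 0.2s, 0.4s, 0.8s, ... so the new primary has time to appear.
            sleep(base_delay * (2 ** attempt))
    raise ValueError("attempts must be at least 1")
```

Retrying is only safe when the operation is a complete transaction that can be repeated. If the connection drops while a `COMMIT` is in flight, the client cannot tell whether it was applied, so writes such as payments also need an idempotency key or a lookup before the retry.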

    -- Transactional integrity example: funds transfer
    -- Relies on InnoDB's ACID guarantees (Atomicity, Consistency, Isolation, Durability)
    START TRANSACTION;

    -- Step 1: Deduct from sender
    UPDATE accounts
    SET balance = balance - 100.00
    WHERE account_id = 54321
    AND balance >= 100.00; -- Guard: debit only when funds are sufficient

    -- Check whether the update actually affected a row.
    -- In application code (Python/Go/PHP), you would check row_count here.
    -- If row_count == 0, ROLLBACK immediately.

    -- Step 2: Add to receiver
    UPDATE accounts
    SET balance = balance + 100.00
    WHERE account_id = 98765;

    -- Step 3: Log the transaction for audit (crucial for later forensics)
    INSERT INTO transaction_logs (source_id, dest_id, amount, status, log_time)
    VALUES (54321, 98765, 100.00, 'COMPLETED', NOW());

    -- Finalize
    COMMIT;

This transactional safety is vital when running on distributed storage systems such as **Ceph** or **GlusterFS**. If a network partition occurs (a topic common in **Linux networking news**), the database must either commit fully or roll back fully to prevent data corruption.
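The row-count check mentioned in the SQL comments lives in application code. The sketch below shows that control flow with Python's DB-API. To stay runnable without a server it uses the standard-library `sqlite3` module as a stand-in for a MySQL driver, which is an assumption of this example; MySQL drivers use a different parameter placeholder style (typically `%s`) and their own connection setup. Amounts are stored as integer cents here to avoid floating-point rounding.

```python
import sqlite3


def transfer(conn: sqlite3.Connection, source_id: int, dest_id: int,
             amount_cents: int) -> bool:
    """Move funds atomically. Returns False (and rolls back) if a guard fails."""
    cur = conn.cursor()
    try:
        # Step 1: debit only if the sender has sufficient funds.
        # The first write implicitly opens a transaction.
        cur.execute(
            "UPDATE accounts SET balance = balance - ? "
            "WHERE account_id = ? AND balance >= ?",
            (amount_cents, source_id, amount_cents),
        )
        if cur.rowcount == 0:  # unknown sender or insufficient funds
            conn.rollback()
            return False

        # Step 2: credit the receiver; an unknown receiver aborts the transfer.
        cur.execute(
            "UPDATE accounts SET balance = balance + ? WHERE account_id = ?",
            (amount_cents, dest_id),
        )
        if cur.rowcount == 0:
            conn.rollback()
            return False

        # Step 3: audit record, committed together with both updates.
        cur.execute(
            "INSERT INTO transaction_logs (source_id, dest_id, amount, status) "
            "VALUES (?, ?, ?, 'COMPLETED')",
            (source_id, dest_id, amount_cents),
        )
        conn.commit()
        return True
    except Exception:
        conn.rollback()  # any error leaves balances untouched
        raise
```

The function expects `accounts(account_id, balance)` and `transaction_logs(source_id, dest_id, amount, status)` tables to exist. Because the debit, credit, and log entry share one transaction, a failure at any step leaves no partial transfer behind.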

Section 4: Performance Tuning, Monitoring, and Best Practices

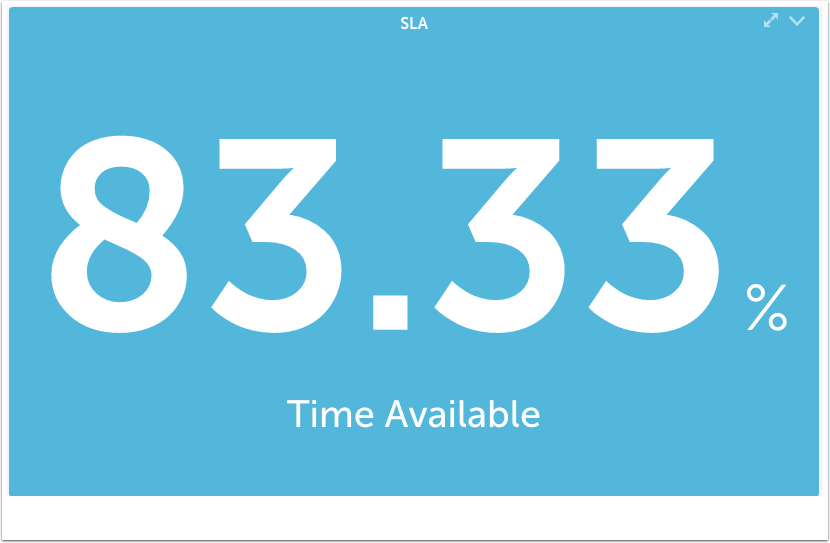

Even with live patching and HA setups, a poorly performing query can bring a server to its knees just as effectively as a power outage. **Linux performance news** often highlights the importance of observability tools. Integrating MySQL with **Prometheus** and **Grafana** provides real-time insight into query latency, buffer pool usage, and disk I/O.

For developers building backends in **Python** or **Go**, understanding how the database executes your queries is mandatory. The `EXPLAIN` statement is your primary tool here. It shows how MySQL plans to read from the storage engine, whose real-world performance is heavily influenced by the underlying Linux OS configuration (swappiness, huge pages, etc.).

Here is an example of analyzing a query and optimizing it with an index, a fundamental skill for anyone following **Linux database news**:

    -- Scenario: slow search on a user email column
    -- Original query analysis. EXPLAIN ANALYZE requires MySQL 8.0.18 or later
    -- and actually executes the query. The address is a placeholder.
    EXPLAIN ANALYZE SELECT id, username, email
    FROM users
    WHERE email = 'user@example.com';

    -- If the above shows "Table scan", we need an index.
    -- Build the index online so concurrent reads and writes stay allowed.
    -- If the server cannot honor LOCK=NONE, the statement fails with an
    -- error instead of silently taking a stronger lock.
    ALTER TABLE users
    ADD INDEX idx_email (email),
    ALGORITHM=INPLACE,
    LOCK=NONE;

    -- Re-run the analysis to verify the performance gain.
    -- The output should now indicate "Index lookup" instead of "Table scan".
    EXPLAIN ANALYZE SELECT id, username, email
    FROM users
    WHERE email = 'user@example.com';

Best Practices for the Modern Linux DBA

1. **Automate Everything:** Use tools such as **Ansible** or **Terraform** to provision and configure your database servers. This ensures consistency across your **Dev**, **Stage**, and **Prod** environments.

2. **Backup Strategies:** Follow **Linux backup news** closely. Tools like **Percona XtraBackup** or **Mariabackup** allow for hot backups. Combine these with **rsync** or **BorgBackup** for offsite storage.

3. **Kernel Tuning:** Lower `vm.swappiness` so the kernel is less inclined to swap out database memory, and disable Transparent Huge Pages (THP) for MySQL workloads. Keep an eye on **Linux memory management news** for updates on how the kernel handles dirty pages.

4. **Stay Updated:** Whether you use **apt**, **dnf**, or **pacman**, keep your package manager configured to pull security updates. If you cannot reboot, investigate live patching services.

5. **Containerization:** If you run the database under **Podman** or **Docker Swarm**, ensure your database data persists on volumes and that you have resource limits (cgroups) configured correctly to prevent the DB from consuming all host memory.
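The kernel tuning item above can be verified with a small host check. This is a minimal sketch under stated assumptions: the swappiness threshold of 10 is an illustrative policy rather than a MySQL requirement, the function names are hypothetical, and the two paths are the standard Linux locations for these settings.

```python
from pathlib import Path
from typing import List, Tuple

SWAPPINESS_PATH = Path("/proc/sys/vm/swappiness")
THP_PATH = Path("/sys/kernel/mm/transparent_hugepage/enabled")


def active_thp_mode(text: str) -> str:
    """Parse a line like 'always madvise [never]' and return the active mode."""
    for token in text.split():
        if token.startswith("[") and token.endswith("]"):
            return token[1:-1]
    raise ValueError(f"no active mode found in {text!r}")


def tuning_warnings(swappiness: int, thp_mode: str,
                    max_swappiness: int = 10) -> List[str]:
    """Return human-readable warnings for settings outside the assumed policy."""
    warnings = []
    if swappiness > max_swappiness:
        warnings.append(
            f"vm.swappiness is {swappiness}; consider {max_swappiness} or lower"
        )
    if thp_mode != "never":
        warnings.append(f"Transparent Huge Pages mode is '{thp_mode}', not 'never'")
    return warnings


def read_host_settings() -> Tuple[int, str]:
    """Read the current values from the running kernel (Linux only)."""
    swappiness = int(SWAPPINESS_PATH.read_text())
    thp_mode = active_thp_mode(THP_PATH.read_text())
    return swappiness, thp_mode
```

On a database host, `tuning_warnings(*read_host_settings())` returns an empty list when both settings match the policy. The same check fits naturally into a configuration management run or a monitoring probe.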

Conclusion

The landscape of **MySQL Linux news** is shifting towards a model where downtime is an anomaly rather than a scheduled event. The convergence of **Linux kernel live patching**, advanced database replication technologies, and sophisticated orchestration tools allows organizations to maintain high security standards without sacrificing availability.

From the desktop enthusiast reading **KDE Plasma news** or **GNOME news** to the enterprise architect focused on **Azure Linux news**, the principles remain the same: robust architecture, defense-in-depth security, and rigorous performance tuning. By leveraging the power of the Linux command line, mastering tools from **grep** to **htop**, and combining them with deep SQL knowledge, administrators can build database systems that are as resilient as the Linux kernel itself.

As we look to the future, with **Linux AI news** and **Linux edge computing news** gaining traction, the database will continue to be the heart of the infrastructure. Ensuring that heart keeps beating, even during maintenance, is the ultimate challenge and triumph of the modern Linux professional.