Istio’s Latest Evolution: Embracing Gateway API, Ambient Mesh, and Simplified Multi-Cluster Management

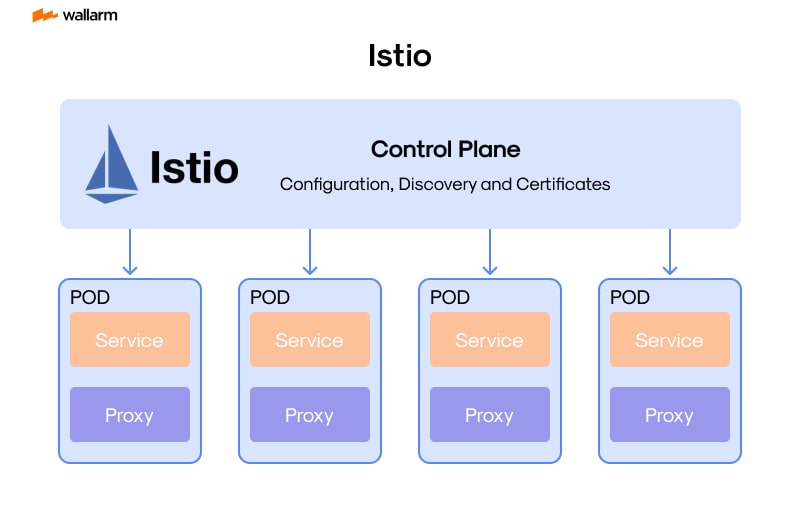

In the dynamic world of cloud-native computing and microservices, Istio has long stood as a cornerstone technology, providing a robust and feature-rich service mesh for managing complex application networks. For DevOps professionals, SREs, and platform architects working within the Linux ecosystem, keeping pace with Istio’s evolution is crucial for building resilient, secure, and observable systems. The latest developments in Istio represent a significant leap forward, focusing on standardization, operational simplicity, and performance. This isn’t just an incremental update; it’s a strategic enhancement that addresses key community feedback and solidifies Istio’s position as a leader in the service mesh space.

This article delves into the most impactful recent changes in Istio. We’ll explore the official adoption of the Kubernetes Gateway API, the maturation of the sidecarless Ambient Mesh, drastically simplified integration for virtual machines and multi-cluster topologies, and powerful new security configurations. These updates are not merely theoretical; they have profound implications for anyone managing services on Kubernetes, impacting everything from traffic management and security posture to resource consumption. This is essential reading for anyone following Istio and the broader Kubernetes and DevOps landscape on Linux.

The Gateway API Comes of Age in Istio

For years, managing ingress traffic in Kubernetes has been a point of friction. The original Ingress API, while functional for simple use cases, lacked the expressiveness needed for complex routing, advanced traffic splitting, and fine-grained TLS configuration that modern microservice architectures demand. This led to a proliferation of vendor-specific annotations and custom resources. The Kubernetes Gateway API is the community-driven successor, designed to solve these problems with a flexible, role-oriented, and highly extensible model. Istio’s full-fledged support for this API is a game-changer.

From Ingress to a Role-Oriented Future

The Gateway API decouples the definition of infrastructure from application routing. This is achieved through a set of distinct resources:

- GatewayClass: A cluster-scoped resource that defines a template for Gateways, often managed by the cluster administrator.

- Gateway: Deployed by a platform or infrastructure team, this resource requests a listening point on the network (like a load balancer) and configures ports and TLS settings.

- HTTPRoute (or other route types): Managed by application developers, this resource binds to a Gateway and defines the rules for routing traffic to specific services based on hostnames, paths, headers, and more.

This separation of concerns is a massive win for organizational scaling. Infrastructure teams can manage the lifecycle of load balancers and certificates via the Gateway, while application teams can safely manage their own traffic rules via HTTPRoute without needing cluster-wide permissions. This aligns well with modern GitOps workflows, where tools like Argo CD or Flux manage these declarative configurations as part of a CI/CD pipeline.
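To make the first of these roles concrete, here is a minimal sketch of a GatewayClass that points at Istio's gateway controller. Istio normally creates a class named `istio` on its own when the Gateway API CRDs are installed, so this manifest is shown for illustration rather than as something you must apply; the `v1beta1` API version is chosen to match the other examples in this article, and newer Gateway API releases also serve `v1`.

```yaml
# Cluster-scoped template that Gateways reference via gatewayClassName.
apiVersion: gateway.networking.k8s.io/v1beta1
kind: GatewayClass
metadata:
  name: istio
spec:
  # Identifies which controller reconciles Gateways of this class.
  controllerName: istio.io/gateway-controller
```

A Gateway then opts into this class by name, which is what keeps the infrastructure choice separate from the routes that application teams attach later.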

Practical Implementation: Configuring a Gateway

Let’s see how this works in practice. Here is an example of exposing a simple application using the Gateway API with Istio. First, the Gateway resource defines the entry point, listening on port 80 and accepting routes only from the default namespace.

```yaml
apiVersion: gateway.networking.k8s.io/v1beta1
kind: Gateway
metadata:
  name: my-app-gateway
  namespace: istio-ingress
spec:
  gatewayClassName: istio
  listeners:
  - name: http
    port: 80
    protocol: HTTP
    allowedRoutes:
      namespaces:
        from: Selector
        selector:
          matchLabels:
            kubernetes.io/metadata.name: default
```

Next, an application team in the default namespace can create an HTTPRoute to direct traffic for myapp.example.com to their service.

```yaml
apiVersion: gateway.networking.k8s.io/v1beta1
kind: HTTPRoute
metadata:
  name: my-app-route
  namespace: default
spec:
  # Attach this route to the Gateway owned by the platform team.
  parentRefs:
  - name: my-app-gateway
    namespace: istio-ingress
  hostnames: ["myapp.example.com"]
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /
    # Send all matching requests to the application's Service.
    backendRefs:
    - name: my-app-service
      port: 8080
```

This declarative approach is clean, portable, and aligns with the future of Kubernetes networking. It simplifies automation with tools like Ansible or Terraform, as the API is standardized across different implementations.
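The traffic splitting mentioned earlier uses the same resource. As a sketch, the variant of the route below sends roughly 90% of requests to the existing service and 10% to a canary. The second backend, `my-app-service-v2`, is a hypothetical Service introduced only for this illustration.

```yaml
apiVersion: gateway.networking.k8s.io/v1beta1
kind: HTTPRoute
metadata:
  name: my-app-route
  namespace: default
spec:
  parentRefs:
  - name: my-app-gateway
    namespace: istio-ingress
  hostnames: ["myapp.example.com"]
  rules:
  # A rule without "matches" applies to every path on the hostname.
  - backendRefs:
    # Weights are relative to their sum, so 90 and 10 give a 90/10 split.
    - name: my-app-service
      port: 8080
      weight: 90
    # Hypothetical canary Service; replace with your own.
    - name: my-app-service-v2
      port: 8080
      weight: 10
```

Because the weights live in the route, shifting more traffic to the canary is a one-line change that the application team can make without touching the Gateway.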

Ambient Mesh: The Sidecarless Future Matures

The traditional sidecar proxy model, where an Envoy proxy is injected into every application pod, has been the foundation of Istio’s power. However, it’s not without its drawbacks: resource overhead, complex pod lifecycle management, and traffic interception complexities. Ambient Mesh is Istio’s innovative answer—a sidecarless architecture that promises to reduce operational complexity and resource footprint without sacrificing core service mesh capabilities.

Architecture Recap: Ztunnels and Waypoint Proxies

Ambient Mesh splits functionality into two main components:

- Ztunnel: A per-node agent (running as a DaemonSet) that handles all L4 traffic for pods on that node. It provides secure overlay networking, mTLS, and L4 observability and authorization policies. This provides a zero-trust baseline with minimal overhead.

- Waypoint Proxy: An optional, on-demand L7 Envoy proxy. Early Ambient releases deployed waypoints per service account, while more recent releases typically scope them to a namespace or service. When a service requires advanced L7 policies (like HTTP-based routing, retries, or fault injection), a Waypoint proxy is provisioned to handle that traffic.

This layered approach means that services that only need mTLS and basic network security don’t pay the resource cost of a full L7 proxy. Recent updates have focused on hardening this architecture, improving performance, and bringing its feature set closer to parity with the sidecar model, making it a viable option for production workloads. This is a significant development for container platforms because it rethinks the fundamental unit of service mesh deployment.
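In recent Istio releases a waypoint is itself declared as a Gateway API resource, which makes the layering easy to see. The manifest below is a sketch under that assumption; the name `waypoint` and the `default` namespace are illustrative, and the exact labels can vary between releases, so compare it with what your version of `istioctl` generates before relying on it.

```yaml
apiVersion: gateway.networking.k8s.io/v1beta1
kind: Gateway
metadata:
  name: waypoint            # illustrative name
  namespace: default        # illustrative namespace
  labels:
    # Process traffic addressed to Services (as opposed to individual workloads).
    istio.io/waypoint-for: service
spec:
  # Selects Istio's waypoint implementation rather than an ingress gateway.
  gatewayClassName: istio-waypoint
  listeners:
  - name: mesh
    port: 15008             # HBONE tunnel port used between ztunnel and waypoints
    protocol: HBONE
```

Namespaces or services that need L7 handling are then pointed at the waypoint with the `istio.io/use-waypoint` label; everything else continues to use only the ztunnel.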

Enabling and Securing an Ambient Namespace

Getting started with Ambient Mesh is straightforward. You can enable it for a specific namespace with a simple label:

```shell
kubectl label namespace default istio.io/dataplane-mode=ambient
```
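If namespaces are managed through Git rather than imperative commands, the same setting can be expressed declaratively. This is a minimal sketch that assumes the `default` namespace from the command above.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: default
  labels:
    # Same effect as the kubectl label command: enroll pods in Ambient mode.
    istio.io/dataplane-mode: ambient
```

Keeping the label in the manifest means enrollment in the mesh is reviewed and versioned like any other configuration change.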

Once enabled, the ztunnel on each node will automatically handle traffic for pods in that namespace, establishing mTLS connections. You can then apply L4 authorization policies. For example, the following policy allows traffic from the frontend service account to workloads labeled app: backend, enforced by the ztunnel.

```yaml
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: backend-access-control
  namespace: default
spec:
  # Applies to workloads carrying the app=backend label.
  selector:
    matchLabels:
      app: backend
  action: ALLOW
  rules:
  - from:
    - source:
        # Identity taken from the peer's mTLS certificate.
        principals:
        - "cluster.local/ns/default/sa/frontend"
```

This provides a strong security posture at the network layer, complementing other Linux security mechanisms like SELinux or AppArmor.

Simplifying the Hybrid World: VMs and Multi-Cluster Integration

Few enterprises operate exclusively on Kubernetes. A common reality is a hybrid environment with critical services running on traditional virtual machines across various Linux distributions like Ubuntu, Red Hat Enterprise Linux, or Debian. Integrating these VMs into the service mesh has historically been a complex and manual process. Recent Istio updates have dramatically simplified this, making a unified mesh across Kubernetes and VMs a practical reality.

Bringing Virtual Machines into the Mesh

The new approach streamlines the process of bootstrapping a VM to join the Istio mesh. It simplifies the generation of configuration and identity tokens, reducing the manual steps required by an administrator. Once a VM is part of the mesh, it behaves like any other workload. It gets a cryptographic identity via SPIFFE, participates in mTLS, and can be targeted by Istio’s traffic management and security policies.
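One piece of that bootstrap flow is the WorkloadGroup resource, which acts as a template for the VMs that will join the mesh. The sketch below is illustrative only: the group name, namespace, label, and service account are assumptions chosen for this example, not values mandated by Istio.

```yaml
apiVersion: networking.istio.io/v1alpha3
kind: WorkloadGroup
metadata:
  name: legacy-database     # illustrative name
  namespace: default        # illustrative namespace
spec:
  # Labels copied onto every workload entry created from this group.
  metadata:
    labels:
      app: legacy-database
  # Shared settings for each VM instance in the group.
  template:
    serviceAccount: legacy-db-sa
```

Describing the group once lets each VM inherit the same labels and identity, which is where much of the reduction in manual steps comes from.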

Example: Registering a VM Workload

To make a service running on a VM discoverable within the Kubernetes-based mesh, you create WorkloadEntry and ServiceEntry resources. The ServiceEntry defines a new service in Istio’s internal registry, while the WorkloadEntry associates the VM’s IP address and identity with that service.

```yaml
apiVersion: networking.istio.io/v1alpha3
kind: ServiceEntry
metadata:
  name: legacy-database-vm
spec:
  hosts:
  - "legacy-db.internal"
  ports:
  - number: 5432
    name: tcp-postgres
    protocol: TCP
  resolution: STATIC
  location: MESH_INTERNAL
  # Selects the WorkloadEntry below by label. Without a selector (or explicit
  # endpoints), a STATIC ServiceEntry has no backends to route to.
  workloadSelector:
    labels:
      app: legacy-database
---
apiVersion: networking.istio.io/v1alpha3
kind: WorkloadEntry
metadata:
  name: vm-postgres-instance-1
spec:
  address: 192.168.10.5 # IP of the VM
  labels:
    app: legacy-database
    security.istio.io/tlsMode: istio
  serviceAccount: legacy-db-sa
```

With this configuration, a pod in your Kubernetes cluster can now securely connect to legacy-db.internal:5432, and Istio will automatically handle mTLS encryption and policy enforcement. This is invaluable for modernizing legacy applications, including workloads that run on hypervisors such as KVM and Proxmox.

Hardening the Mesh: Advanced Security and Best Practices

Security remains a primary driver for adopting a service mesh. Istio continues to enhance its security capabilities, providing fine-grained control over service-to-service communication and helping organizations implement a zero-trust network architecture.

Fine-Grained Authorization with JWTs

Istio’s AuthorizationPolicy is incredibly powerful. Beyond simple service-to-service rules, it can enforce policies based on the properties of a JSON Web Token (JWT) presented by the client. This is essential for securing APIs and implementing user-level authentication and authorization.

The following example demonstrates an AuthorizationPolicy that only allows access to the billing-api if the request includes a valid JWT containing a specific claim, such as membership in the ‘finance’ group.

```yaml
apiVersion: security.istio.io/v1
kind: AuthorizationPolicy
metadata:
  name: billing-api-jwt-policy
  namespace: services
spec:
  selector:
    matchLabels:
      app: billing-api
  action: ALLOW
  rules:
  - to:
    - operation:
        methods: ["GET", "POST"]
        paths: ["/v1/billing/*"]
    # Only requests whose validated JWT carries groups=finance match this rule.
    when:
    - key: request.auth.claims[groups]
      values: ["finance"]
```

Note that an AuthorizationPolicy only inspects the claims of a token that has already been validated, so this policy assumes a RequestAuthentication resource is configured for the same workload. This level of control, enforced by the Envoy proxy, offloads complex authorization logic from the application code, making services simpler and more secure. It’s a powerful tool that complements traditional Linux firewalls such as iptables and nftables by operating at Layer 7.
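For the claim check to have anything to evaluate, the proxy must first know how to validate incoming tokens. A minimal sketch of the companion RequestAuthentication might look like the following; the issuer and JWKS URLs are placeholders for your own identity provider, not real endpoints.

```yaml
apiVersion: security.istio.io/v1
kind: RequestAuthentication
metadata:
  name: billing-api-jwt     # illustrative name
  namespace: services
spec:
  selector:
    matchLabels:
      app: billing-api
  jwtRules:
  # Placeholder issuer and key set; substitute your identity provider's values.
  - issuer: "https://issuer.example.com"
    jwksUri: "https://issuer.example.com/.well-known/jwks.json"
```

On its own, RequestAuthentication rejects requests with invalid tokens but still admits requests that carry no token at all. It is the ALLOW policy with its claim condition that turns a missing or insufficient token into a denial.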

Best Practices for a Secure Mesh

- Enforce Strict mTLS: Use a mesh-wide `PeerAuthentication` policy to enforce strict mutual TLS, ensuring that all traffic within the mesh is encrypted and authenticated by default.

- Use `istioctl analyze`: Regularly run `istioctl analyze` in your CI/CD pipeline to proactively detect security misconfigurations and potential vulnerabilities in your Istio resources.

- Least Privilege: Apply the principle of least privilege to your `AuthorizationPolicy` resources. Start with a default-deny policy and explicitly allow required communication paths.

- Monitor and Audit: Leverage Istio’s deep integration with observability tools like Prometheus and Grafana, as well as tracing tools like Jaeger, to monitor for anomalous traffic patterns and audit access logs.
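The first and third practices can be sketched as two small manifests. This example assumes `istio-system` is the mesh's root namespace (the default for most installations) and uses the `default` namespace for the deny-all policy purely as an illustration.

```yaml
# Mesh-wide strict mTLS: a policy named "default" in the root namespace
# applies to every workload unless a narrower policy overrides it.
apiVersion: security.istio.io/v1
kind: PeerAuthentication
metadata:
  name: default
  namespace: istio-system   # assumed root namespace
spec:
  mtls:
    mode: STRICT
---
# Default-deny for one namespace: an ALLOW policy with no rules matches
# nothing, so all requests are rejected until other policies allow them.
apiVersion: security.istio.io/v1
kind: AuthorizationPolicy
metadata:
  name: allow-nothing
  namespace: default        # illustrative namespace
spec: {}
```

With the deny-all policy in place, each additional ALLOW policy documents exactly one permitted communication path, which keeps the set of allowed flows easy to audit.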

Conclusion

The latest advancements in Istio are a clear signal of the project’s maturity and its commitment to addressing real-world operational challenges. The full embrace of the Kubernetes Gateway API provides a standardized, future-proof foundation for traffic management. The continued development of Ambient Mesh offers a compelling, lower-overhead alternative to the traditional sidecar model, making service mesh adoption more accessible. Furthermore, the simplified experiences for integrating virtual machines and managing multi-cluster deployments directly address the hybrid-cloud reality faced by many organizations.

For anyone in the Linux and open source community, particularly those involved in cloud-native infrastructure, these updates are not to be overlooked. They represent a significant step toward a more usable, performant, and secure service mesh. The next step is to begin experimenting with these features in a non-production environment, explore the official documentation, and consider how they can be leveraged to simplify and secure your own microservice architectures.