Kubernetes 1.29 Deep Dive: What the Latest Release Means for the Linux Ecosystem

The Symbiotic Relationship: How Kubernetes 1.29 Redefines Linux Container Orchestration

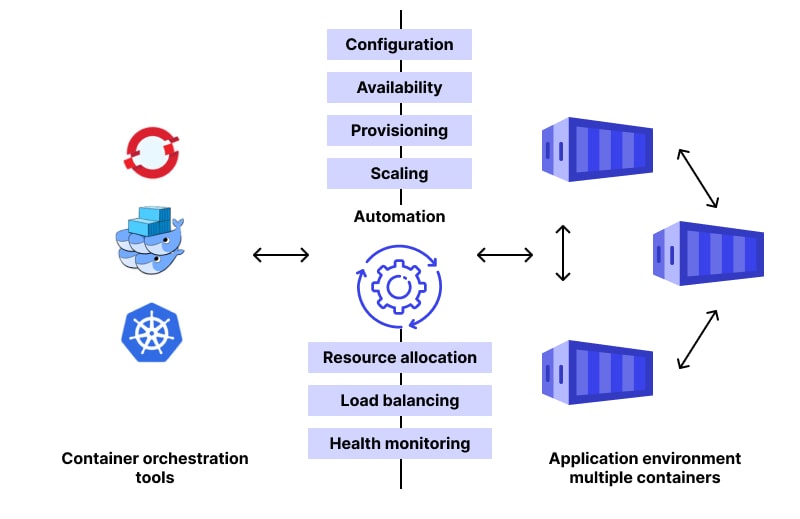

The world of cloud-native computing is in a constant state of evolution, and at its heart lies the powerful duo of Kubernetes and Linux. Each new Kubernetes release brings a wave of features, deprecations, and enhancements that ripple through the entire Linux ecosystem, impacting everything from kernel-level operations to system administration practices. The recent arrival of Kubernetes 1.29, nicknamed “Mandala,” is no exception. This release isn’t just an incremental update; it’s a significant milestone that deepens the integration with underlying Linux technologies, enhances security postures, and streamlines operations for DevOps professionals and system administrators alike.

For Linux professionals following Kubernetes, this version solidifies trends that have been building for years, particularly around security, networking, and the container runtime interface. It moves beyond simply running containers on a Linux host toward using advanced Linux kernel features such as eBPF and seccomp, along with modern filesystems, more natively. This article provides a technical deep dive into Kubernetes 1.29 from a Linux professional's perspective, examining how its changes affect major distributions like Ubuntu, Red Hat, and Debian as well as the tools and practices that define modern Linux DevOps and administration.

Section 1: Core Enhancements and Their Linux Foundations

Kubernetes 1.29 introduces several headline features that are deeply rooted in Linux process and resource management. Understanding these requires looking beneath the Kubernetes API and into the Linux kernel and system services that make it all possible.

Native Sidecar Containers Graduate to Beta

One of the most anticipated features, native sidecar support, has moved to Beta and is now enabled by default. Previously, managing sidecar containers (like logging agents or service mesh proxies) was a clunky process involving workarounds to control startup and shutdown order. This often led to race conditions and complex pod lifecycle management.

With native sidecars, an init container can keep running alongside the main application containers instead of exiting before they start. This behavior is enabled by setting `restartPolicy: Always` on the init container. At the Linux level, this means the kubelet, the primary Kubernetes agent on each node, can manage the lifecycle of these container processes more intelligently: it starts sidecars before the main application containers, restarts them if they exit, and terminates them only after the main containers have stopped. This gives complex application architectures a more reliable foundation, and it simplifies container patterns that were previously difficult to implement reliably.
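Because native sidecars are only on by default from 1.29 (in 1.28 they were alpha, behind the `SidecarContainers` feature gate), it can be worth confirming the control plane's version before relying on `restartPolicy: Always` in init containers. The helpers below are a hypothetical sketch that reads the API server's `/version` endpoint through `kubectl get --raw`. A version check cannot detect a cluster where an administrator has explicitly disabled the gate, and it says nothing about the kubelets on your nodes, which also need to support the feature.

```bash
# Hypothetical helpers: check that the API server reports minor version >= 29,
# the release where native sidecars are enabled by default.
# Assumes kubectl is already configured for the target cluster.

server_minor_version() {
  # /version returns JSON such as {"major": "1", "minor": "29", ...}.
  # Some managed clusters report minors like "29+", so keep only the digits.
  kubectl get --raw /version \
    | sed -n 's/.*"minor": *"\([0-9]*\).*/\1/p'
}

native_sidecars_default_on() {
  local minor
  minor="$(server_minor_version)"
  # Succeeds only when a numeric minor version of 29 or later was found
  [ -n "$minor" ] && [ "$minor" -ge 29 ]
}
```

For example, `native_sidecars_default_on && echo "native sidecars are on by default"` can gate a deployment script.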

Here’s a practical example of a Pod manifest using the new native sidecar functionality. Notice the `restartPolicy` on the `istio-proxy` init container.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: my-app-with-sidecar
spec:
  # The restartPolicy on the init container makes it a sidecar
  initContainers:
  - name: istio-proxy
    image: istio/proxyv2:1.20.0
    args: ["proxy", "sidecar", "..."]
    # This key field enables the sidecar behavior
    restartPolicy: Always
    securityContext:
      runAsUser: 1337
  containers:
  - name: my-main-app
    image: my-app:1.0
    ports:
    - containerPort: 8080
```

Kubelet Evented PLEG for Enhanced Performance

The Pod Lifecycle Event Generator (PLEG) is the kubelet component responsible for detecting container state changes. The default, generic PLEG works by polling: it periodically relists all containers on the node, which adds latency and CPU overhead on nodes running many pods. Evented PLEG instead subscribes to the CRI (Container Runtime Interface) `GetContainerEvents` streaming RPC, so the container runtime (such as containerd or CRI-O) pushes state changes to the kubelet rather than the kubelet repeatedly asking for them. In Kubernetes 1.29 Evented PLEG is a Beta feature but remains disabled by default: it is turned on with the `EventedPLEG` kubelet feature gate and requires a runtime that implements the event stream. Where enabled, it can reduce kubelet CPU usage and make pod status updates more timely. It also highlights the maturing relationship between Kubernetes and Linux-native runtimes as both move toward a more efficient, event-driven architecture.
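To experiment with Evented PLEG on a node, the `EventedPLEG` gate goes under `featureGates` in the kubelet's configuration file (on kubeadm-provisioned nodes this is usually `/var/lib/kubelet/config.yaml`), followed by a kubelet restart. The hypothetical helper below only checks whether a given config file already enables the gate. It uses awk rather than a real YAML parser and assumes the common block-style layout shown in its comment.

```bash
# Hypothetical helper: succeed if a KubeletConfiguration file turns on the
# EventedPLEG feature gate. Assumes block-style YAML like:
#
#   featureGates:
#     EventedPLEG: true
#
# This is a line-based awk check, not a full YAML parser.
evented_pleg_enabled() {
  local config="${1:-/var/lib/kubelet/config.yaml}"
  awk '
    /^featureGates:/ { in_gates = 1; next }    # start of the featureGates map
    in_gates && /^[^ \t#]/ { in_gates = 0 }    # next top-level key ends the map
    in_gates && $1 == "EventedPLEG:" && $2 == "true" { found = 1 }
    END { exit (found ? 0 : 1) }
  ' "$config"
}
```

On a node, `evented_pleg_enabled || echo "EventedPLEG is not enabled"` gives a quick answer before and after editing the file.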

Section 2: Advancements in Networking and Kernel-Level Integration

Kubernetes networking is intrinsically tied to the Linux networking stack. Version 1.29 continues the trend of embracing more modern and powerful kernel technologies, particularly eBPF, and refining existing components like kube-proxy.

The Continued Rise of eBPF and Gateway API

While not a feature of Kubernetes core itself, the ecosystem's rapid adoption of eBPF for networking, observability, and security is heavily influencing development. Projects like Cilium and Calico (in its eBPF dataplane mode) use eBPF to implement Kubernetes networking policies directly within the Linux kernel, bypassing slower and more complex mechanisms like `iptables`. This yields higher performance, better scalability, and more detailed observability. Recent kernel releases have steadily extended eBPF's capabilities, and Kubernetes networking is one of the biggest beneficiaries in the cloud-native space.

Meanwhile, the Gateway API, which is developed by Kubernetes SIG Network outside the core release cycle, reached v1.0 in late 2023, promoting its Gateway, GatewayClass, and HTTPRoute resources to GA in the standard channel. This API provides a more expressive and role-oriented way to manage ingress traffic than the older Ingress API. When paired with an eBPF-based CNI (Container Network Interface) plugin that implements it, the Gateway API can configure advanced traffic routing, load balancing, and security rules on top of an eBPF datapath. This is a significant shift for anyone working on Linux networking for microservices.
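A minimal route illustrates the role-oriented model: a platform team owns the Gateway, while an application team attaches an HTTPRoute to it. The helper below is a sketch that prints such a route using the `gateway.networking.k8s.io/v1` API. The route, Gateway, hostname, and Service names are hypothetical placeholders, and applying the output requires the Gateway API CRDs plus a controller that implements them (Cilium is one option) to be installed.

```bash
# Hypothetical helper: render a minimal HTTPRoute that sends every path on one
# hostname to a backend Service. All default names below are placeholders.
render_httproute() {
  local route="${1:-frontend-route}" gateway="${2:-my-gateway}"
  local hostname="${3:-app.example.com}" service="${4:-my-frontend}" port="${5:-80}"
  cat <<EOF
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: ${route}
spec:
  parentRefs:
  - name: ${gateway}
  hostnames:
  - "${hostname}"
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /
    backendRefs:
    - name: ${service}
      port: ${port}
EOF
}
```

Usage: `render_httproute shop-route my-gateway shop.example.com shop 8080 | kubectl apply -f -`. Generating the manifest from a function keeps the route's shape fixed while letting each team supply its own names.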

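Here is an example of a `CiliumNetworkPolicy` that enforces a layer 7 (HTTP) rule. Cilium's eBPF programs handle identity-based filtering in the kernel and redirect traffic that matches an L7 rule to Cilium's Envoy-based proxy, which checks the HTTP method and path. Plain `iptables`, which matches on layer 3 and layer 4 fields, was not designed to express HTTP-level rules like this.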

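```yaml
apiVersion: "cilium.io/v2"
kind: CiliumNetworkPolicy
metadata:
  name: "api-visibility-rule"
spec:
  # Applies to pods labeled app=my-frontend
  endpointSelector:
    matchLabels:
      app: my-frontend
  # Once an egress rule selects these pods, all other egress traffic from them
  # (including DNS lookups) is denied unless another rule allows it
  egress:
  - toEndpoints:
    - matchLabels:
        app: my-backend-api
    toPorts:
    - ports:
      - port: "9090"
        protocol: TCP
      # L7 rule: only GET requests matching this path are allowed
      rules:
        http:
        - method: "GET"
          path: "/api/v1/data"
```

Refining kube-proxy and IPv4/IPv6 Dual-Stack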

The `kube-proxy` component, which implements Service networking on each node, is also evolving. Kubernetes 1.29 introduces an alpha nftables backend for kube-proxy. nftables has already replaced `iptables` as the default packet-filtering framework in many modern distributions, such as Debian and Fedora, so this backend aligns kube-proxy with where the Linux networking stack is heading. Meanwhile, IPv4/IPv6 dual-stack networking, generally available since Kubernetes 1.23, continues to mature. This is critical for organizations transitioning their Linux servers to IPv6.
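To see which backend a node is actually using, kube-proxy reports its active mode as plain text on the `/proxyMode` path of its metrics endpoint (by default `127.0.0.1:10249`, so the check runs on the node itself). Trying the nftables backend in 1.29 means setting `mode: nftables` in the kube-proxy configuration and enabling the alpha `NFTablesProxyMode` feature gate. The sketch below wraps that check alongside a query of a Service's IP family policy; both function names are hypothetical.

```bash
# Hypothetical helpers for checking kube-proxy's mode and dual-stack Services.

kube_proxy_mode() {
  # kube-proxy serves its active mode (for example "iptables", "ipvs", or
  # "nftables") as plain text on /proxyMode of its metrics address.
  local addr="${1:-127.0.0.1:10249}"
  curl -fsS "http://${addr}/proxyMode"
}

service_ip_families() {
  # Prints a Service's ipFamilyPolicy followed by its IP families,
  # for example "PreferDualStack IPv4 IPv6" on a dual-stack Service.
  local svc="$1" namespace="${2:-default}"
  kubectl get service "$svc" -n "$namespace" \
    -o jsonpath='{.spec.ipFamilyPolicy} {.spec.ipFamilies[*]}'
}
```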

Section 3: Evolving Security and Storage Postures on Linux

Security and storage are foundational pillars of any production system. Kubernetes 1.29 introduces changes that align cluster security more closely with established Linux security primitives and enhance the flexibility of storage management.

Pod Security Admission and Linux Security Modules

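With PodSecurityPolicy (PSP) removed in Kubernetes 1.25, Pod Security Admission (PSA) is the built-in mechanism for enforcing pod security settings. PSA enforces the three Pod Security Standards profiles: `privileged`, `baseline`, and `restricted`. These profiles map directly to Linux security concepts. The `baseline` profile blocks known privilege escalations, such as privileged containers, sharing host namespaces, and disabling or overriding AppArmor and SELinux confinement with unsafe settings. The `restricted` profile goes further: it requires containers to run as non-root, forbids privilege escalation, requires dropping all Linux capabilities (only `NET_BIND_SERVICE` may be added back), and requires a seccomp profile of `RuntimeDefault` or `Localhost`.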

For Kubernetes users, this standardizes security practices and encourages cluster administrators to use the powerful, built-in security features of their chosen Linux distribution. For instance, a cluster running on Red Hat Enterprise Linux or Rocky Linux nodes can rely on their SELinux integration, while an Ubuntu-based cluster can use AppArmor profiles to harden workloads. This tight integration makes the underlying OS security model a first-class citizen in the orchestration layer.
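Before enforcing a profile on a namespace that already runs workloads, it helps to preview the impact. A server-side dry run of the label change asks the API server to evaluate the namespace's existing pods against the profile and print warnings for any that would violate it, without applying the label. The wrapper below is a hypothetical convenience function around that `kubectl` invocation.

```bash
# Hypothetical wrapper: preview a Pod Security level for a namespace without
# changing it. With --dry-run=server, the API server evaluates existing pods
# and returns warnings (on stderr) for those that would violate the level.
preview_pod_security_level() {
  local namespace="$1" level="${2:-restricted}"
  kubectl label --dry-run=server --overwrite ns "$namespace" \
    "pod-security.kubernetes.io/enforce=${level}"
}
```

For example, `preview_pod_security_level my-secure-namespace restricted` lists the pods that would need changes before the label is applied for real.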

Administrators can enforce these profiles at the namespace level. Here’s how you would label a namespace to enforce the `restricted` policy:

```bash
# Enforce the latest version of the restricted policy
kubectl label --overwrite ns my-secure-namespace \
  pod-security.kubernetes.io/enforce=restricted

# Alternatively, for a smoother rollout: enforce baseline while auditing and
# warning against restricted, then tighten enforcement once workloads comply
kubectl label --overwrite ns my-secure-namespace \
  pod-security.kubernetes.io/enforce=baseline \
  pod-security.kubernetes.io/audit=restricted \
  pod-security.kubernetes.io/warn=restricted
```

PersistentVolume Last Phase Transition Time

On the storage front, a subtle but important feature, Beta and enabled by default in 1.29, is the `lastPhaseTransitionTime` field in a PersistentVolume's (PV's) status. This field records the timestamp of when the PV last changed its phase (for example, from `Available` to `Bound`). While seemingly minor, it gives storage administrators valuable observability: it helps in debugging storage issues, tracking volume lifecycle events, and building automation for storage cleanup and management.

This feature complements the Container Storage Interface (CSI), which abstracts the underlying Linux storage systems. Whether you are using LVM, ZFS, Btrfs, or a network filesystem like NFS, the CSI driver handles provisioning. The new timestamp adds metadata that is invaluable for monitoring and automation scripts, a key part of modern Linux administration.
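Because the timestamp lives in each PV's `status`, it can be read with ordinary `kubectl` output options. The sketch below lists PVs with their phase and last transition time, then filters for `Released` volumes, a typical starting point for cleanup automation. The function names are hypothetical, and the timestamp column shows `<none>` for any PV that has no recorded transition time.

```bash
# Hypothetical helpers built on the PV status field lastPhaseTransitionTime.

list_pv_phase_transitions() {
  # One row per PersistentVolume: name, current phase, time it entered that phase
  kubectl get pv --no-headers \
    -o custom-columns='NAME:.metadata.name,PHASE:.status.phase,LAST_TRANSITION:.status.lastPhaseTransitionTime'
}

released_pvs() {
  # Print "name timestamp" for volumes sitting in the Released phase,
  # candidates for review by a cleanup job
  list_pv_phase_transitions | awk '$2 == "Released" { print $1, $3 }'
}
```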

Here is an example of a `StorageClass` manifest that might be used to provision volumes on an `ext4` filesystem using a CSI driver.

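```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: fast-ssd-ext4
# Placeholder driver name; use the name your CSI driver registers
provisioner: csi.example.com
parameters:
  # Driver-specific parameter (the key and value depend on the CSI driver)
  type: ssd
  # Standard key the CSI external-provisioner uses to pass the filesystem type
  csi.storage.k8s.io/fstype: ext4
reclaimPolicy: Delete
# Delay provisioning until a Pod using the claim is scheduled
volumeBindingMode: WaitForFirstConsumer
allowVolumeExpansion: true
```

Section 4: Best Practices and Navigating the Evolving Ecosystem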

Adopting Kubernetes 1.29 successfully requires more than just running `kubeadm upgrade`. It involves aligning your Linux environment and operational practices with the direction of the project.

Choosing the Right Linux Distribution

The choice of Linux distribution for your worker nodes is more important than ever.

- Enterprise Distributions: Systems like Red Hat Enterprise Linux, SUSE Linux Enterprise Server, and their derivatives (AlmaLinux, Rocky Linux) offer long-term support, SELinux integration, and enterprise-grade stability, making them ideal for production workloads.

- Minimalist Distributions: Distros like Alpine Linux or Flatcar Container Linux provide a reduced attack surface and minimal overhead, which is excellent for security-conscious and resource-constrained environments.

- General-Purpose Distributions: Stalwarts like Ubuntu Server and Debian offer a balance of up-to-date packages and stability, with a massive community and excellent support for tools like `containerd` and `microk8s`.

Your choice should be guided by your team’s expertise, your security requirements, and the need for commercial support.

Systemd and Kubelet Management

The kubelet typically runs as a `systemd` service on modern Linux systems, so mastering `systemd` is essential for Kubernetes administration. Make sure the kubelet is correctly configured, especially its cgroup driver: `systemd` is the recommended driver on systemd-based hosts, and the kubelet and the container runtime must use the same driver. Regularly use `systemctl` and `journalctl` to monitor the health of the kubelet and container runtime.
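Since the kubelet and container runtime must agree on the cgroup driver, a quick consistency check is worth scripting. The helpers below are a sketch: they read the `cgroupDriver` key from the kubelet configuration file and containerd's runc `SystemdCgroup` option. They assume the usual kubeadm path `/var/lib/kubelet/config.yaml` and the containerd 1.x `config.toml` layout, and the function names are hypothetical; pass other paths if your distribution differs.

```bash
# Hypothetical helpers: check that the kubelet and containerd agree on the
# cgroup driver. Default paths are common kubeadm/containerd 1.x locations.

kubelet_cgroup_driver() {
  local config="${1:-/var/lib/kubelet/config.yaml}"
  # KubeletConfiguration defaults cgroupDriver to cgroupfs when the key is absent
  awk '$1 == "cgroupDriver:" { gsub(/"/, "", $2); print $2; found = 1; exit }
       END { if (!found) print "cgroupfs" }' "$config"
}

containerd_uses_systemd_cgroup() {
  local config="${1:-/etc/containerd/config.toml}"
  # An uncommented "SystemdCgroup = true" selects runc's systemd cgroup driver
  grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$config"
}

cgroup_drivers_match() {
  local kubelet_driver expected=cgroupfs
  kubelet_driver="$(kubelet_cgroup_driver "${1:-/var/lib/kubelet/config.yaml}")"
  if containerd_uses_systemd_cgroup "${2:-/etc/containerd/config.toml}"; then
    expected=systemd
  fi
  [ "$kubelet_driver" = "$expected" ]
}
```

Running `cgroup_drivers_match || echo "kubelet and containerd cgroup drivers differ"` on each node catches the mismatch before it shows up as pod instability.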

```bash
# Check the status of the kubelet service
systemctl status kubelet

# Follow the kubelet's logs as they are written (Ctrl+C to stop)
journalctl -u kubelet -f --no-pager

# Check containerd's cgroup driver: "SystemdCgroup = true" under the runc
# runtime options means containerd uses the systemd cgroup driver
grep -n 'SystemdCgroup' /etc/containerd/config.toml
```

Kernel Version Matters

To take full advantage of features like eBPF, improved cgroup v2 support, and the latest security patches, running a modern Linux kernel is crucial. Follow kernel releases and make sure your distribution's kernel is recent enough to support the Kubernetes features you intend to use. This is especially important for networking and security functionality that relies on newer kernel subsystems.
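A small pre-flight check can confirm both points on a node. The sketch below compares the running kernel's base version against a minimum you supply and checks whether the host uses the unified cgroup v2 hierarchy. The function names are hypothetical, and no particular minimum is implied here: take the required version from your CNI's or feature's documentation.

```bash
# Hypothetical pre-flight helpers for a Kubernetes node.

version_at_least() {
  # Succeeds if version $1 >= version $2, using GNU sort's version ordering
  local have="$1" want="$2"
  [ "$(printf '%s\n%s\n' "$want" "$have" | sort -V | head -n1)" = "$want" ]
}

kernel_base_version() {
  # Strip the distribution suffix, e.g. "6.5.0-14-generic" -> "6.5.0"
  local release="${1:-$(uname -r)}"
  printf '%s\n' "${release%%-*}"
}

cgroup_v2_enabled() {
  # On a cgroup v2 (unified) host, /sys/fs/cgroup is a cgroup2fs mount
  [ "$(stat -fc %T /sys/fs/cgroup)" = "cgroup2fs" ]
}

node_ready_for() {
  # $1 is the minimum kernel version your documentation requires (placeholder)
  local min_kernel="$1"
  version_at_least "$(kernel_base_version)" "$min_kernel" && cgroup_v2_enabled
}
```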

Conclusion: A Tighter Integration for a Cloud-Native Future

Kubernetes 1.29 “Mandala” is a testament to the deepening, symbiotic relationship between the world’s leading container orchestrator and its foundational operating system, Linux. The enhancements in this release—from native sidecars and event-driven kubelet operations to the continued embrace of eBPF and standardized security profiles—are not just abstract features. They are practical, powerful changes that leverage the very best of the modern Linux kernel and its surrounding ecosystem.

For Linux professionals, this means that expertise in core Linux concepts (process management, networking, security modules, and filesystems) is more valuable than ever. As you plan your upgrade or new cluster deployment, look beyond the Kubernetes changelog and consider how these updates interact with your Linux distribution of choice. By aligning your infrastructure with these trends, you can build more efficient, secure, and resilient systems poised for the future of cloud-native computing. The message of this release is clear: the path forward is one of tighter integration and mutual evolution.