Mastering High-Availability SQL on Linux with Kubernetes: A Deep Dive

The New Frontier: Running Stateful SQL Databases in High-Availability Clusters on Linux and Kubernetes

For years, the conventional wisdom in the DevOps and cloud-native communities was to treat container orchestration platforms like Kubernetes as the domain of stateless applications. Databases, the quintessential stateful workloads, were often left to run on dedicated virtual machines or managed cloud services, separated from the containerized application tier. However, this paradigm is rapidly shifting. Recent advancements in the Linux and Kubernetes ecosystems are making it not just possible, but practical and highly advantageous, to run mission-critical SQL databases like PostgreSQL and MySQL directly on Kubernetes, even in complex high-availability (HA) clusters. This evolution is transforming infrastructure management for everything from multi-cloud data centers to IoT and edge computing deployments.

This technical article explores the architecture, implementation, and best practices for deploying highly available SQL databases on Linux-based Kubernetes clusters. We will delve into the core concepts, demonstrate practical SQL operations within this modern context, and provide actionable insights for database administrators, DevOps engineers, and system architects. The foundation of this entire stack remains a robust Linux distribution. Options range from Ubuntu and Red Hat Enterprise Linux to community-driven projects like Rocky Linux, and each provides the stable kernel and userspace tools that both Kubernetes and the databases it orchestrates depend on.

Section 1: The Architectural Shift – From Pets to Cattle, with StatefulSets

The “pets vs. cattle” analogy has long defined cloud-native thinking. “Cattle” are identical, disposable servers that can be replaced without effort, typical of stateless web frontends. “Pets” are unique, carefully tended servers, like traditional database hosts, that cause a major incident if they go down. The goal of running databases on Kubernetes is not to treat them like disposable cattle, but to build a resilient, automated “petting zoo” where if one pet becomes unhealthy, another is automatically promoted to take its place without manual intervention. This is achieved through several key Kubernetes concepts built on the solid foundation of the Linux kernel’s containerization features.

Core Kubernetes Components for Statefulness

To understand how Kubernetes manages state, we must first look at three critical components:

- PersistentVolumes (PVs): A piece of storage in the cluster, provisioned either by an administrator or dynamically through a StorageClass. A PV is a cluster resource, just as a node is. It abstracts away the underlying storage details, which could be anything from a local SSD on a node running Fedora to a cloud provider’s block storage like AWS EBS or Google Persistent Disk.

- PersistentVolumeClaims (PVCs): A request for storage by a user. Just as a pod consumes node resources, a PVC consumes PV resources. A PVC can request a specific size and access modes (e.g., ReadWriteOnce).

- StatefulSets: A workload API object used to manage stateful applications. Unlike a Deployment, a StatefulSet maintains a sticky, unique identity for each of its pods. This includes a stable network identifier and stable, persistent storage. If a pod in a StatefulSet fails, a replacement is created with the exact same identity and attached to the same persistent storage, ensuring data continuity.

Defining the Database Schema

Let’s consider a practical example: an IoT platform that collects time-series data from various sensors. The first step, regardless of the underlying infrastructure, is to define a proper schema. A well-designed schema is crucial for performance and scalability, especially in a distributed environment. Here is a basic schema for our sensor data using PostgreSQL syntax.

```sql
-- Define a table to store sensor metadata
CREATE TABLE sensors (
    sensor_id    SERIAL PRIMARY KEY,
    device_id    VARCHAR(100) NOT NULL UNIQUE,
    location     VARCHAR(255),
    sensor_type  VARCHAR(50),
    installed_at TIMESTAMPTZ DEFAULT NOW()
);

-- Define a table for the time-series readings
CREATE TABLE sensor_readings (
    reading_id          BIGSERIAL PRIMARY KEY,
    sensor_id           INT NOT NULL,
    reading_timestamp   TIMESTAMPTZ NOT NULL,
    temperature_celsius DECIMAL(5, 2),
    humidity_percent    DECIMAL(5, 2),
    -- Establish a foreign key relationship to the sensors table
    CONSTRAINT fk_sensor
        FOREIGN KEY (sensor_id)
        REFERENCES sensors (sensor_id)
        ON DELETE CASCADE
);
```

This schema establishes a clear relationship between sensors and their readings. The use of TIMESTAMPTZ (timestamp with time zone) is a best practice for applications that may span multiple geographic locations, a common scenario in IoT and multi-cloud deployments. This structure is the foundation upon which we’ll build our high-availability strategy.
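The following sketch shows why TIMESTAMPTZ helps when sensors span time zones. It writes one instant as Tokyo local time (+09) and reads it back under different session time zones. PostgreSQL stores a TIMESTAMPTZ as an absolute point in time and converts it to the session’s TimeZone setting only for display. The timestamp and zone names are illustrative values, and the output comments assume the default ISO DateStyle.

```sql
-- One instant, written as local time in Tokyo (UTC+9).
SET TIME ZONE 'UTC';
SELECT TIMESTAMPTZ '2024-03-01 12:00:00+09' AS reading_ts;
-- 2024-03-01 03:00:00+00

-- The same instant, displayed for a session in New York (EST, UTC-5).
SET TIME ZONE 'America/New_York';
SELECT TIMESTAMPTZ '2024-03-01 12:00:00+09' AS reading_ts;
-- 2024-02-29 22:00:00-05

-- AT TIME ZONE converts to a plain TIMESTAMP in a site's local time,
-- e.g. for a per-site dashboard.
SELECT TIMESTAMPTZ '2024-03-01 12:00:00+09' AT TIME ZONE 'Asia/Tokyo' AS local_ts;
-- 2024-03-01 12:00:00

-- Restore the session's default time zone.
RESET timezone;
```

Only the rendering changes between sessions; the stored value, and therefore comparisons and range filters on reading_timestamp, are unaffected by where the client sits.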

Section 2: Implementing High Availability with Database Operators

Simply running a single database instance in a StatefulSet provides persistence, but not high availability. If the node hosting the database pod fails, Kubernetes will eventually reschedule the pod. However, the database stays unavailable until the replacement starts and reattaches its volume. True HA requires a multi-instance, clustered configuration, typically with a primary instance for writes and one or more replica instances for reads and failover. Managing this lifecycle (provisioning, failover, and recovery) is complex, and this is where the Kubernetes Operator pattern shines.

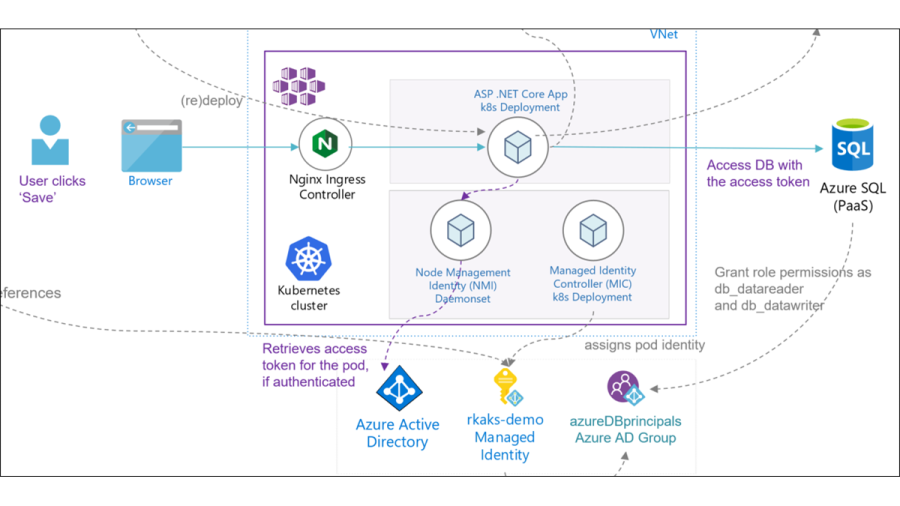

The Role of Kubernetes Operators

An Operator is a custom Kubernetes controller that uses custom resources to manage applications and their components. For databases, an Operator encodes the operational knowledge of a human database administrator into software. For example, PostgreSQL operators such as Crunchy Data’s PGO or Zalando’s postgres-operator can automate the tasks below. Both build on Patroni, a high-availability manager for PostgreSQL.

- Cluster Bootstrapping: Creating a primary database and configuring streaming replication to new replicas.

- Health Monitoring: Continuously checking the health of the primary and replica instances.

- Automated Failover: If the primary instance fails, the operator will detect it, trigger a leader election among replicas, promote the most up-to-date replica to become the new primary, and reconfigure all other replicas to follow the new primary.

- Endpoint Management: Updating Kubernetes Services to ensure application traffic is always directed to the correct primary (for writes) and replicas (for reads).
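After an operator promotes a replica, it can be useful to confirm from SQL which role an instance currently holds and how its standbys are attached. The queries below use only built-in PostgreSQL functions, views, and settings. They are a diagnostic sketch, not part of any operator’s API. On a primary without standbys, `pg_stat_replication` simply returns no rows.

```sql
-- false on the primary, true on a streaming replica (hot standby)
SELECT pg_is_in_recovery() AS is_replica;

-- On the primary: one row per connected standby. sync_state shows whether
-- that standby is 'async', 'potential', 'sync', or 'quorum'.
SELECT application_name, client_addr, state, sync_state, replay_lag
FROM pg_stat_replication;

-- Which standbys (if any) must confirm a commit before it is acknowledged.
-- An empty value means commits do not wait for any standby (asynchronous).
SHOW synchronous_standby_names;
SHOW synchronous_commit;
```

In operator-managed clusters, these replication settings are usually owned by the operator. Patroni, for instance, maintains `synchronous_standby_names` itself when its synchronous mode is enabled. For that reason, it is safer to inspect them from SQL and change them through the operator’s configuration than to edit them by hand.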

Querying in a Clustered Environment

With a primary-replica setup, a common optimization is to route read-only queries to the replicas, reducing the load on the primary instance. This is particularly effective for applications with a high read-to-write ratio, such as dashboards or analytics platforms. Applications connect to a read-only Kubernetes Service that load-balances traffic across all healthy replica pods.

Here’s an example of a typical analytical query that can safely run against a read replica. It only reads data and can tolerate results that lag slightly behind the primary. It finds the average temperature and humidity for a specific device over the last 24 hours.

```sql
-- This query is ideal for offloading to a read replica
SELECT
    s.device_id,
    s.location,
    AVG(sr.temperature_celsius) AS average_temperature,
    AVG(sr.humidity_percent)    AS average_humidity,
    COUNT(sr.reading_id)        AS number_of_readings
FROM sensors s
JOIN sensor_readings sr ON s.sensor_id = sr.sensor_id
WHERE s.device_id = 'device-A1B2-3C4D'
  AND sr.reading_timestamp >= NOW() - INTERVAL '24 hours'
GROUP BY s.device_id, s.location
ORDER BY s.device_id;
```

This separation of read and write workloads is a cornerstone of scalable database architecture. With an operator in place, the Kubernetes Services keep pointing at the current primary and the healthy replicas as pods come and go. The application only needs to send each query to the appropriate Service. This pattern is widely used across Linux distributions, from Debian servers to enterprise-grade SUSE Linux deployments.
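By default, replicas apply changes asynchronously, so a dashboard served from a replica may be slightly behind the primary. The minimal check below runs on a replica. It shows how stale the replica is and confirms that it only accepts read-only transactions. This is a sketch with one caveat: the delay also grows while the primary is simply idle, because it measures time since the last replayed transaction.

```sql
-- Run on a replica. pg_last_xact_replay_timestamp() is the commit time of
-- the last transaction replayed from the primary; it is NULL on a server
-- that has never been in recovery.
SELECT
    pg_is_in_recovery()                     AS is_replica,
    now() - pg_last_xact_replay_timestamp() AS replay_delay;

-- 'on' on a hot standby, which accepts only read-only transactions.
SHOW transaction_read_only;
```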

Section 3: Advanced Operations: Transactions, Indexing, and Performance

Running a database in a dynamic, orchestrated environment demands careful attention to data integrity and performance. ACID (Atomicity, Consistency, Isolation, Durability) guarantees are paramount, and query performance can be the difference between a responsive application and a failing one.

Ensuring Atomicity with Transactions

Transactions ensure that a series of operations either all succeed or all fail together, maintaining data consistency. This is critically important in a distributed system, where a network partition or pod failure could interrupt a multi-step process. In that case, PostgreSQL discards any transaction that had not committed. All write operations must be sent to the primary instance, because streaming replicas are read-only and reject writes.

Imagine a scenario where a new sensor is installed. We need to add its metadata to the sensors table and also log its first reading. These two operations must be atomic.

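```sql
-- Start a transaction block
BEGIN;

-- Insert the new sensor metadata. The RETURNING clause (a PostgreSQL
-- extension to standard SQL) reports the newly generated sensor_id.
INSERT INTO sensors (device_id, location, sensor_type)
VALUES ('device-E5F6-7G8H', 'Warehouse B, Rack 3', 'temp-humidity')
RETURNING sensor_id;

-- Insert the initial reading for this new sensor. currval() returns the
-- value this session just drew from the sensors sequence, so no ID is
-- hard-coded and concurrent sessions cannot interfere.
INSERT INTO sensor_readings (sensor_id, reading_timestamp, temperature_celsius, humidity_percent)
VALUES (currval(pg_get_serial_sequence('sensors', 'sensor_id')), NOW(), 21.5, 45.8);

-- If both operations succeeded, commit the transaction.
-- If any statement failed, the transaction is aborted and COMMIT rolls it back.
COMMIT;
```

Atomicity also holds across a failover. Replicas replay the write-ahead log, so a newly promoted primary never contains a half-applied transaction. Durability is subtler. With the default asynchronous replication, a transaction could have committed on the old primary without having been streamed to a replica yet. If that replica is then promoted, the transaction is lost. Synchronous replication (`synchronous_commit` together with `synchronous_standby_names`) closes that gap at the cost of higher write latency, and PostgreSQL operators commonly expose it as a configuration option.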
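The transaction above has to carry the generated sensor_id from the first INSERT into the second. An alternative sketch uses a data-modifying common table expression (CTE), so both inserts run as a single statement. PostgreSQL executes a single statement atomically even without an explicit BEGIN/COMMIT. The sketch assumes the sensors and sensor_readings tables defined earlier, and the device ID, location, and reading values are hypothetical.

```sql
-- Both INSERTs form one statement, so they succeed or fail together.
WITH new_sensor AS (
    INSERT INTO sensors (device_id, location, sensor_type)
    VALUES ('device-I9J0-K1L2', 'Warehouse B, Rack 4', 'temp-humidity')
    RETURNING sensor_id
)
INSERT INTO sensor_readings (sensor_id, reading_timestamp, temperature_celsius, humidity_percent)
SELECT sensor_id, NOW(), 22.1, 44.0
FROM new_sensor
RETURNING sensor_id, reading_id;
```

Because it is a single statement, a client driver running in autocommit mode still gets all-or-nothing behaviour. It also needs only one round trip to the primary, which can matter when the application and database pods sit in different zones.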

Optimizing Query Performance with Indexing

As the sensor_readings table grows to millions or billions of rows, queries will become slow without proper indexing. An index is a data structure that improves the speed of data retrieval operations on a database table. In our IoT example, we will frequently query readings by sensor and by time. Therefore, a composite index on `(sensor_id, reading_timestamp)` is essential.

```sql
-- Create a composite index on the sensor_readings table.
-- This speeds up queries that filter by a specific sensor and a time
-- range, which is our most common query pattern.
CREATE INDEX idx_sensor_readings_sensor_id_timestamp
    ON sensor_readings (sensor_id, reading_timestamp DESC);

-- PostgreSQL can scan a B-tree index in either direction, so DESC mainly
-- matters when a query mixes sort directions across columns. Here it
-- simply matches the "most recent readings first" ordering used below.

-- Analyze the query plan to see the index in action.
-- Note: EXPLAIN ANALYZE actually executes the query.
EXPLAIN ANALYZE
SELECT temperature_celsius
FROM sensor_readings
WHERE sensor_id = 101
  AND reading_timestamp >= NOW() - INTERVAL '1 day'
ORDER BY reading_timestamp DESC
LIMIT 10;
```

Creating the right indexes is a critical part of database administration, and running on Kubernetes does not change that. Schema migrations and index creation should be managed through controlled processes, often integrated into a CI/CD pipeline, which is a key practice in modern DevOps.
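On a busy primary, a plain CREATE INDEX blocks writes to the table until the build finishes, and an IoT ingestion pipeline may not tolerate that pause. The sketch below shows how a migration step could build the same index with CREATE INDEX CONCURRENTLY instead, assuming the sensor_readings table above.

```sql
-- CONCURRENTLY builds the index without taking a lock that blocks
-- INSERT/UPDATE/DELETE on sensor_readings. It cannot run inside a
-- transaction block, so the migration tool must execute it on its own.
CREATE INDEX CONCURRENTLY IF NOT EXISTS idx_sensor_readings_sensor_id_timestamp
    ON sensor_readings (sensor_id, reading_timestamp DESC);

-- A failed concurrent build leaves an INVALID index behind; check for it.
SELECT c.relname AS index_name, i.indisvalid
FROM pg_index i
JOIN pg_class c ON c.oid = i.indexrelid
WHERE i.indrelid = 'sensor_readings'::regclass;
```

IF NOT EXISTS keeps the migration re-runnable. However, it also silently skips a leftover invalid index with the same name, which is why the validity check follows. An index reported with indisvalid = false should be dropped (for example with DROP INDEX CONCURRENTLY) and rebuilt.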

Section 4: Production-Ready Best Practices and Optimization

Deploying a stateful database on Kubernetes in production requires more than just a functional operator. It requires a holistic approach to storage, networking, monitoring, and security.

Storage and Filesystem Considerations

Choose your storage wisely. For databases, you need a StorageClass that provisions high-IOPS, low-latency persistent storage. On the underlying Linux nodes, which might run anything from CentOS derivatives to Arch Linux, a robust and performant filesystem such as XFS or ext4 is standard. Tools such as LVM and Linux software RAID (mdadm) can be relevant for managing storage on bare-metal nodes.

Monitoring and Observability

You cannot effectively manage what you cannot see. A robust monitoring stack is non-negotiable.

- Prometheus: The de facto standard for metrics collection in the Kubernetes world. Use a database exporter (e.g., `postgres_exporter`) to expose metrics such as transaction throughput, replication lag, cache hit ratio, and active connections for Prometheus to scrape.

- Grafana: Visualize the metrics collected by Prometheus in meaningful dashboards. This allows you to set up alerts for critical conditions, such as high replication lag or low disk space.

- Logging: Aggregate logs from all database pods using a stack like ELK (Elasticsearch, Logstash, Kibana) or Loki. This is essential for troubleshooting, especially during and after a failover event. Many of the principles familiar from Linux system logging and journalctl apply here.
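The queries below compute a few of these metrics directly from PostgreSQL’s statistics views, which helps clarify what each metric means. They are an illustrative sketch of the kind of data an exporter reads, not the actual queries `postgres_exporter` runs.

```sql
-- Cache hit ratio across all databases: the fraction of block reads served
-- from shared buffers. NULLIF avoids division by zero on an idle server.
SELECT round(sum(blks_hit)::numeric
             / NULLIF(sum(blks_hit) + sum(blks_read), 0), 4) AS cache_hit_ratio
FROM pg_stat_database;

-- Active connections: sessions currently executing a statement.
SELECT count(*) AS active_connections
FROM pg_stat_activity
WHERE state = 'active';

-- On the primary only: replication lag per standby, measured in bytes of
-- WAL the standby has not yet replayed.
SELECT application_name,
       pg_wal_lsn_diff(pg_current_wal_lsn(), replay_lsn) AS replay_lag_bytes
FROM pg_stat_replication;
```

A falling cache hit ratio or a steadily growing replay_lag_bytes are typical candidates for the Grafana alerts mentioned above.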

Backup and Disaster Recovery

High availability protects against instance or node failure, but not against data corruption, accidental deletion, or the loss of an entire cluster. A solid backup strategy is therefore essential. Many database operators include features for scheduling backups to S3-compatible object storage. Tools like BorgBackup or Restic, popular in the Linux backup world, can also be scripted to copy backups off-site, providing an additional layer of protection. Point them at the output of `pg_dump` or `pg_basebackup` rather than at a live data directory. Copying the files of a running database does not by itself produce a consistent backup.
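Operator-managed backups to object storage commonly rely on continuous WAL archiving for point-in-time recovery. If your setup works this way (an assumption to verify for your operator), a quick health check from SQL confirms that archiving is enabled and not failing. A growing failed_count or a stale last_archived_time deserves an alert.

```sql
-- 'on' (or 'always') when WAL archiving is enabled.
SHOW archive_mode;

-- One row of cumulative archiver statistics for the whole server.
SELECT archived_count,
       last_archived_wal,
       last_archived_time,
       failed_count,
       last_failed_wal,
       last_failed_time
FROM pg_stat_archiver;
```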

Conclusion: The Future is Stateful on Linux and Kubernetes

The convergence of mature container orchestration, the robust Linux kernel, and the sophisticated Kubernetes Operator pattern has fundamentally changed the landscape for stateful applications. Running high-availability SQL databases on Kubernetes is no longer a bleeding-edge experiment but a viable and powerful production strategy. It offers a unified control plane for both stateless and stateful workloads, simplifying operations, enabling multi-cloud portability, and bringing the benefits of automation and self-healing to the core of the data tier.

By leveraging StatefulSets for stable identity, Operators for expert automation, and a suite of cloud-native tools for observability and management, organizations can build resilient, scalable, and modern data platforms. The journey requires careful planning around storage, networking, and data protection, but the operational efficiencies and architectural consistency gained are immense. As the Linux cloud ecosystem continues to evolve, this tight integration between the operating system, container orchestration, and complex stateful services will only become more critical for building the next generation of applications.